* [de] BITTE BEACHTEN SIE:

Sie verwenden einen veralteten Browser. Es ist unsicher und nicht mehr für moderne Webstandards geeignet.

Bitte aktualisieren (oder ändern) Sie Ihren Browser, um unsere Website so anzuzeigen, wie sie angezeigt werden soll.

Wir empfehlen die Browser 'Safari', 'Firefox' oder 'Brave'.

DANKE

—Klicken Sie, um diese Nachricht zu schließen—

* [pl] UWAGA:

Używasz przestarzałej przeglądarki. Nie jest ona bezpieczna i nie odpowiada nowoczesnym standardom internetowym.

Zaktualizuj (lub zmień) swoją przeglądarkę, aby wyświetlać naszą stronę w sposób, w jaki powinna być widoczna.

Polecamy przeglądarki „Safari”, „Firefox” lub „Brave”.

DZIĘKUJEMY

—Kliknij, aby zamknąć tę wiadomość—

* [en] PLEASE NOTE:

You are using an outdated browser. It is unsafe and no longer suitable for modern web standards.

Please, update (or change) your browser to view our site as it is intended to be seen.

We recommend 'Safari', 'Firefox' or 'Brave' browsers.

THANK YOU

—Click to dismiss this message—

Security News

Security News

Security News

Security News

On this page

you get important news and warnings about security and privacy on internet!

(Be patient – loading of this page takes few seconds.)

On this page, I give you the latest news, warnings and advice on the subject of security and privacy on the internet. You alone can take care of your own security and privacy and this requires some knowledge, strategy and constant vigilance.

(On the PRIVACY POLICY page, you will find my recommendations for a broad strategy to protect your computer from hackers.)

DISCLAIMER:

- Copyrights belong to each article's respective author.

- Article links lead to external websites, where you will be tracked, most likely.

1. 1 PASSWORD Manager Appby Agilebits SoftwareA range of topics on security, focused on password management.

2026-06-02, 00:00

Zero-Shot Learning is a podcast about how AI gets built, secured, and deployed. Hosted by Nancy Wang, 1Password CTO, and Dev Tagare, Senior Director of Engineering at Google, it's a builder's view of the architecture and the complex choices it takes to ship with AI.

As Chief Product Officer at Vercel, Tom Occhino joined Zero-Shot Learning to discuss how AI is reshaping the developer workflow, from frontend architecture to v0, Vercel's production-ready AI coding assistant. What started as a conversation about AI-assisted development became a case for access control as a design decision, not a security afterthought.

How AI changes the developer security model

As part of the team that built and shipped React at Facebook, Tom helped replace MVC patterns with a component-based model that changed how an entire generation of engineers reasoned about interfaces. He calls what's happening now with AI-assisted development "a fundamentally different approach to software."

Where the earlier shift changed how developers organized their thinking, this one changes who or what creates and operates software. In the past, a developer working on component architecture brought years of professional judgment to those decisions. Today, a non-technical worker using an agent in that same workflow does not, and when that agent can call tools, the gap can't be covered by training. Authorization has to be built into the architecture.

Vercel's AI SDK makes it easier for agents to call tools, which adds to its appeal, but also means it requires stronger safeguards. "Putting on my security hat," Nancy said, "how do you make sure that these agents don't get exploited?"

"Under no circumstances are we encouraging code execution on the client," Tom replied.

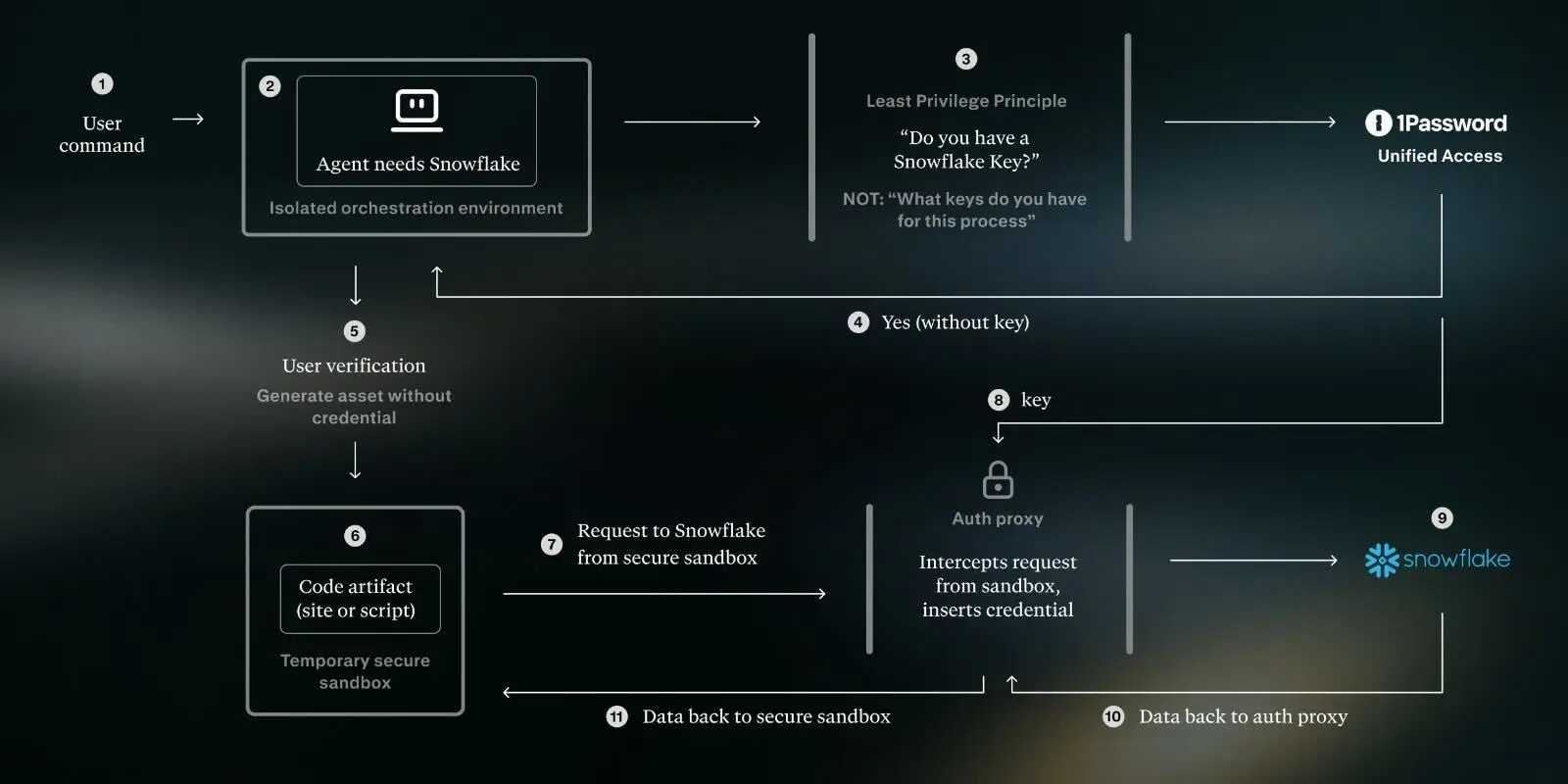

Vercel builtSandbox because agent-driven development requires an environment without access to production secrets, environment variables, or configuration, so untrusted code doesn’t touch production by default. Sandbox limits what an agent can read or modify locally.

Outbound access needs authZ policy too. "There are outgoing requests that come from that sandbox," Tom said. "Who are they allowed to talk to, and in what capacity?"

Tom drew each boundary deliberately, inbound and outbound, before anything shipped. An agent that can't read your production secrets can still make outbound calls to wherever it chooses. One boundary without the other still leaves the agent free to act where it shouldn't.

When everyone builds, access must be secure by default

To secure the new group of people who can build with AI, products must be secure by default.

Especially as you open access to these tools to many more people who lack the security fundamentals from the first 15 or 20 years of their career," Tom said, "we need to be creating systems that are secure by default and safe by default."

Imagine that a product manager wants to track customer health without waiting on the analytics team and builds a dashboard overnight using an AI-assisted coding platform. The AI pulls account data from Salesforce, usage metrics from Mixpanel, and support ticket volume from Zendesk. To make it work quickly, the PM pastes API keys and account tokens directly into the app. Those credentials carry the PM's full permissions across all three platforms, including access to customer records the dashboard will never need. They share the link with their team, and suddenly several people are querying live customer data through an app nobody in security knows exists, usingcredentials that won't expire, with an agent that can't be attributed to individual users, and that has no revocation path if the PM leaves the company.

"We need that untrusted code execution environment that does not have access to production secrets," Tom said. In our example, the PM's dashboard is what it looks like when permissions are inherited by default.

It's an open area of research, Tom acknowledged, and one 1Password is already working through.

How 1Password makes the secure path the easy path

"You've got to make the paved path the easy path, because if security gets hard, it risks becoming an afterthought,” Nancy said.

“Make the secure way the easy way” is the design logic Tom applied to Vercel Sandbox, understanding that if the secure option requires extra steps, most developers won't take them.

The insecure way is already documented in many codebases. An SSH key is a plain-text file on a developer terminal. API tokens are hardcoded into scripts. Environment variables are inherited by anything running in that environment with no encryption, access controls, or audit trail. Just a file.

1Password Unified Access serves as the authorization layer between the agent and the systems it connects to. Credentials move from vault to runtime without passing through a file, a config, or a clipboard, and are evaluated in context when access is requested, not carried over from setup. The shift from always-on access that developers must manually provision to just-in-time authorization is where the agent gets only what it needs for the task at hand and nothing more. There are no keys to rotate, no authorization to revoke, and nothing to explain to a security team after the fact. It’s a change that fundamentally reduces risk and manual effort from developer workflows.

Vercel's integration with 1Password brings agentic access control directly into the cloud sandbox environment that Tom described. An agent calling tools through Vercel's AI SDK needs credentials to do useful work. Those credentials don't have to be long-lived or broadly scoped. They don't have to live in the agent's context at all.

Tom calls Vercel's platform strategy "the operating system of agents." The authorization decisions made at the design stage become the authorization model that everyone using the product inherits.

Designing access control for agents and AI-assistants

In "We solved the blank canvas problem," Tom joined Zero-Shot Learning to talk about generating ideas faster with AI, and the conversation arrived at why that requires designing authorization from the start. Access control has always been a requirement; what's changing is when in the process it gets built.

Watch the full episode with Vercel’s Tom Occhino

Tom Occhino joined Nancy Wang and Dev Tagare on Zero-Shot Learning, 1Password's podcast on agentic AI and the people building it.

Watch now2026-05-29, 00:00

May was A&PI Heritage Month, and at 1Password, we're proud to shine a light on the people who bring these perspectives to life in our work and help shape our culture every day.

This year, we decided to spotlight Stephanie Cheng, Senior Customer Trainer and a leader within our A&PI Employee Resource Group. With over five years at 1Password, Stephanie has built her career around helping people feel capable, confident, and supported, whether she's onboarding a new customer or creating space for her colleagues to connect and belong. We sat down with her to learn more about her approach to customer education, the community she helps lead, and what A&PI Heritage Month means to her.

You’ve spent five and a half years training thousands of customers on something they rely on every day to stay secure. What has that experience taught you about people, about learning, and about what it really takes for something to click?

One thing this experience has taught me is that learning really happens when people feel comfortable enough to ask questions and admit what they don’t know. Especially in security, people can feel intimidated or worried about “doing it wrong,” so a big part of my role became creating an environment where people felt supported rather than overwhelmed. It’s taught me that what makes something “click” usually isn’t just the technical explanation; it’s helping people understand why it matters in the context of their own work and daily habits. The most effective training moments happen when customers can connect the product back to something tangible in their world. Over time, I’ve also learned that good training is less about presenting information and more about listening. Every customer approaches technology differently, and being able to adapt to different learning styles, technical comfort levels, and goals has been one of the most rewarding parts of the job.

Is there a customer interaction or training moment that has stuck with you? Something that surprised you or shifted how you approach your work?

One interaction that has always stayed with me happened during my first year on the team.

After one of my training sessions, a customer actually reached out to their Account Executive to ask for my manager’s email so they could share positive feedback directly with her and senior leadership, including our CRO. I was pleasantly surprised at the time because I was still relatively new in my role, and I hadn’t realized how much of an impact those sessions could have on someone’s experience.

As we later introduced surveys and more formal feedback channels, I continued to receive positive comments from customers mentioning me by name. What stood out most wasn’t just the feedback itself, but realizing that customers remembered how the training made them feel. They felt more confident, supported, and comfortable using something that was important to their day-to-day work and security.

That experience really shaped how I approach customer education. It taught me that effective training isn’t just about transferring knowledge; it’s about building trust, creating a supportive environment, and helping people feel empowered rather than intimidated. That mindset has stayed with me since.

As part of the A&PI leadership team, how has the experience stretched you? What’s a skill or perspective you’ve developed that you wouldn’t have otherwise?

Being part of the A&PI leadership team has stretched me in ways that are very different from my day-to-day role. It gave me the opportunity to think more intentionally about community-building, engagement, and creating experiences that bring people together in meaningful ways.

One thing I’ve developed is a stronger understanding of how much thought and coordination go into building inclusive spaces. Whether it’s organizing events, coordinating speakers, or creating opportunities for people to connect socially, I’ve learned how important it is to create experiences that feel approachable and welcoming for a wide range of people.

It’s also helped me grow my leadership skills outside of formal authority. A lot of ERG work happens through collaboration, initiative, and shared ownership, and I’ve learned how impactful thoughtful planning and consistency can be in building trust and engagement over time.

You’ve played a big role in bringing the A&PI community to life – from Lunar New Year ramp cards to monthly socials. What has building that community meant to you personally? And what have you seen it make possible for others?

Building the A&PI community has been incredibly meaningful because it created opportunities for people to feel seen, connected, and celebrated in ways that go beyond day-to-day work.

One thing I’ve loved most is seeing how different types of events create different entry points for connection. Some people joined our monthly socials where we’d talk through relatable topics or do creative activities like building seasonal mood boards together for Thanksgiving or Spring. Others signed up for CliftonStrength assessments, watercolour classes, or purchased A&PI-authored books that they later shared back with the group. I also really valued being able to support Asian-owned local businesses through some of our initiatives. It made the celebrations feel more connected to the broader community outside of work as well.

Those moments helped create opportunities for people to connect in ways that felt authentic to them, whether that was through conversation, creativity, learning, or cultural celebrations. It reminded me that community isn’t built through one big event – it’s built through consistent, thoughtful experiences that make people feel welcomed and included over time. It is so exciting to have returning members join monthly socials, and to see new faces show up and continue to stay engaged. Even though we are all working virtually, the sense of community really brings everyone closer together!

What does it mean to you to be celebrating A&PI Heritage Month at work, specifically? Why does it matter that this happens inside a company and not just outside of it?

Celebrating A&PI Heritage Month within a company matters because it creates intentional space for people to share culture, stories, and experiences that may not otherwise surface in day-to-day work conversations. It also gives others an opportunity to learn, engage, and build connections across different backgrounds in a meaningful way.

Outside of work, I volunteer with Asian Roots Collective, a community that creates spaces centred around familiarity, belonging, and celebrating Asian excellence across sports, arts, food, entertainment and beyond. One of the things I’ve always appreciated most about that experience is seeing how powerful it can be when people feel represented, understood and connected to a community that reflects part of their identity and experiences.

That perspective has shaped how I think about community-building at work as well. We spend such a significant part of our lives in the workplace, so feeling seen and included there has a real impact on belonging and how comfortable people feel showing up as themselves.

Through the A&PI ERG, I want to help create some of that same sense of connection and community that I’ve experienced outside of work - whether that’s through cultural celebrations, creative events, or simply giving people opportunities to gather and feel part of something together. To me, that’s what makes these spaces so meaningful: they help people feel represented, welcomed, and connected beyond just their role or title.

Stephanie's story is a reminder that the most meaningful work, whether it's guiding a customer through a new product or helping a colleague feel seen and celebrated, comes down to the same thing: creating environments where people feel safe, supported, and empowered to show up fully. As we close out our celebration of A&PI Heritage Month, we're grateful for the community Stephanie helps build and for the care she brings to every interaction, on and off the screen.

If you’re curious about building a career at 1Password, we invite you to explore our open roles and learn more about how you can be part of our growing team. https://1password.com/careers

2026-05-29, 00:00

In the constantly evolving world of enterprise tech, there’s one thing that IT and security teams have always been able to count on: users won’t follow policy if they think it’s standing in the way of their productivity.

Case in point: 1Password’s most recent annual report found that 52% of employees have downloaded apps without IT approval. These shadow IT apps typically sit outside a company’s SSO provider, and introduce both unmanaged risk and cost.

That governance gap has become more pressing with the growing adoption of AI tools and agents, which introduce new and worsening threats. This issue was the focus of 1Password’s recent webinar, “The unmanaged stack: Governing SaaS apps and AI tools outside SSO.”

What is the unmanaged stack? It refers to all of the SaaS apps and AI-based tools that can’t be managed by traditional IAM tools, whether that’s due to software constraints or the infamous “SSO tax.”

During the webinar, Evan Sandhu, 1Password Product Marketing Specialist, and Ethan Stoler, Senior Demo Engineer, explored how 1Password’s solutions can help IT and security teams secure and govern these unapproved or unmanaged access points.

Read on for an in-depth recap of the webinar’s key themes.

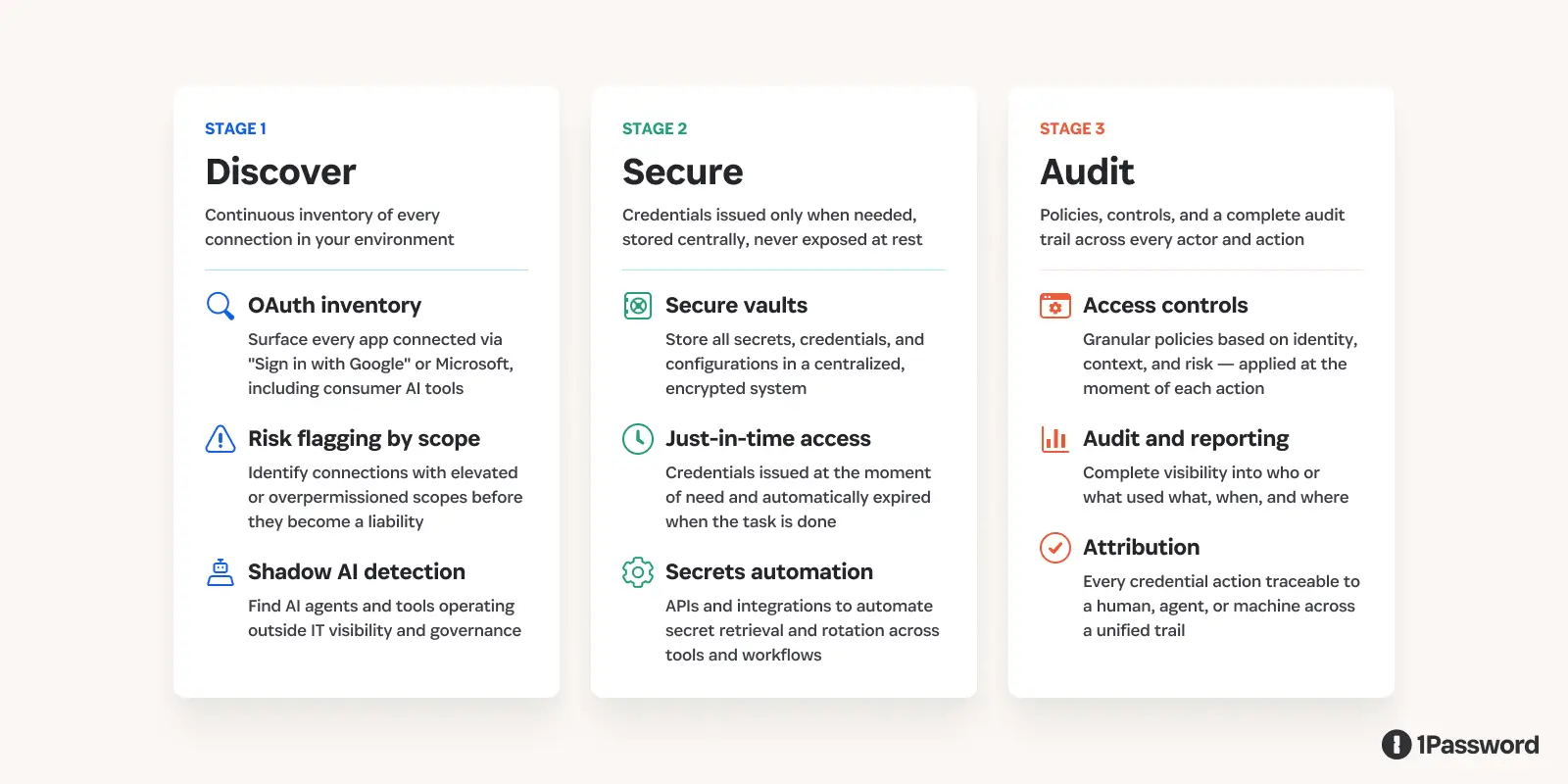

New integration features to manage high-risk SaaS and AI

IT and security teams need solutions to manage those apps that fall outside the purview of SSO. Thankfully, new integrations between 1Password Enterprise Password Manager (EPM) and 1Password SaaS Manager are able to do just that.

“With SaaS Manager integrating with EPM, you can now discover sensitive and shared accounts stored in EPM vaults, surface them for review, and let IT take ownership of them, moving them from end-user management to IT management.”

– Evan Sandhu, The unmanaged stack: Governing SaaS apps and AI tools outside SSO

During the webinar, Evan Sandhu explored how these integration features work across three different categories:

Discover: With Vault Insights, teams can discover sensitive or shared logins across an organization’s EPM vaults. And with Browser Insights (beta) teams can surface login activity from the 1Password browser extension to reveal unapproved app usage.

Review: With an Account Risk Report, teams can review the surfaced accounts and credentials according to their risk level, enabling admins to prioritize remediation.

Govern: With Account Governance, IT and security teams can take over management of any of the discovered high-risk logins for sensitive or shared accounts.

In a live demo of these features, Ethan Stoler showed in real time how quickly this integration can surface various credential risks for critical applications like GitHub, and how simple it is for admins to govern those risks.

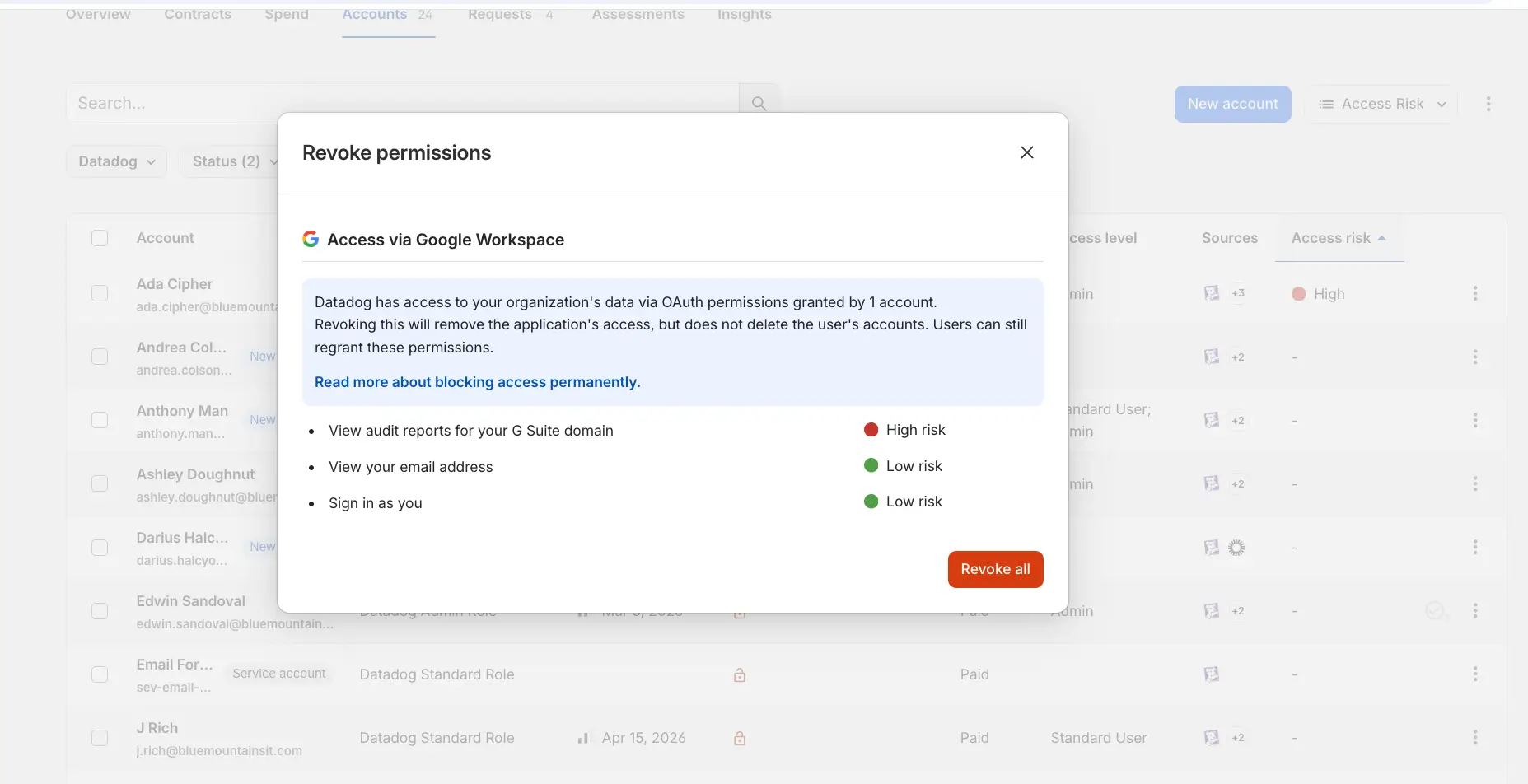

How 1Password SaaS Manager helps prevent OAuth supply chain attacks

OAuth-based supply chain attacks are a growing concern for today’s companies. These attacks tend to play out like so:

An employee connects a third-party tool using “sign in with Google” or “sign in with Microsoft.”

Permissions are granted, then forgotten.

That third-party tool gets compromised.

The attacker walks into your systems with a valid key.

In this scenario, the attacker is making authenticated requests within the approved permission scopes; there are no failed logins, anomalies, or privilege escalation attempts that can be detected by a team’s SIEM provider, CASB, or anomaly detection tools.

The key to managing this risk doesn’t lie in preventing every OAuth connection or blocking every third-party tool. Rather, as Sandhu put it during the webinar, “Prevention requires knowing which connections exist right now at this moment, and ensuring access is granted only when needed. This is exactly what 1Password SaaS Manager does.”

1Password SaaS Manager can help companies:

Discover risky OAuth connections: Teams can continuously surface Oauth connections and flag connections with elevated permission scopes.

Secure Access: Admins can revoke access with a single action, set policies to restrict OAuth, and reduce standing privilege exposure.

Audit Actions: Every access change is automatically logged, providing teams with defensible audit records for compliance standards like SOC 2, ISO 27001, and HIPAA.

These abilities mean that 1Password SaaS Manager is uniquely able to help teams manage risks related to OAuth supply chain attacks – and the other risks associated with a company’s unmanaged stack of shadow IT and AI.

New AI integrations to help you govern ChatGPT, Claude, Gemini, and Cursor

Unfortunately, even company-approved AI tools often can’t integrate cleanly or affordably with SSO.

As Sandhu stated, “Let’s say someone needs access to an AI platform. You create accounts for them, and it’s done one by one for every tool. You as an IT admin are constantly context switching between every different admin console to check who’s using what, how many tokens they’re spending, and how much usage they’re getting.”

This is why the webinar also highlighted five new AI integrations within 1Password SaaS Manager, including:

ChatGPT and openAI

Claude

Cursor

Google Gemini

These integrations are built with full lifecycle governance, including onboarding and offboarding workflows, in mind. As Sandhu put it, “You can assign roles, whether to individuals or groups. You can log specific metrics like usage and token spend. And you have full deprovisioning and provisioning capabilities as well. All of this is done in 1Password SaaS Manager.”

Ethan Stoler’s demo showed how simple it is to discover and manage these unapproved AI tools, including setting up workflows that let teams automate and customize their management processes.

What should teams do next?

To summarize the main points of the webinar:

1Password EPM and 1Password SaaS Manager have new integration capabilities that enable them to discover and govern high-risk logins.

1Password SaaS Manager is able to help companies surface, secure, and audit the unsanctioned AI tools and agents that can put companies at risk.

1Password SaaS Manager has new integrations with major AI companies that provide admins with a central tool for full lifecycle governance over their AI applications.

These integrations and capabilities are already available for teams that currently use 1Password EPM and 1Password SaaS Manager. To learn more and to see the demos in action, watch the complete webinar recording.

Want to get started with 1Password SaaS Manager? Reach out to our team.

2026-05-28, 00:00

At 1Password, our Jewish 'Bits Employee Community Group exists to create space for Jewish employees and curious allies alike to connect, learn, and show up authentically. For Jewish Heritage Month this May, we wanted to spotlight Nicole Smith, Staff Project Manager and lead of our Jewish 'Bits ECG.

In her four years at 1Password, Nicole has been someone people turn to when the work gets complicated, because she builds trust that makes honest conversations possible. That same instinct to lead with curiosity and create space for real connection shows up across everything she does, from leading complex, cross-functional work to fostering community that enriches how we all experience 1Password together.

We sat down with Nicole to talk about what drives her, the projects she's most proud of, and what she hopes her colleagues take a moment to appreciate in honor of Jewish Heritage Month this year.

As a Staff Project Manager for our CX organization, you're known for bringing clarity to complex, cross-functional work - helping teams stay aligned and move effectively so they can show up for customers. What is it about that kind of work that energizes you?

The people I get to work with every single day are what energize me! Cross-functional work involves navigating a lot of competing priorities and moving pieces, and that complexity can create a lot of noise. My job is to cut through it, not by having all the answers, but by building enough trust with the people around me that we can be honest about what's working and what isn't.

When people trust you, they tell you the truth, and when you have the truth, you can actually move forward together. When a working group aligns and shifts into driving forward together with purpose, it's powerful. When my colleagues feel supported in the work we’re doing, we show up better for each other, and that ultimately creates the best experience possible for our customers.

What's a moment or project at 1Password that you're especially proud of - something where you could really see the difference your work made for the team

Our migration from our previous Customer Relationship Management software to implementing a new tool for everyone at 1Password is a project I'm especially proud of. It involved over ten months of deeply cross-functional, complex work, and we went live in March 2026.

The thing I'm most proud of isn't the go-live itself, but how the team showed up together to get there. There were moments where things could have gone sideways, and what propelled us to the finish line together was trust. Our team was honest about known risks, they flagged problems early, they backed each other up, and that's what winning together looks like when the stakes are high. I know the work we did as a team made a difference for not only our Customer Experience folks, but the customers we interact with on a daily basis.

You also serve as a mentor in our CX mentorship program. What inspires you to invest in others' growth, and what do you hope the people you mentor feel when they're in your corner?

I've been extremely fortunate to have some incredible managers, mentors, and colleagues in my corner who saw something in me before I saw it in myself. Once you've been on the receiving end of that kind of investment, you feel empowered to pay it forward. When I'm mentoring, I try to be a good listener first, while also being honest. As a mentor, I'm going to tell you what I actually think and ask my mentee the hard questions. I’m going to show up for the moments that don't feel great or might feel like a challenge, because that makes the accomplishments and wins even better to celebrate. I want the people I mentor to leave our conversations trusting and feeling more confident in themselves because they know they have someone who is there to support them.

In addition to your work in CX, you lead our Jewish ‘Bits Employee Community Group. What does that leadership role involve, and how do you approach building a space that feels welcoming both to Jewish employees and to allies who want to learn?

At its core, our Jewish 'Bits Community Group is about connection. We create space for Jewish employees to share culture, identity, and history with one another. That sometimes looks like hosting an educational panel and conversation, a holiday gathering, or starting a Slack thread where someone shares something personal and meaningful.

We're equally committed to being a space where allies and curious colleagues feel genuinely welcome. Building that kind of trust where people can come with questions and without fear of getting it wrong is something I take seriously. Jewish identity is layered and rich and sometimes complicated, and my approach is to lead with honesty, make room for real conversations, and make it clear that everyone's curiosity is welcome here.

As a leader for the ECG, you've been involved in many meaningful moments - including the opportunity to moderate a conversation with Holocaust survivor Mariette Doduck. How has leading within this community deepened your connection to your Jewish heritage and identity?

In 2025, I moderated a conversation with Mariette Doduck, a Holocaust survivor who has spent decades sharing her testimony, and it was one of the most profound experiences of my professional life. Hearing her story and sitting in that very real, challenging conversation gave me a deep appreciation for the Jewish people who have come before me, and that stays with me. Leading Jewish 'Bits has made me more intentional about what I carry forward as a Jewish person, and about what it means to hold space for others to do the same. My connection to my heritage has deepened in ways I didn't expect, and there's something about being responsible for that space that shifts how you carry your own identity. Jewish Heritage Month honors history, culture, and the richness of Jewish identity. What does this month mean to you personally, and what would you want your colleagues at 1Password to take a moment to appreciate or learn about?

Jewish Heritage Month is a dedicated time to celebrate history, culture, and the richness of Jewish identity. Jewish heritage is at times complex and deeply personal to those who carry it, and this month is a reminder to appreciate the depth of what it holds.

My invitation to colleagues is to get curious, to ask a question, start a conversation, and lean into what they don't yet know. Jewish identity is not a monolith, it's a tapestry of cultures, histories, languages, and lived experiences, and there is so much to discover. Engage with the community and conversations that Jewish 'Bits created this month, and in the conversations they'll create throughout the rest of the year, and you might be surprised by what resonates, and by what you didn't already know.

– Through her leadership, Nicole demonstrates that fostering genuine trust and leading with honesty are the keys to accomplishing impactful work - from aligning complex working groups and empowering mentees, to cultivating a truly authentic community space.

As we celebrate Jewish Heritage Month, we encourage you to embrace Nicole’s call to action: lead with curiosity, ask questions, and lean into the unfamiliar. Jewish heritage remains a rich collection of diverse cultures and shared histories, and there is no better moment than now to explore it.

Want to learn more about life at 1Password and our people and culture? Explore our careers page. https://1password.com/careers

2026-05-20, 00:00

There’s a question we get asked constantly, and it’s the right one to ask: “Can 1Password see the contents of my vault?”

The answer is no, and it’s because of how we built the product, not just a promise we’re making. That’s an important distinction, because “we promise” has never been an acceptable answer in this industry. After all, promises get broken, and companies get compromised, acquired, and are under constant attack from threat actors.

1Password’s commitment to our security principles is genuine, but what matters more is how we’ve built that commitment into our product and architecture, and the transparency we back it up with with our security white paper.

So here’s the precise answer: The way 1Password is built means that we are incapable, on a technical level, of decrypting and reading your vault contents. We're not policy-prevented or contractually restricted; we are technically incapable. This post explains what that means, why we built it this way, and what the real tradeoffs are.

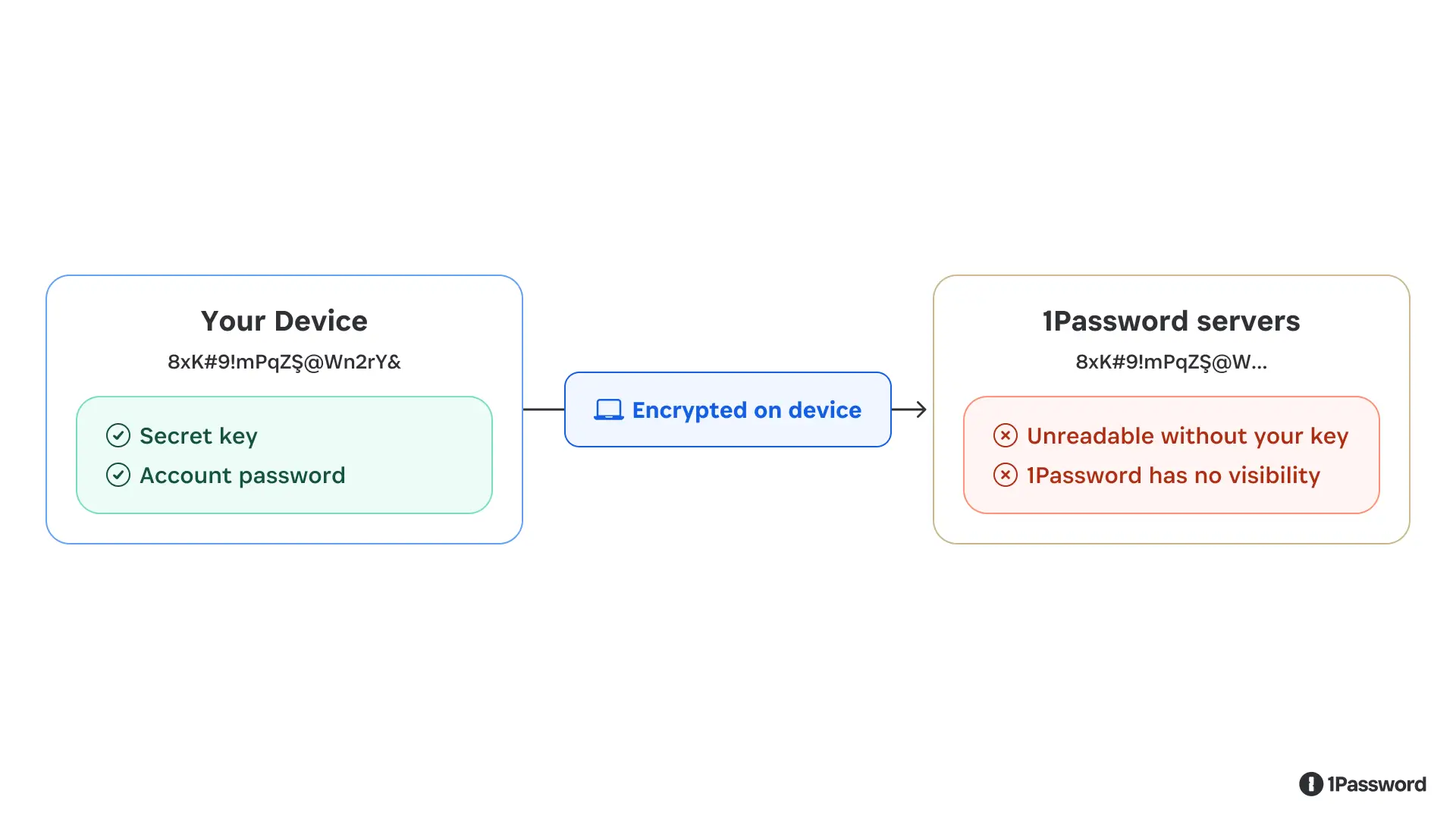

Your data is encrypted before it ever leaves your device

When you save a password, a credit card number, or a note in 1Password, the first thing that happens is encryption, and it happens on your device, before any data moves anywhere.

Encryption here doesn’t mean we “hide” or “scramble” your data and promise not to look. It means your plaintext vault item is transformed into ciphertext using cryptographic keys that are only available on your devices. Without these keys, 1Password is unable to decrypt and read your data.

The two keys in question are your 128 bit Secret Key (a 34-character value separated by dashes) and your account password. Together, these produce the cryptographic key that locks and unlocks your vault.

Here’s the critical part: neither your Secret Key nor your account password is ever transmitted to 1Password or stored on our servers. We never possess the keys needed to decrypt your vaults. When you set up your 1Password account on a new device, you’re not “downloading” your key from us, you’re entering it yourself (either manually or using a QR code), and your device uses it locally to decrypt the vault data it receives.

What we store on our servers is the encrypted version of your vault contents: ciphertext that is, for all practical purposes, indistinguishable from random data without the key to decrypt it. If our servers were compromised tomorrow and an attacker exfiltrated every byte of stored data, they’d only have encrypted blobs they cannot read.

Your vault content are encrypted on your device. What reaches our servers is unreadable ciphertext.

Even the fastest supercomputer would take (literally) billions of billions of years to try and guess a 128-bit encryption key. That’s what we mean when we say 1Password’s security isn’t built on promises; it’s built on math.

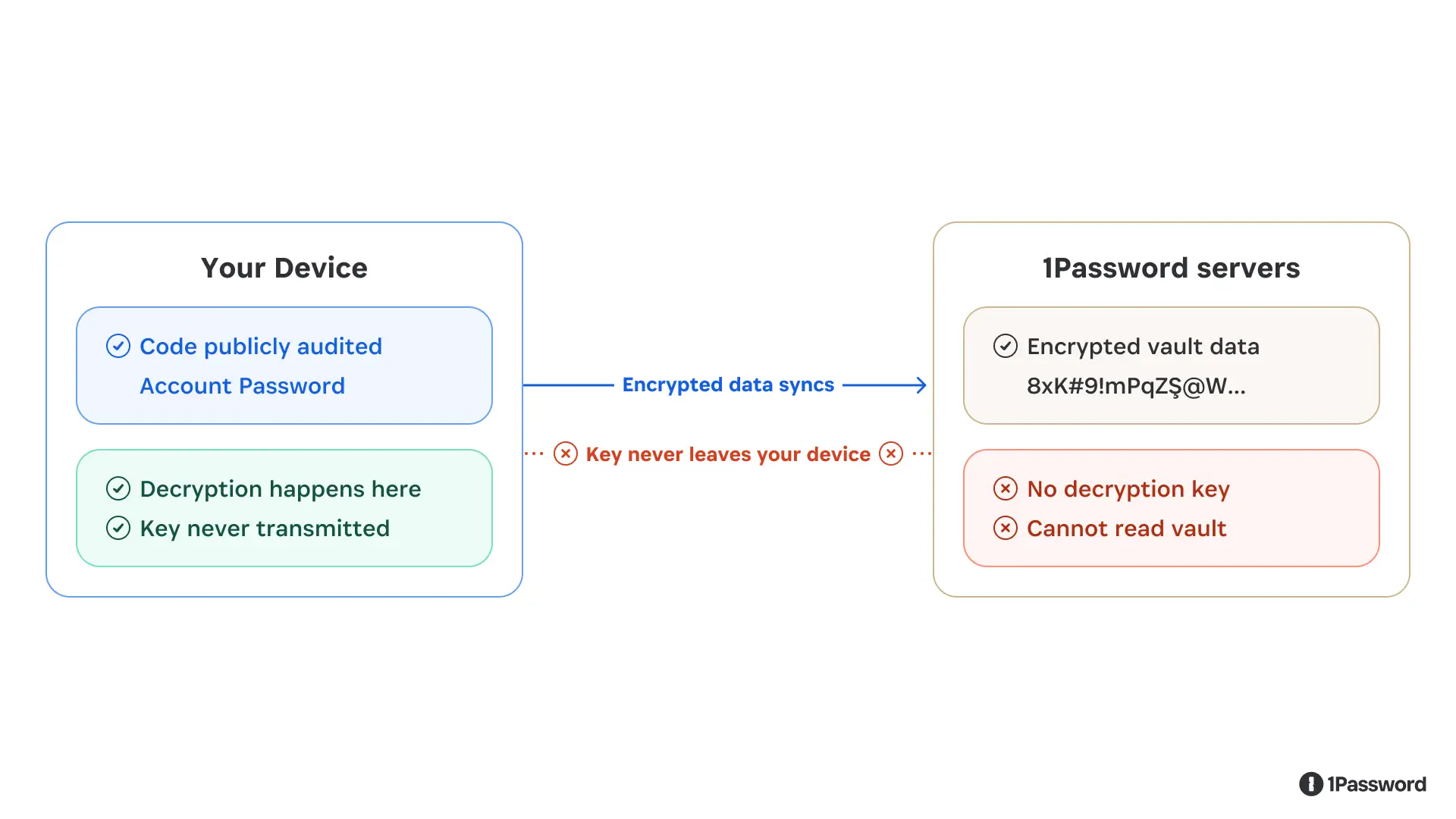

What “zero-knowledge” actually means and what it costs

The design pattern described above is called zero-knowledge architecture. It means the service provider, in this case, 1Password, has zero knowledge of the plaintext contents of what it’s storing.

Zero-knowledge is a meaningful claim because it is an immutable fact of our architecture. But zero-knowledge is a security guarantee with real product implications and intentional constraints.

The most significant tradeoff is account recovery. If you forget your account password or lose your Secret Key, we cannot return them to you, because we don’t have them. (If you forget your password, you can regain access to your account by generating a recovery code, but this still requires you to have access to the email account you used to create the account.)

The same constraint shapes what features we can build. Any capability that would require 1Password to see your plaintext data is, by design, off the table. We can’t offer server-side search across your vault contents. When we scan your saved passwords and tell you which ones have appeared in a breach, we do that computation on your device and only a partial hash of your password is checked against breach databases, so we learn nothing about the actual credential. Some things that would be convenient to build are simply incompatible with the architecture, and we think that’s the correct tradeoff.

The decryption key lives on your device. Encrypted data syncs to our servers, but the key never does.

Zero-knowledge also means we can’t be compelled to hand over vault contents we don’t have. A court order can require us to produce data, but it can’t require us to produce a decryption key that doesn’t exist on our systems. (You can read our full policy on legal requests here.)

When computation has to happen in the cloud

The zero-knowledge constraint of only processing unencrypted data on a user's device works well for storing and syncing data. But some features, particularly enterprise capabilities like company-wide security reporting, require server-side computation. So our question in building those features has been: how can we do this without undermining our architecture and creating a new exposure point?

This creates a real problem. If you need to process data in the cloud, and that data needs to be in a usable form during processing, how do you prevent the cloud infrastructure from being a point of exposure? The standard answer in most other software is to trust the server, use access controls, audit the logs, and hope the infrastructure isn’t compromised.

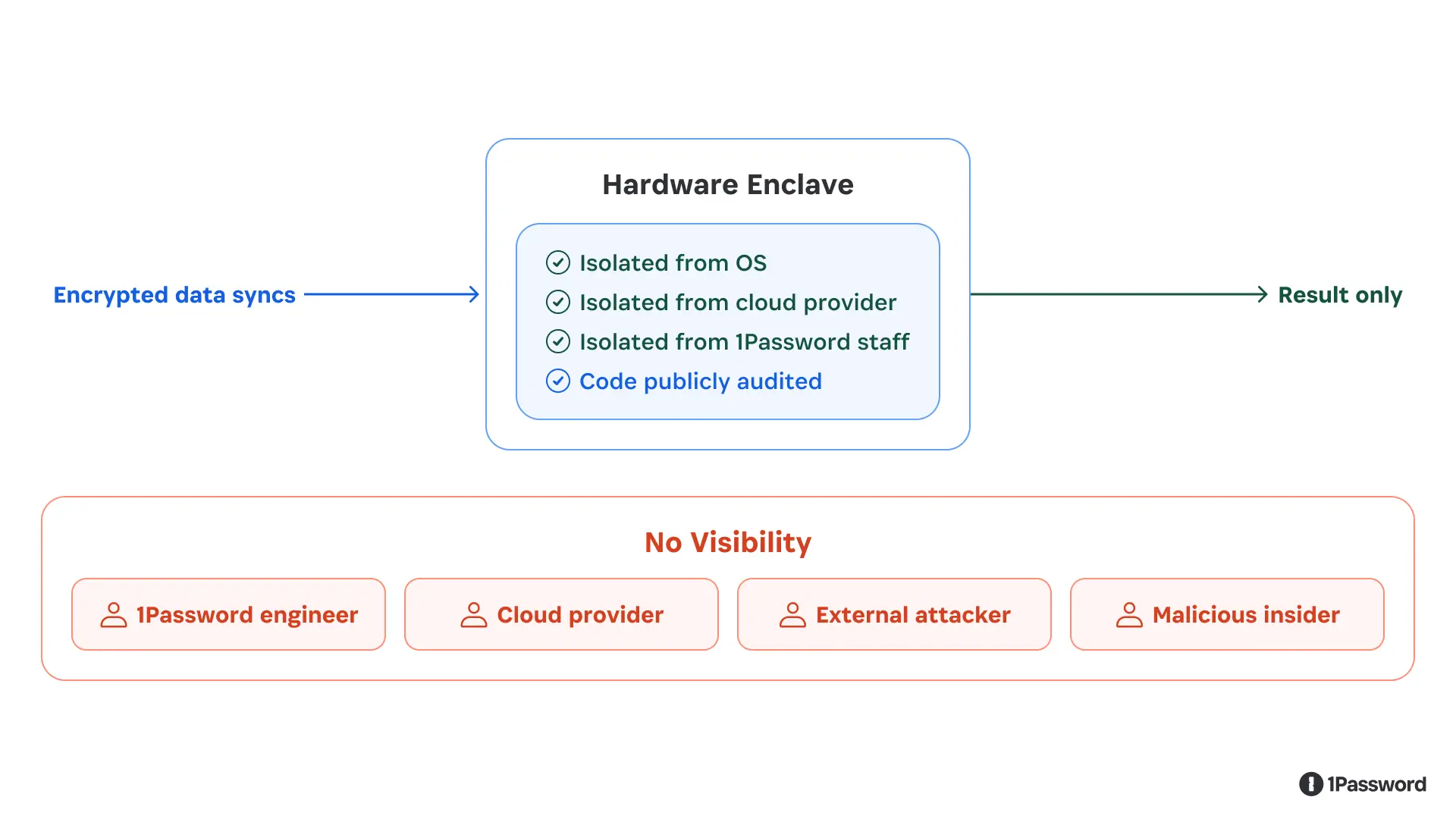

We weren’t satisfied with that. So we built cloud processing on top of a technology called confidential computing.

The core idea: instead of processing data on a regular server, we run computation inside a hardware-enforced enclave. Think of it as a sealed processing room: data goes in, results come out, and the room is impenetrable at the hardware level.

The enclave combines hardware-backed isolation, verified code execution, and cryptographic attestation – protocols designed to minimize what services can learn. Not even the cloud provider running the hardware can observe what’s happening.

The enclave is a hardware-enforced sealed room. Data is processed inside; nobody — including 1Password — can reach in.

We also publish the code that runs inside these enclaves, and we use cryptographic attestation so that you can independently verify it’s running the code we published and not some modified version. An independent security firm audited the implementation and found no critical vulnerabilities. The full report is publicly available, the code is available, and the verification mechanism is built into the protocol.

Why this design matters more now

Password managers contain sensitive and valuable secrets for individuals, families, and companies alike, so they are often subjected to attacks by bad actors. That has been true for years, and it’s only becoming more true as technology evolves.

Password managers are increasingly the credential layer for a broader set of tools: browsers, developer environments, workplace automation, and now AI-powered agents that can take actions on your behalf. We’ve written about how we approach agent identity and the trust decisions that come with it. As those connections multiply, the question becomes: how do we allow the right tools to access the right data at the right time, without expanding trust more than necessary?

The right answer is to stick to proven security principles: zero trust, zero-knowledge, and cryptographic designs published and reviewed by our customers and the community.

Want to learn more?

If you’re a customer, this is how your data is protected. If you’re evaluating password managers, these are the questions worth asking: Where does encryption happen? Who holds the keys? What can the service provider see, and what can they be compelled to produce?

If you want to go deeper, our cryptography white paper walks through the technical implementation in full detail. Our confidential computing blog post covers the enclave architecture specifically.

Even as 1Password and the digital world evolve, we will continue to insist that security should be verifiable, not just claimed. Everything we build maintains that standard.

2026-05-20, 00:00

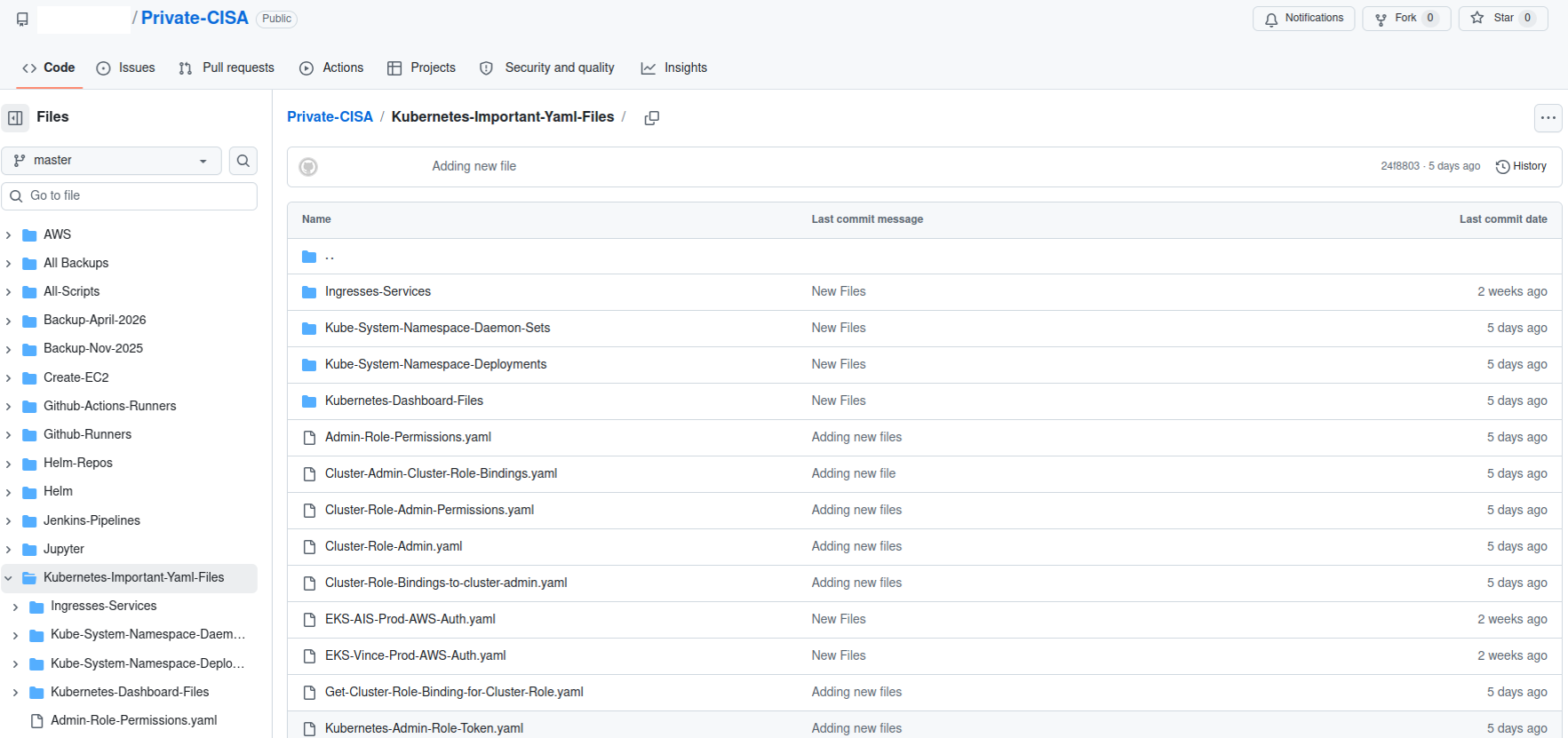

Coding agents like Codex are helping developers write, execute, and prepare code for production. Every action that AI coding agents take against a database, an API, or a deployment pipeline requires access to credentials. Today, these credentials typically live in .env files, scripts, or hardcoded in repositories, where they can be easily exfiltrated and are difficult to govern and audit. The shift from AI assistance to AI execution has outpaced how teams manage the secrets needed for execution.

1Password and OpenAI are working together to close this gap. The 1Password Environments MCP Server for Codex makes 1Password the trusted access layer for Codex: credentials are issued just-in-time and scoped to the task, while keeping them outside the model’s context window. Developers get the access they need to build and ship, while secrets stay where they belong. The same integration helps catch secrets at the source. Codex can be prompted to use 1Password and the 1Password MCP to store and use credentials that it needs.

Why secrets should stay out of prompts, code, and model context

Every credential placed inside an agent's context is a credential at risk of easily being exfiltrated. It can be logged, cached, reused across sessions, or surfaced in unexpected outputs. A secure architecture treats a coding agent as a tenant, not a vault: it gets secure access to do its job, but never custody of the secret itself. 1Password Environments is built on that principle. Instead of sharing .env files or hardcoding credential values, teams work from a shared environment where secrets are made available at runtime to the application, without the values ever appearing in code, terminals, or model context.

This secure access model is built on the same vault technology and security architecture used across 1Password. Secrets remain end-to-end encrypted and centrally managed, with access limited to authorized users and groups, and through custom permissions.

This architecture matters more as coding agents take on a bigger share of the development workflow. Any agent that executes code needs credentials, and any credential copied into local files or prompts, or hardcoded into repositories is a credential at risk. 1Password Environments gives teams a way to support these workflows without trading security for developer velocity.

Connecting 1Password Environments to Codex

The integration uses a local MCP server – packaged inside our Password Manager and developer tools – to connect Codex and 1Password Environments, and is available to both 1Password business and personal accounts. MCP connects models to tools and context, specifically with 1Password’s MCP Server for Codex, developers can grant Codex access to credentials directly inside their coding workflows while keeping secrets outside of code. That last part is key: the MCP server here is designed so that Codex can act on secrets without ever seeing them.

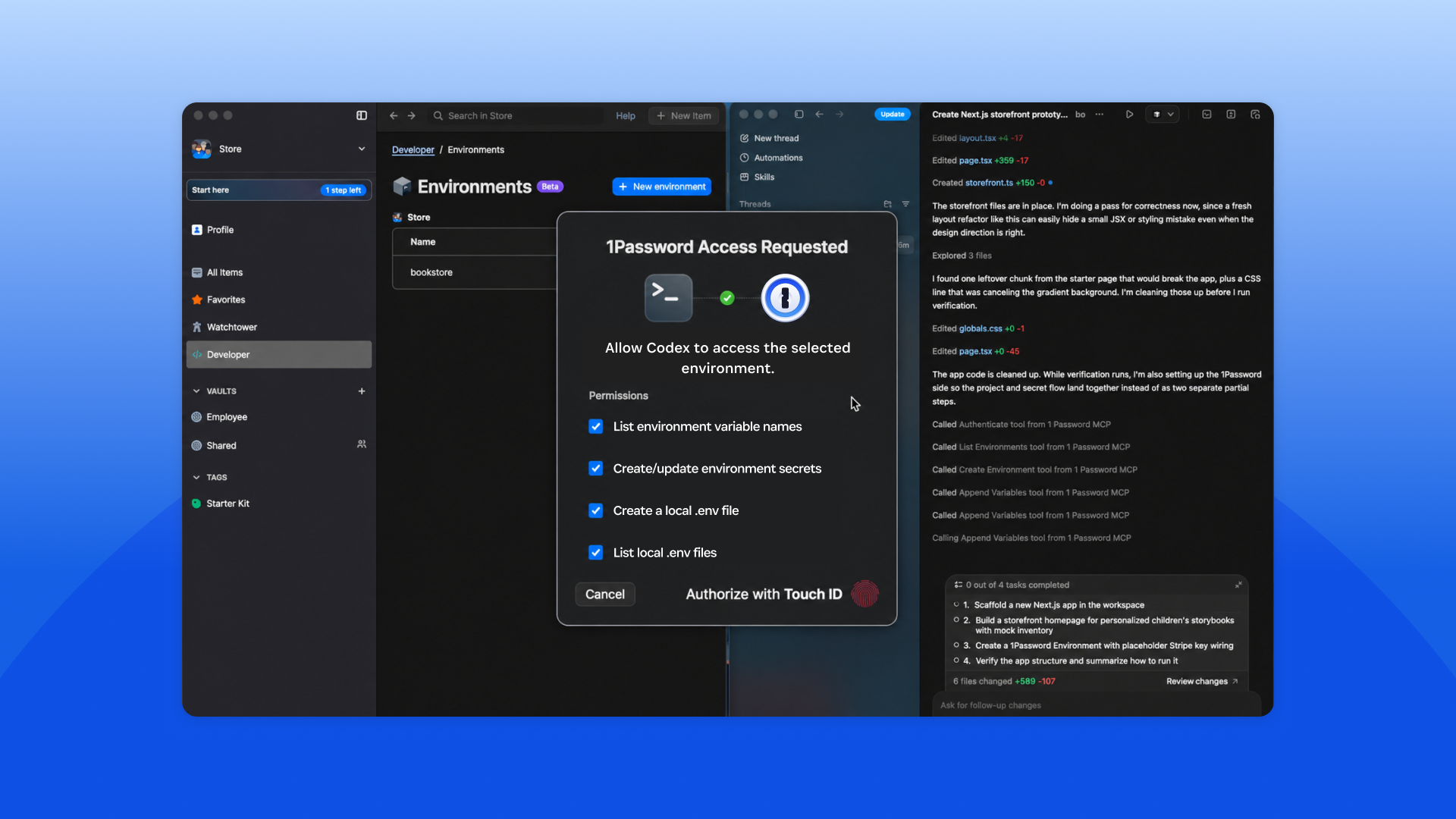

Here's what happens when a developer or builder asks Codex to configure an environment:

Start a task in Codex: For example, ask Codex to create an app and configure the environment it needs.

Codex connects to the 1Password MCP server: This happens over a local MCP server connection, where Codex can discover and invoke available actions from instructions the MCP is providing.

Requests are validated through 1Password: The MCP server communicates with the 1Password desktop app, which handles identity, authorization, and secure access.

A user always needs to approve access: Every interaction requires explicit 1Password user auth prompt approval before Codex can proceed.

Codex creates and manages an environment: It can create environments, list and manage variable names, and prepare configuration without accessing raw secrets.

Secrets are used at runtime: Applications run using secrets from 1Password, without copying credentials into prompts, local files, or repositories.

It’s important to note the architectural guarantee: secrets never leave 1Password and are always secure. The MCP server does not read or return secret values through the MCP channel, surface secrets in the model’s context window, or write them to disk. Codex can create environments, list variable names, and invoke applications that use those secrets, but the values themselves never leave 1Password.

Here’s what actually happens at runtime: 1Password injects the required variables directly into the application process when it runs. The values exist in memory only for the authorized process, and only for as long as the process needs them. Codex orchestrates, the application executes, and 1Password issues the credentials.

This integration reflects 1Password’s approach to MCP and agentic workflows. Secrets are securely injected at runtime for an authorized process and users must explicitly authorize access for the scoped task. MCP works best when access is scoped, user-approved, and keeps credentials out of the agent context.

What builders can do with Codex and 1Password Environments

If you’re a developer or builder, this integration is designed to fit into how you already work, while reducing the need to handle secrets directly or copy them into prompts, local files, or repositories. With this integration, developers can:

Bootstrap new projects with 1Password-managed environments so you don't have to create or share .env files.

Allow Codex to create and manage environments so your code runs with the right configuration, while underlying secrets stay in 1Password.

Stay in control of every access since each Codex interaction with 1Password requires explicit user approval.

Use Codex to scan repositories for secrets in plain text, then move these secrets into 1Password for secure storage, and replace them with references in code.

Use Codex to extend environments across stages. Use your local environment as a baseline to help bootstrap staging and production environments.

What this unlocks for engineering and security teams

This integration reduces the overhead of managing secrets in AI-driven workflows, while giving teams more control over how those workflows are adopted.

With this integration, teams can:

Eliminate manual secret cleanup and the context switching it requires.

Move existing secrets into secure storage as part of the normal coding workflow, not as a separate hygiene task.

Support Codex adoption while keeping credentials outside the model’s context window.

Give developers a fast path to AI-assisted workflows while security teams retain oversight of how secrets are accessed.

Centralize secrets in 1Password instead of letting them scatter across repositories, files, and local environments.

Get started with 1Password Environments and Codex

We're launching the 1Password Environments MCP Server with Codex as a proof point for a broader thesis about the future of agent access.

Coding agents are the leading edge of a larger shift: AI agents joining the workforce and needing real access to real systems. Every one of them will need credentials, but none of them should have custody of those credentials. 1Password is building the access architecture for a future where every agent: coding, operational, and customer-facing gets access through the same trusted layer. Codex is where that future starts.

How to turn it on

This new feature is available to all joint 1Password and OpenAI customers with access to our Password Managers and 1Password developer tools.

To get started, visit the 1Password Marketplace listing for step-by-step documentation on connecting Codex to 1Password using the local MCP server.

2026-05-19, 00:00

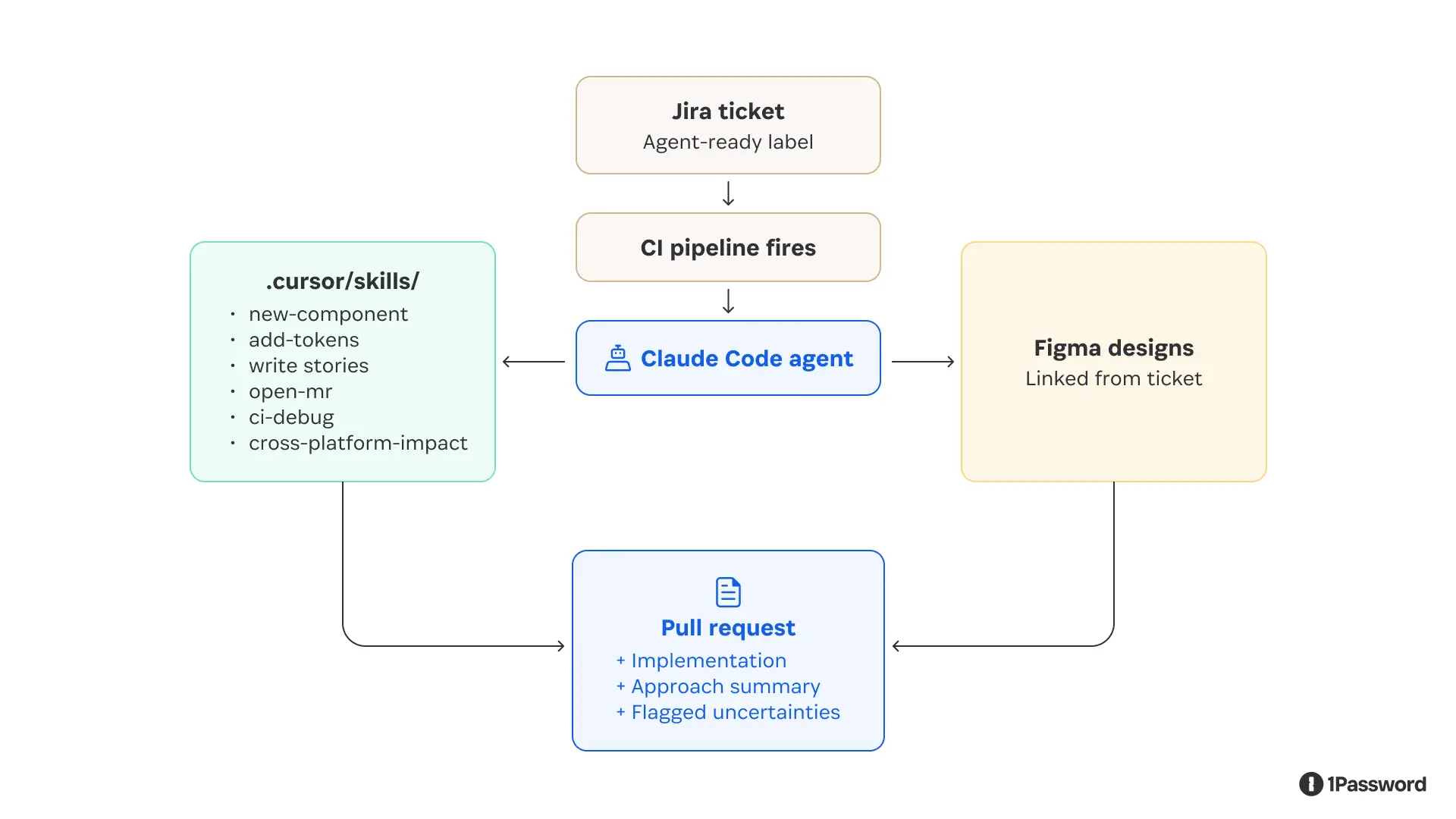

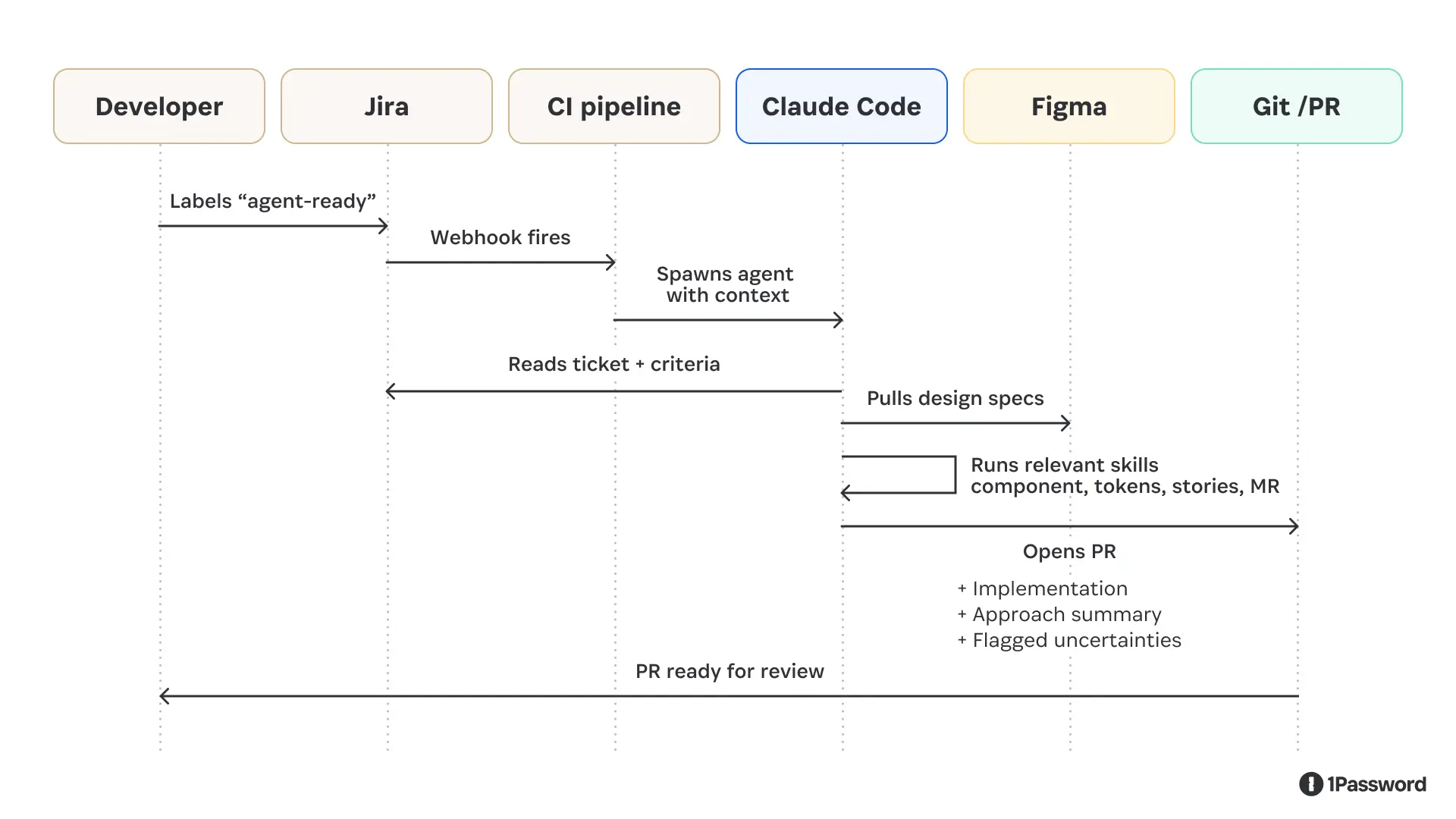

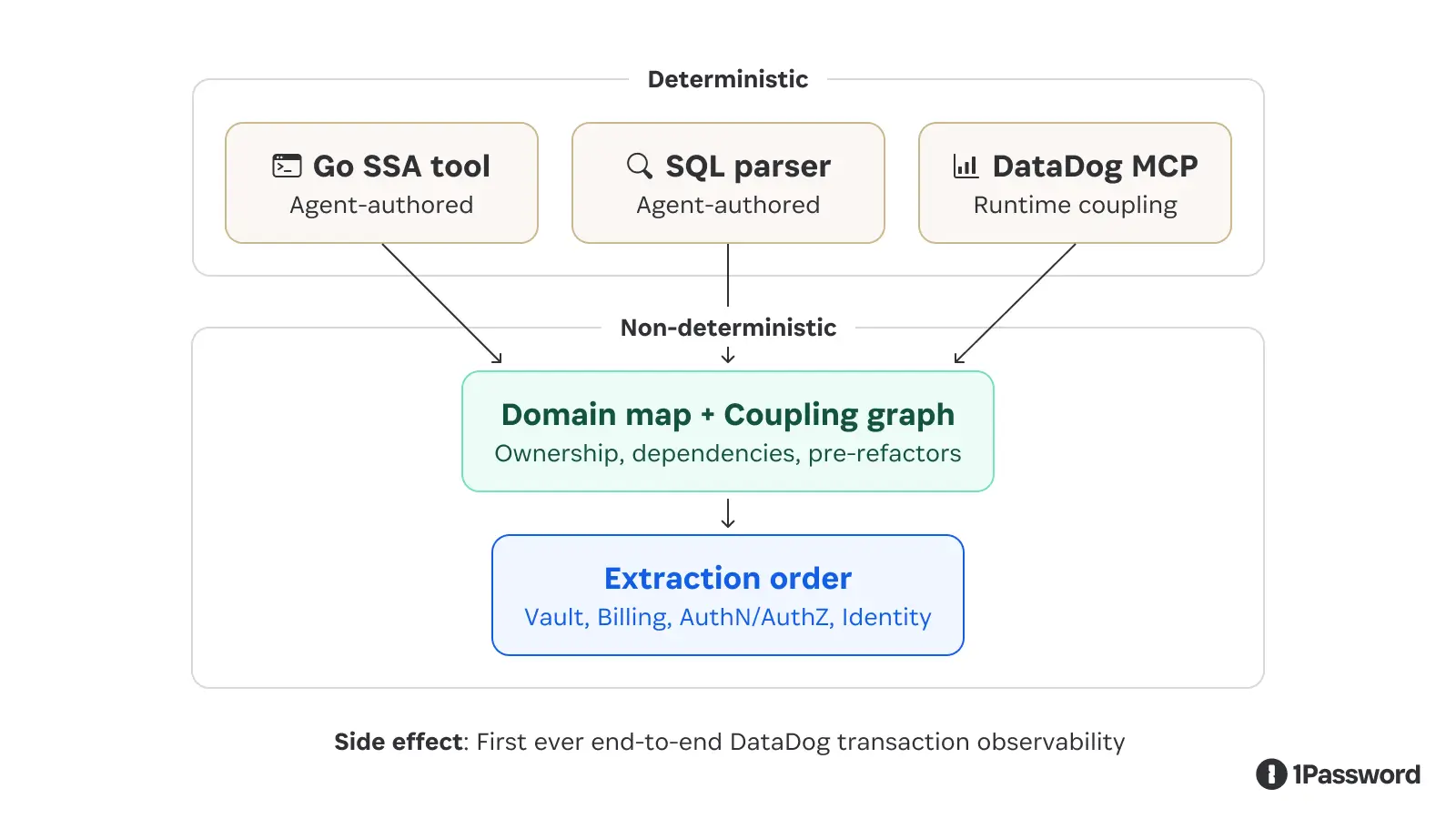

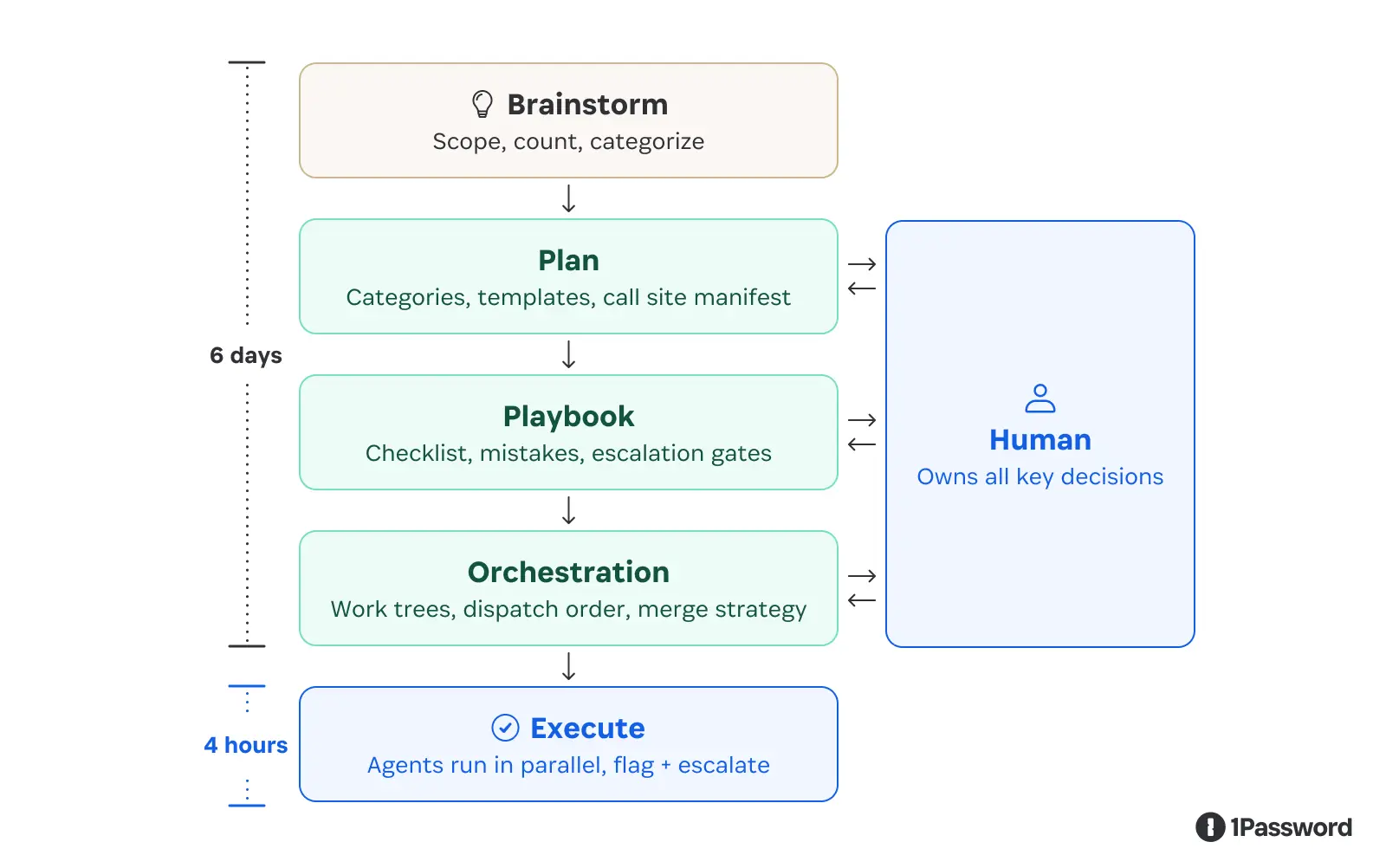

Design system work follows a well-defined loop: read the ticket, check the Figma spec, find the right component primitives, apply the right tokens, write the Storybook stories, run the tests, open the PR. The steps are consistent enough that when we looked at our design system backlog, we didn't just see a list of tasks; we saw a set of instructions waiting to be executed.

So we set an agent loose on the loop. At first, it was a semi-hot mess. But then we gave it the right context, and boom, it has completely changed how we improve our Design System.

Here’s our approach on what we did and what we learned.

Why we started with our design system

Every team considering agentic coding faces the same question of where to begin. The tempting answer is your largest codebase or your most complex feature. The right answer is wherever the work is most well-specified, and the feedback loop is fastest.

Our React component library, the web layer of our design system, happened to be both. Conventions are strict by design: that's the whole point of having a design system. The output shape is predictable and well-documented: a component, some design tokens, a story, and a test. The blast radius of any change is traceable. And if a token is wrong, the tests catch it automatically, without a human having to notice.

That combination of explicit conventions, predictable outputs, and automatic validation describes exactly the kind of bounded context where agents do well. When we looked at where to prove the pattern before adapting it to larger, messier codebases, the design system was an obvious answer.

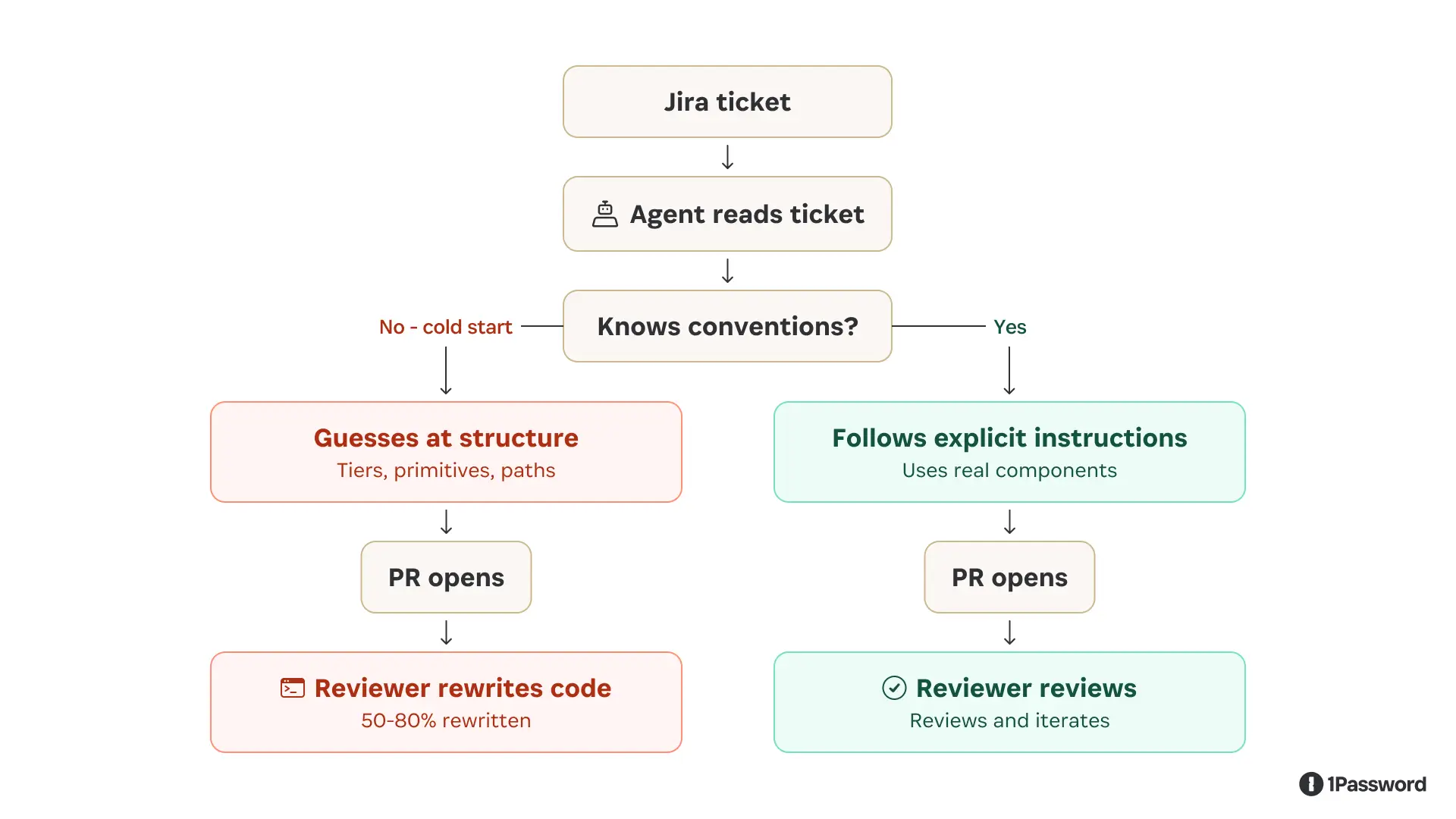

What happened when we pointed a general-purpose agent at our design system

The first attempt was to take a well-scoped ticket, hand it to a capable coding agent, and see what comes out.

The results were instructive, and not in the way we hoped.

The agent could read the ticket and navigate the codebase. But without design system-specific context, it filled knowledge gaps with confident-sounding guesses.

It placed tokens at the wrong tier in the hierarchy. Reached for raw HTML elements instead of the correct component primitives. The agent often chose components that looked right in isolation but were semantically wrong for the system, the kind of inconsistency a developer would catch immediately because it breaks patterns that only make sense in the context of the product as a whole.

It opened PRs that didn't follow the team's merge template; the code was often compiled, and tests even passed, but the output wasn't idiomatic. It was close enough to look right yet different enough that a reviewer had to do substantial correction work before anything could merge.

We hadn't saved developer time by making it easier to open a PR; instead, we'd moved the work downstream.

Without institutional knowledge, the agent’s work was insufficient. It knew how to write React, but it didn't know how our design system writes React: the specific directory structure, the token tier model, the CI conventions, and the component primitives we use instead of raw elements. That knowledge lives in the heads of everyone who works on the system, not in any file the agent could easily read.

The fix: making tacit knowledge explicit with agent skills

The solution was to stop expecting the agent to infer what experienced contributors know implicitly and start encoding that knowledge as explicit, executable instructions.

We wrote a set of skills covering the core design system contributor workflows that included

Scaffolding a new component

Defining tokens

Writing Storybook stories

Adding icons across platforms

Opening a merge request

Debugging a CI failure

Tracing cross-platform impact from a token change

Each skill provides the agent with exact file paths, naming conventions, import patterns, and build commands to make them executable by our agent.

We also exposed Knox through MCP for consumer-facing workflows where agents don’t necessarily have the Knox repo available but still need authoritative guidance on components, design tokens, and interaction patterns. This gave agents a way to ask the design system what exists, how to use it, and which patterns are appropriate without relying on guesswork or outdated copied context.

We folded in our existing builder-facing documentation, including real examples from the product, so the agent could anchor its decisions in consistency. Instead of the agent inferring what's in the system by reading source files, it can ask our design system directly. Our MCP server also added documentation on the user’s intent and the problem a specific component would solve. It enabled the agent to not only make it visually correct but also function as the user would expect in the product UI.

Right away, the agent’s output improved. It stopped guessing conventions because the repeated contributor workflows were now explicit. It had focused skills, clear commands, and a human-qualified ticket to work from.

How to do this for your own design system

This approach generalizes the specific tooling we used, a custom MCP server, CI-triggered runs, and skills committed to the repo can be adapted to any design system with enough test coverage and explicit conventions.

1. Pick the right starting scope

Don't start with your most common ticket type; start with the one you specify most often.

Good candidates:

Adding a component variant

Defining a new token tier

Updating an icon pipeline

Poor candidates:

Broad refactors

Anything that touches cross-team contracts

Work that requires design judgment

Tickets that the system doesn’t capture

A safe guide is that if a new contributor couldn't implement the ticket from the description alone, the agent can't either. The agent's output ceiling is the quality of its input.

2. Write skills, not documentation

Most design systems have documentation that defines what things are, but few have executable instructions written as skills, which tell an agent what to do, in what order, with exact commands.

Write a skill for each atomic workflow your contributors repeat. Keep them narrow; a skill that does one thing well is easier to maintain and easier for the agent to execute correctly than one that covers every case. Commit them to the repo alongside the code they describe, and when a convention changes, update the skill.

3. Match the context layer to the workflow

Agents working inside a well-structured repo can often read source files effectively when they have narrow skills that tell them where to look, what conventions to follow, and which commands to run. For the Jira-to-PR pipeline, the foundation was repo access, explicit skills, and CI review.

Not every agent workflow starts with a full design system repo available. Consumer-facing agents, prototyping tools, and downstream product workflows may still need authoritative guidance.

If your tooling supports MCP, a lightweight MCP server wrapping your component API, token registry, or Figma library data is the right answer. The agent queries it at runtime instead of guessing.

If a full MCP server is out of scope, a well-maintained DESIGN_SYSTEM.md context file that the agent loads at session start accomplishes most of the same goal at lower fidelity and is still significantly better than nothing.

4. Wire the trigger to a human qualification step

The best trigger we found was a ticket label.

A developer reviews the ticket, decides it's well-scoped, applies a label, and the pipeline fires. This keeps a human in the qualification loop while automating everything downstream.

5. Make the agent surface uncertainty

The PR description should explicitly name the decisions the agent wasn't confident about. A reviewer who knows exactly where to look can validate a draft in minutes, but a reviewer hunting for hidden assumptions will spend hours.

We asked the agent to flag uncertainties. For example, a PR that says "I wasn't sure whether this token belongs at the alias or component tier; I chose alias, but please verify" is far more useful than one that looks confident and buries the guess.

6. Measure PR quality before speed

Resist the temptation to lead with velocity metrics. The number that tells you whether the system is actually working is pull request quality.

Start with what percentage of agent PRs need only review and minor tweaks versus a substantial rewrite. A high rewrite rate means you've shifted work downstream, not eliminated it.

Component accuracy is a useful proxy. Does the agent reach for your actual design system primitives, or does it fall back to raw elements when it doesn't know what to use? If it's reaching for raw elements, your MCP context layer isn't working.

What the ticket-to-PR pipeline produces

In our workflow, a developer labels a ticket as ready. Then a few minutes later, a PR opens with idiomatic code, an approach summary, and explicit notes on where the agent was uncertain.

With this context, the reviewer's job becomes iteration, not inception. They're looking at a working draft with known uncertainties called out up front, not a blank editor.

The quality gap between "agent with skills and real design system context" versus "agent reading files cold" is large enough that it felt more like crossing a threshold than an incremental improvement.

Below the threshold, agents generate code that appears plausible but requires significant correction. Above it, they generate drafts that a reviewer can actually build on.

An unexpected outcome: design-led prototyping

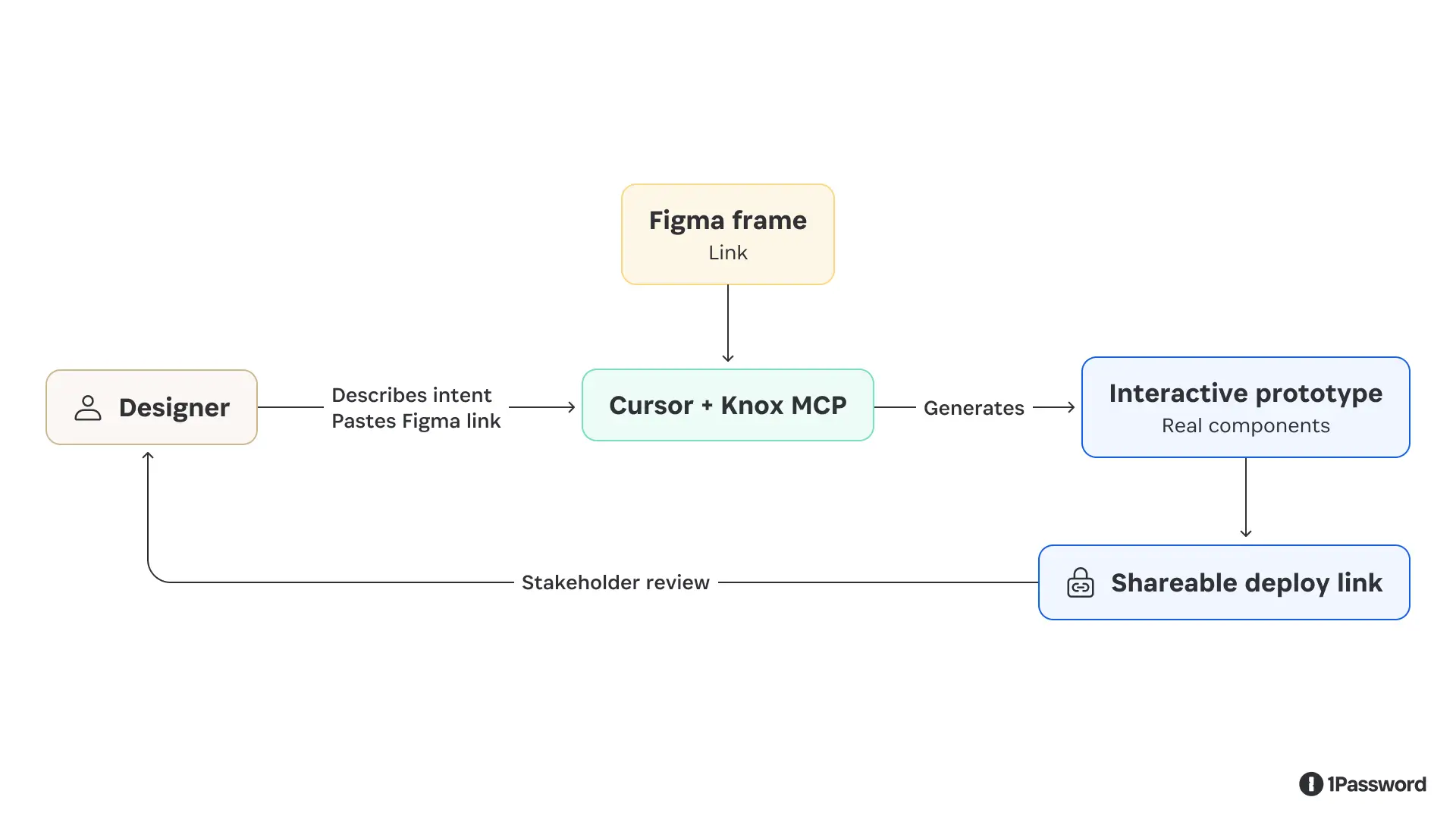

While building the ticket-to-PR pipeline, another question came up: could we give designers the same setup our engineers use for rapid prototyping?

Using the MCP-backed Knox context, we built a prototype playground with prebuilt product templates, an agent to query components, and a simple slash command to scaffold a new prototype from scratch, integrating guidance directly into the user workflow.

A designer describes what they want to build or links to a Figma frame, and the agent generates a working interactive prototype using real design system components ready for iteration and feedback. They share it with a deploy link.

This changed a workflow that previously required developer time into something a designer could run on their own.

A stakeholder review that used to mean a static mockup or a time-consuming Figma clickthrough could now be a clickable prototype built with the actual component library, matching the product's fidelity and interactions.

A few things we learned here that we didn't expect:

Smaller tasks produce better results than large ones ("build the sidebar" before "build the entire dashboard")

Naming components specifically ("use the secondary neutral button") beats describing the desired appearance

Detailed Figma component annotations (size, padding, intended behavior, and states) translate directly into better agent output, because the agent reads that documentation the same way a developer would

What we'd do differently

Ticket quality is not automatable. The agent is a strong implementer of well-specified work and a poor interpreter of ambiguous requirements. The qualification step (a human deciding whether a ticket is genuinely ready) is the most important step in the pipeline, and it can't be delegated to the agent.

Start with the narrowest possible scope. Our early instinct was to write a single "implement a design system ticket" skill. What actually worked was breaking it into eight focused skills that the agent could compose as needed. Narrow skills are easier to maintain, easier to debug when something goes wrong, and easier for the agent to execute correctly.

Treat agent credentials the way you'd treat any machine credential. The design system MCP disconnects after a fixed window, making an agent credential that persists indefinitely a liability. Issuing short-lived, scoped access for agent workflows isn't a UX inconvenience. It's baseline security practice, and it's consistent with how you'd handle any other automated system that has access to your codebase.

Vercel’s design system tooling powers some of the most widely used component libraries in production. Andrew Qu has been tracking how teams are starting to embed agents directly into that layer:

"The gap between design and production has always lived in the component library, where intent either survives or gets lost in translation. With Generative UI, the component library stops being the end of the handoff and starts being the substrate the model renders from. When the model is grounded in what your components are and how they behave, it stops generating one-off UI and starts generating things that belong in your product.”

–Andrew Qu, Chief of Software, Vercel

Design system work will always require human judgment on the questions that influence your product. What's changed is the ratio of that judgment work to the implementation work that follows it.

Agents are increasingly handling the latter. The point is to free the people who understand the system to focus on the work that actually requires human judgment.

Subscribe to the 1Password Developer newsletter

Stay up to date with the latest 1Password Developer product news, industry insights, and community contributions. Plus, learn best practices for becoming a better, more secure developer – both at work and at home.

Subscribe2026-05-19, 00:00

Authentication is built on the assumption that identity can be verified once and trusted for a specified period. Over time, the security industry has gotten very good at validating that trust through a chain of identity providers, certificates, and infrastructure that confirm that a user is who it claims to be at login. Authentication assumes that identity and intent will stay relatively stable and predictable because it was designed for people whose behavior is largely stable and predictable.

Agents break that assumption entirely. They act non-deterministically, starting with one task and expanding their scope as they work, accessing new files and APIs, making their identities difficult to track. When an agent acts autonomously on a person's behalf, the question is no longer whether it can log in; it's how it uses access after it does.

To establish a control plane for agents, Nancy asks, “If you’re a CTO and you’ve been told to deploy internal agents into production, what are the no-excuses minimum controls for identity, authorization, secrets handling, and audit?

Fotis Chantzis, Agent Security Lead at OpenAI, joined Zero-Shot Learning, 1Password’s AI builder podcast, to talk through why the protocols built for human identity don’t hold up under those conditions, and what teams can do to secure agents in production.

Static authorization fails when agents get new ideas

Continuous authorization is the practice of evaluating and enforcing access permissions at each step of an agent's workflow, rather than granting access once at the start of a session.

OAuth and OIDC assume relatively stable scopes and front-loaded authorization decisions. A user signs in, approves access once, and the system moves forward with that grant.

But agents make decisions and take actions beyond the original intent of the person who authorized them.

As Fotis says, "There is no concept of continuous authorization that agents require because an agent starts with one task and then decides that it needs to do something else."

For example, a coding agent might start by accessing local files, then decide mid-task that it needs to browse the web for API documentation. At that point, it writes a new task and downloads the documentation file. Nothing was re-evaluated to determine whether that change should be allowed. An agent can take dozens of these actions in seconds, adding new tools and risk with each move.

A functional identity model for agents must continuously evaluate access as the workflow evolves. Otherwise, teams face the familiar tradeoff of blocking too much and slowing work, or approving too much and holding their breath.

At 1Password, we see the value in continuous, workflow-aware authorization, where access is brokered at runtime, scoped to each action, and enforced at each step through a control layer that mediates how credentials are used.

Nancy framed this as a question of how authority moves between users, agents, and tools: “This brings us to the concept of delegation chains and how we should think about them, scope, duration, thresholds, and the systems those agents are allowed to access.”

Attribution has to survive across tools, runtimes, and sessions

Attribution is the ability to trace every action an agent takes back to the human who initiated it and the authority under which it ran, across every system the agent touches.

Nancy framed the operational challenge directly asking, when an enterprise needs to investigate an incident or audit access, how does it determine which agent actually accessed a system or dataset, and under whose authority?

For agents, attribution breaks as work moves between systems because each step is recorded separately, severing the connection to the original user or task.

Without attribution, we lose governance.”

–Fotis Chantzis, Agent Security Lead, OpenAI

In an incident response scenario, teams work backward from logs to reconstruct what happened. With agents, that quickly becomes difficult. The agent may start in one environment, then call multiple systems, each logging events separately and without shared context.

In one system, the action might appear under a user identity. In another, it shows up as a service account. In a third, it’s tied to an API token. Each step appears valid on its own, but the connection between them isn’t preserved.

Investigators can see the individual steps, but not the full chain of actions or who was responsible for them.

Nancy connected this to a growing need for execution traces that can compare an agent’s intended plan with what it actually did, step by step, across prompts, tool calls, and outputs. For auditing, this proves that the agent operated within the bounds of what it was supposed to do.

A stronger approach preserves attribution at each step, so every action can be traced back to its initiator and the authority under which it was performed.

That shift from reconstructing activity to proving it changes what’s possible in audit and in policy enforcement.

Authority has to be mediated

Mediated credential use means routing an agent's access through a controlled layer (a proxy, gateway, or injection layer) that binds credentials to specific destinations, rather than passing the underlying secret to the agent directly.

The most immediate risk from continuous agent action is how the systems handle credentials.

It's essentially game over if a credential ends up in the context window of the agent."

–Fotis Chantzis, Agent Security Lead, OpenAI

Once a secret is exposed to the model, it introduces the risk of credential exposure, whether through a prompt-injection attack or other, less malicious means. Handing an agent a credential isn't effective delegation.

The alternative is to mediate access rather than hand it over. Systems can route access through controlled infrastructure, such as proxies, gateways, or injection layers, that bind credentials to specific destinations and enforce their use. The agent can request access, but never holds the underlying secret. A compromised agent may still attempt unintended actions, but has far less freedom to abuse the authority granted to it.

The minimum control plane before deploying to production

In the episode, the hosts agreed that the control plane, the system that enforces how access is used across identities, tools, and actions, has to persist as agents act, across systems, over time, and through changing intent.

The baseline looks different from human access controls:

Credentials have to be short-lived and scoped to the task, not granted broadly and reused

Execution has to be constrained by the environment, not assumed to behave

Secrets can’t be exposed to the model; they have to be mediated at the point of use

Every action has to be attributable back to both the agent and the human who delegated it

Policy has to be enforced continuously, so intent drift is detected before it becomes an incident

Authentication still matters, but it can’t carry the full load. Identity tells you who delegates an agent; it doesn’t control what happens next.

Teams can't wait for standards to catch up

But IT teams don’t have the luxury of waiting. Agents are already operating in production.

Agentic security is still a moving target. To secure agents today, teams need continuous authorization, attribution, and mediated access. The standards agents will rely on around identity, delegation, and authorization are still evolving. Extensions to OIDC, verifiable credentials, and cross-provider delegation models are in development but not yet ready.

In the meantime, most teams aren’t waiting for a perfect model. They’re adapting existing controls, tightening credential lifetimes, introducing mediation layers, and treating agents as first-class machine identities with explicit boundaries.

Watch the full episode with OpenAI’s Fotis Chantzis

Fotis, Nancy Wang, and Jeff Malnick go deep on continuous authorization, attribution, and what it takes to secure agents in production on Zero-Shot Learning, 1Password's AI builder podcast.

Watch now2026-05-18, 00:00

AI coding tools have changed who builds software. The barrier to entry has dropped to the point where a designer, an analyst, or a first-time founder can turn an idea into a working app in an afternoon. That shift is real, and it's accelerating.

But every app needs to talk to something. Every API call, database connection, and automated workflow runs on secrets: API keys, tokens, SSH keys, service account credentials. And those secrets have to live somewhere.

For most people building with AI tools today, secrets end up in a .env file, a chat message, a script, or a note that will "definitely get cleaned up later." AI coding tools are good at helping you get something working fast, but they tend to suggest the fastest path to a functioning prototype, not the most secure one. The result is real credentials stored in plain text, scattered across machines and codebases, hard to track and easy for threat actors to find when a machine is compromised.

This is how credential sprawl starts. Not with a dramatic failure, but one unknowing shortcut at a time.

Developers rely on secrets management tools: Now AI builders need them too

Until recently, managing developer credentials was mostly a concern for engineering teams with the time and expertise to configure dedicated tooling. The people who generate secrets have historically been developers trained in secure coding.

Today, that's changed. Designers are prototyping internal dashboards. Operations teams are automating repetitive tasks. Data analysts are connecting pipelines to interactive graphics. Founders are shipping their first apps without engineering teams. None of them signed up to become cybersecurity experts, but they're now handling some of the most sensitive credentials and secrets in their organizations, often without a clear path to doing it safely.

The new wave of AI builders are frequently seeing directions from their vibe coding tool to either put plaintext credentials into a .env file on the computer desktop or store them in a secrets manager. The former is the most risky way to manage secrets, and the latter is the most secure. That is why every AI builder needs a secrets manager.

Secrets management tools are already in 1Password

1Password is where millions of people store their most sensitive information. What you may not know is that every 1Password subscription already includes a full set of developer security tools.

SSH Agent, the CLI, SDKs, environments, service accounts, and secret references are all part of 1Password. They let apps, scripts, and AI coding agents pull secrets from 1Password at runtime rather than hardcoding them into code or configuration files. Service accounts handle automation without requiring shared personal credentials. The CLI and SDKs mean good security can be part of the build process from the start, not something you retrofit when a prototype moves into production.

1Password's developer tools have been part of the product for years. But keeping secrets secure shouldn't require knowing which corner of the app to look in, whether you're a senior engineer, a data analyst, or someone who shipped their first app last month. Making these tools visible to everyone gives all builders the same starting point.

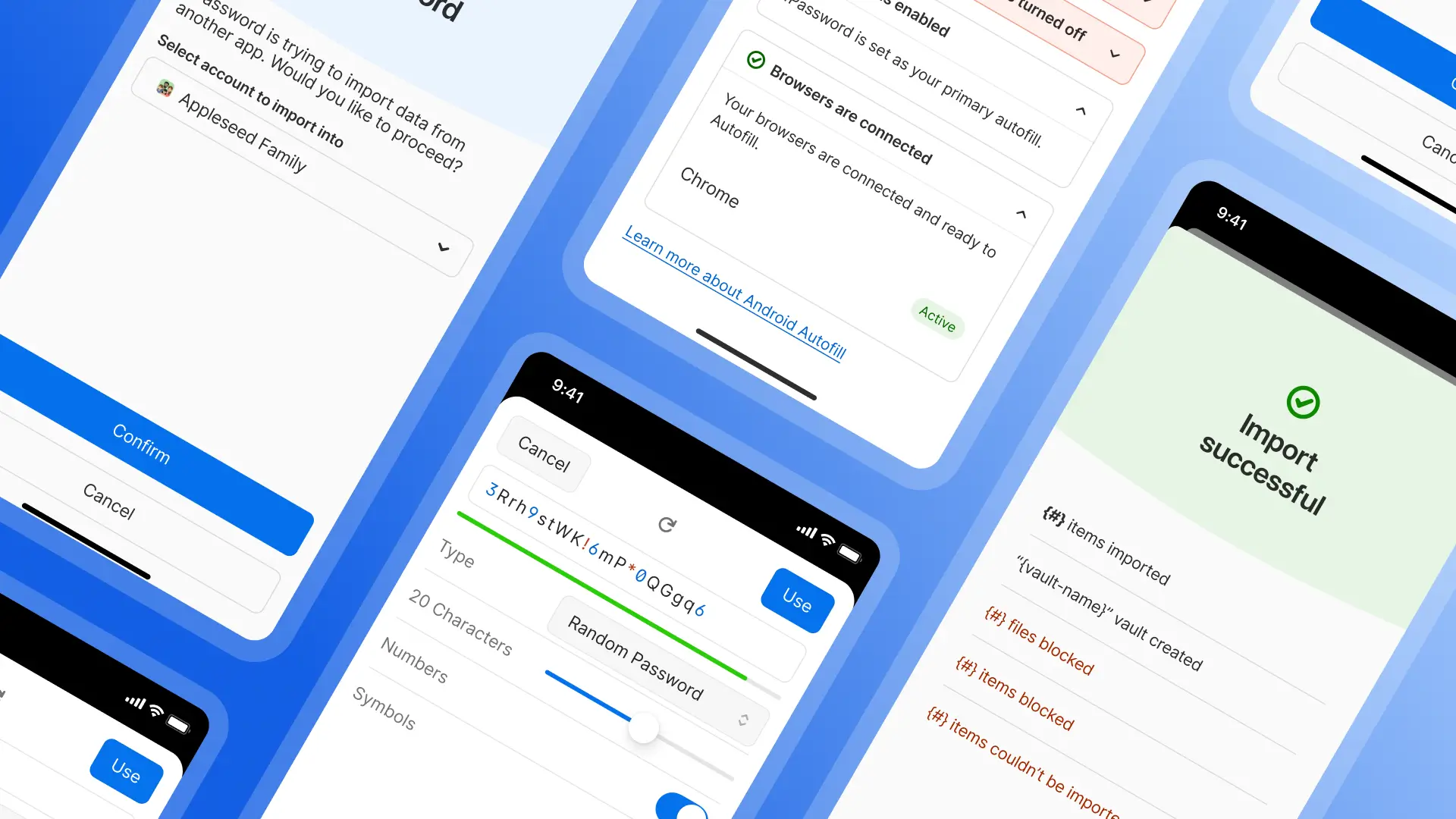

What's changing

Developer tools are now visible in the 1Password desktop app sidebar for all users, matching the experience already available in the browser extension.

We've also rebuilt our developer documentation. The new quick start guides are organized around what you're trying to do, not how the product is structured:

Developer quickstart: common setups, step by step

Admin quickstart: what's available and how to roll it out across your organization

Workflow guides for SSH and Git, developer secrets, deployments, AI access, and building integrations

Admins retain full control over how these features are used across their organization.

How you can use 1Password developer tools today

With 1Password developer tools, you can already:

Store and use SSH keys without keeping them on disk

Keep secrets out of code and .env files using 1Password environments and secret references

Use the CLI and SDKs to access credentials at runtime, including from AI-assisted build workflows

Create service accounts for automation instead of sharing personal credentials

Connect secrets into CI/CD pipelines without exposing them

These tools are included in your existing subscription. There's nothing additional to buy or deploy.

The secure path should be the obvious, easy path

AI tools have made building faster than it's ever been. The cost of that speed, if we're not intentional about it, is secrets scattered across machines, codebases, and chat logs that nobody is tracking, and credentials that remain valid long after a prototype becomes a production system.

1Password was built on the idea that security works best when it's the easy choice, not an extra effort on top of the work you're already doing. Making developer tools visible is a small change in the interface with a clear purpose: make the secure path the obvious one, so more builders will take it.

If you want to see how this fits into your team's development workflows, join us on June 10th for a live webinar on developer credential security. Or check out thequick start guides and see how it fits into what you're already building.

2026-05-14, 00:00

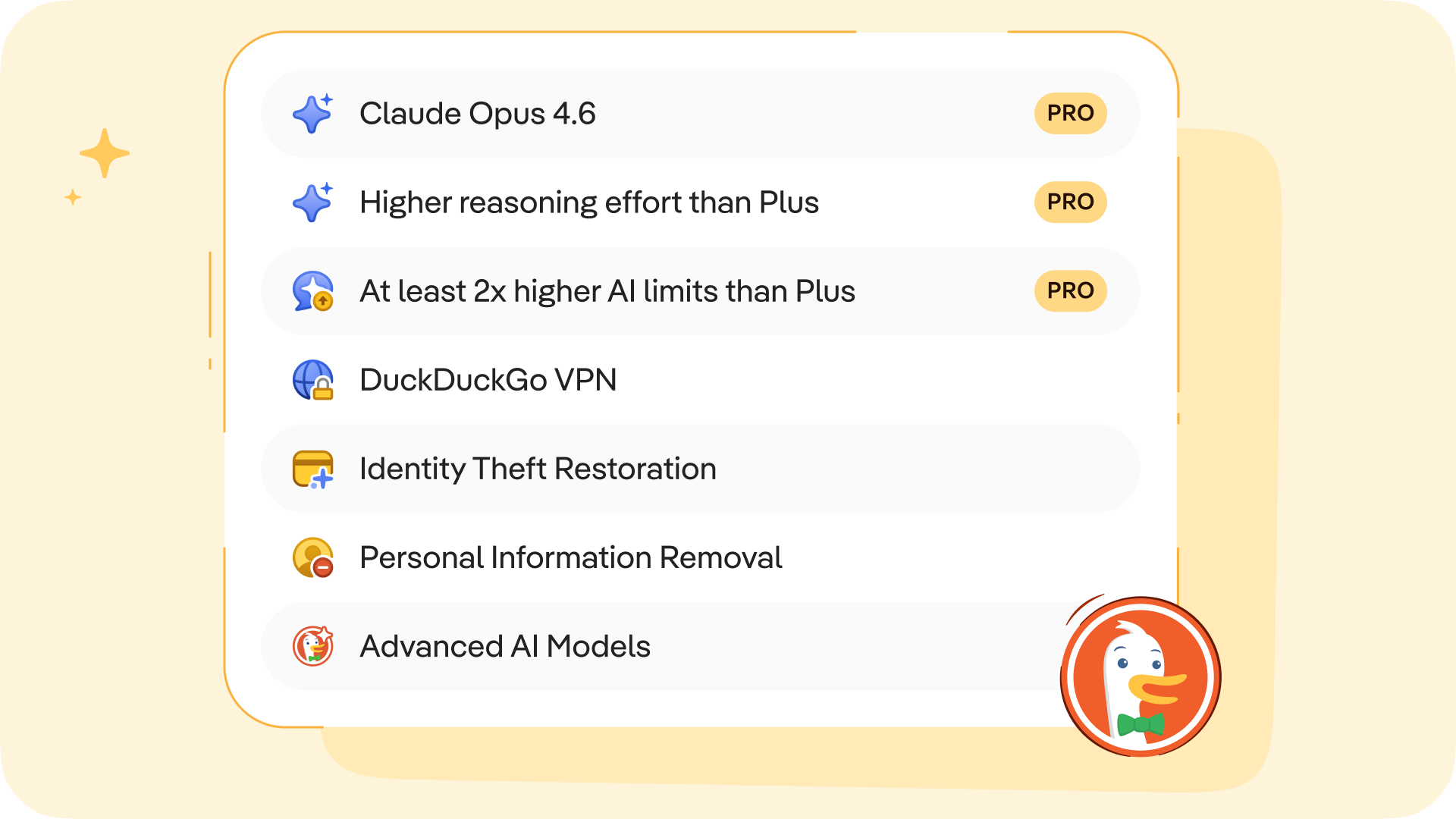

Today we're releasing the 1Password Device Trust MCP Server, an open-source server that connects your Device Trust data directly to the AI tools your team already uses, like Claude or ChatGPT. It's available now for all customers on Device Trust Connect.

As AI agents take on more of the work across your organization, IT and security teams need visibility and control that keeps pace. The Device Trust MCP Server is part of how 1Password is extending that control to the way security teams actually work today, inside AI tools, in plain language, with every action logged and auditable.

Once it's running, you can query your entire device fleet without leaving your AI client. Which devices have disk encryption off? Who owns the machines failing compliance checks? How long does it typically take to resolve a specific issue across the fleet? Instead of navigating dashboards or writing custom scripts, you just prompt.

What is MCP, and why does it matter?

If you use AI tools like Cursor or Claude, you may have already come across the Model Context Protocol (MCP). MCP has become the standard way to connect LLMs and AI agents to data sources and tools. It’s an open standard that lets AI tools connect to external data sources and take action on your behalf, with built-in controls over what those tools can access and do. It's supported by every major AI platform, and the ecosystem has grown from around 1,200 servers in early 2025 to over 6,400 today. IT and security practitioners are increasingly doing their work inside AI-powered tools, and MCP is what makes those tools useful for real administrative workflows.

The Device Trust MCP Server plugs your device security data into that ecosystem. Instead of switching between tools, admins can stay in their AI client of choice and get answers in seconds.

What you can do with the Device Trust MCP

Once connected, you can ask questions like:

"Which devices are currently failing checks?"

"Who owns the devices with disk encryption disabled?"

"Which of my devices are vulnerable to this CVE?"

"Which devices have the most Chrome extensions installed?"

"Show me all macOS devices running outdated versions of ChatGPT."

"What's the average time to resolve issues for this check?"

The server covers the full Device Trust API surface across 59 tools, including devices, people, issues, checks, audit logs, live queries, exemption requests, and reporting tables. Smart features like auto-pagination, field projection, and device-owner enrichment make it easy to pull complete, clean answers without extra steps. And because it's part of the broader MCP ecosystem, it compounds with your other AI integrations, combining device data with security intelligence, identity, or ITSM sources to answer questions no single tool could on its own.

How the Device Trust MCP server works

The MCP Server runs locally on your machine and binds to localhost by default, so your Device Trust data stays in your environment. Setup takes a few minutes and boils down to three steps:

Clone the open-source repo

Set your Kolide API key and MCP authentication (bearer) token as environment variables

Start the server and connect your AI tool (Claude, Cursor, or any MCP-compatible client)

From there, your AI tool handles translating natural language questions into the right API calls and returns clean, human-readable answers. Every invocation is logged for auditability, and all endpoints require bearer token authentication.

Full setup instructions are available in this support document.

Built for the way IT and security teams work with AI

1Password Device Trust already detects AI tools running on your endpoints. Now it gives security teams AI-native tooling to manage those endpoints too.

This server is a part of 1Password's broader investment in AI across our product suite. It joins the MCP Server for 1Password SaaS Manager, which provides SaaS visibility and governance data to AI agents. Together they reflect one of 1Password’s bedrock security principles: AI agents should work with your data in a way that's useful, auditable, and secure, without ever exposing credentials or sensitive secrets.

You can get started with 1Password Device Trust MCP Server here, or learn more about Device Trust on our product page.

2026-05-12, 00:00