Security news: dlutek.com

On this page you will find important news and warnings about security and privacy on the Internet!

(Please be patient: loading this page takes a few seconds.)

Here I present the latest news, warnings, and advice on Internet security and privacy. Only you can take care of your own security and privacy, and that requires knowledge, strategy, and constant vigilance.

At present we only have news feeds in English. If you know of any Polish or German news sources on this topic, send me their web addresses and I will try to add them to this page.

(On the PRIVACY POLICY page you will find my recommendations for a broad strategy for protecting your computer from hackers.)

DISCLAIMER:

- Articles are in English only.

- Copyright belongs to the authors of the individual articles.

- Links lead to articles on external websites, where you will most likely be tracked.

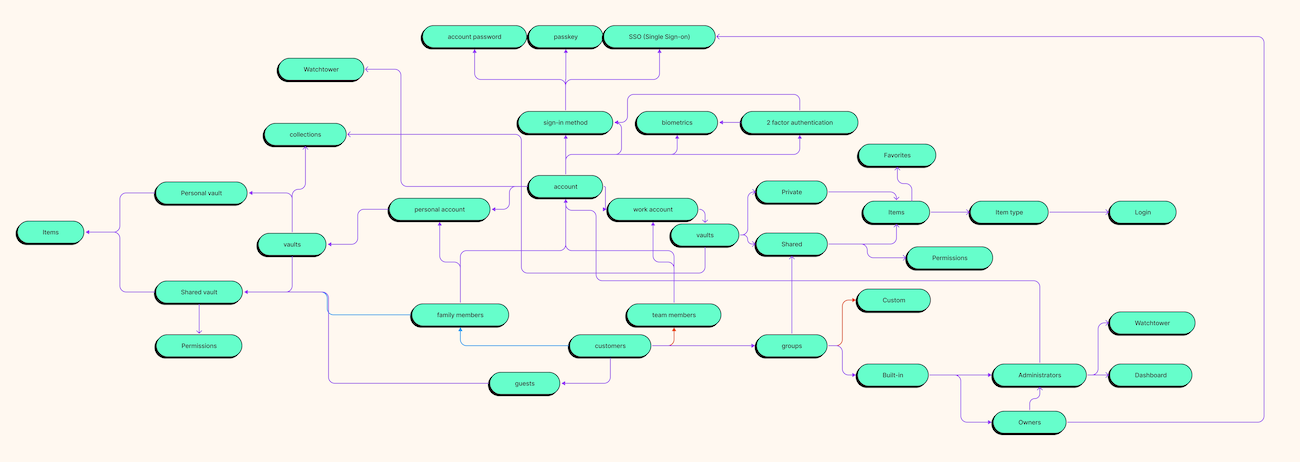

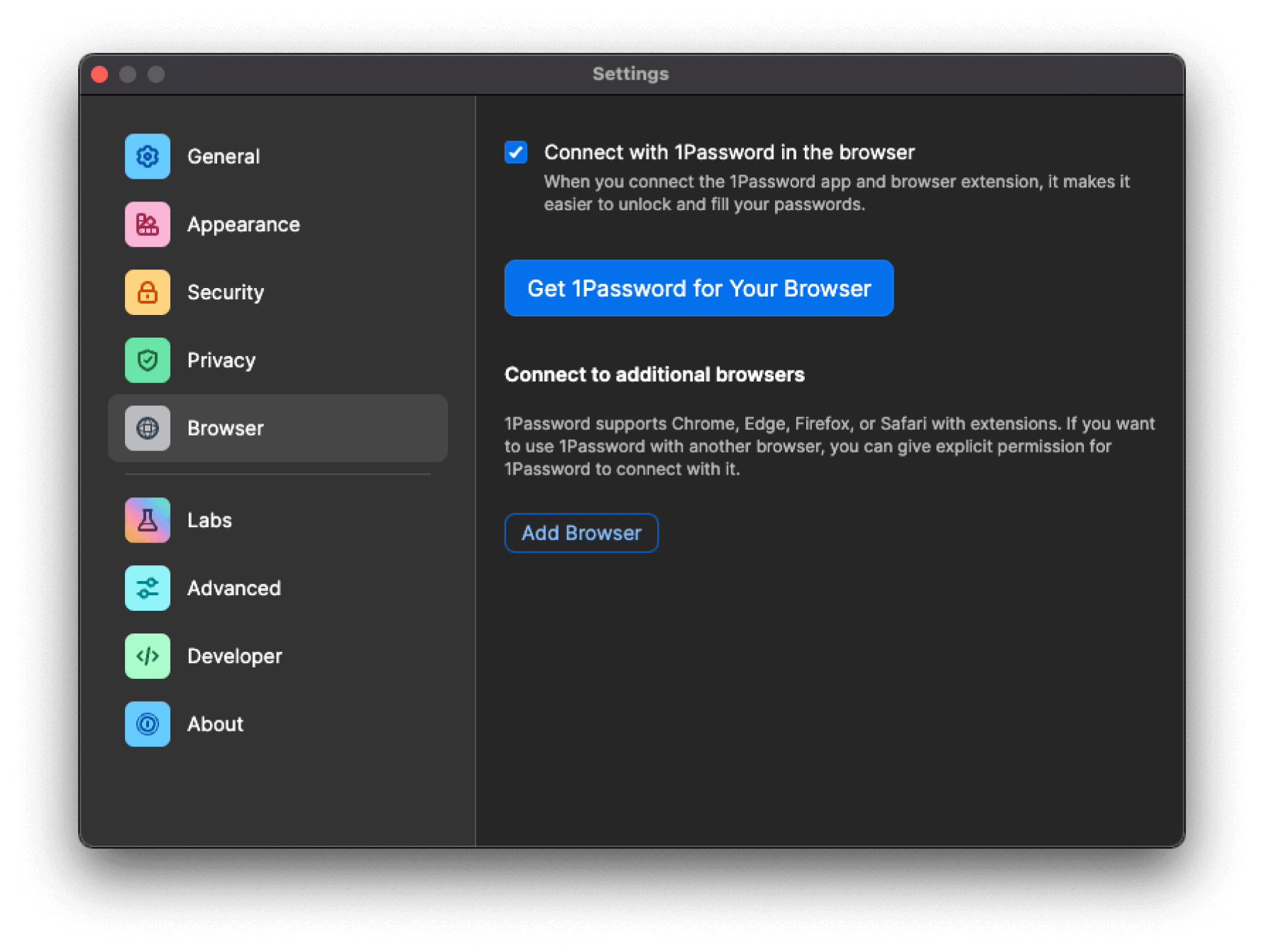

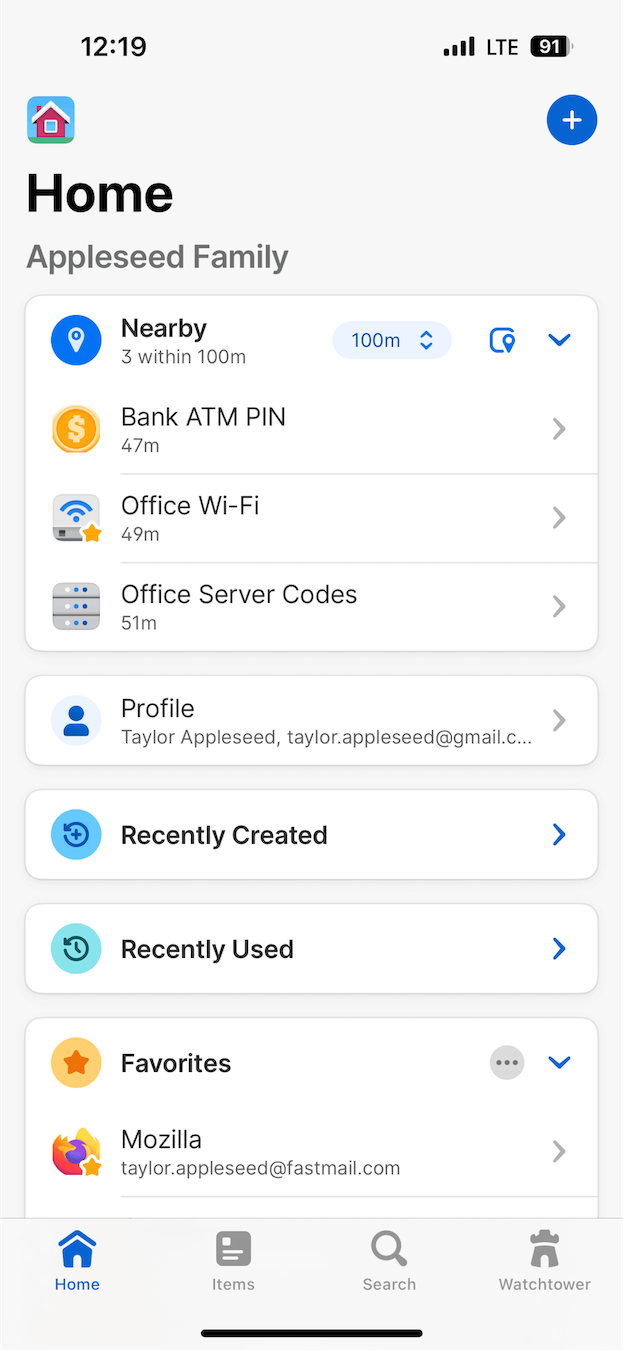

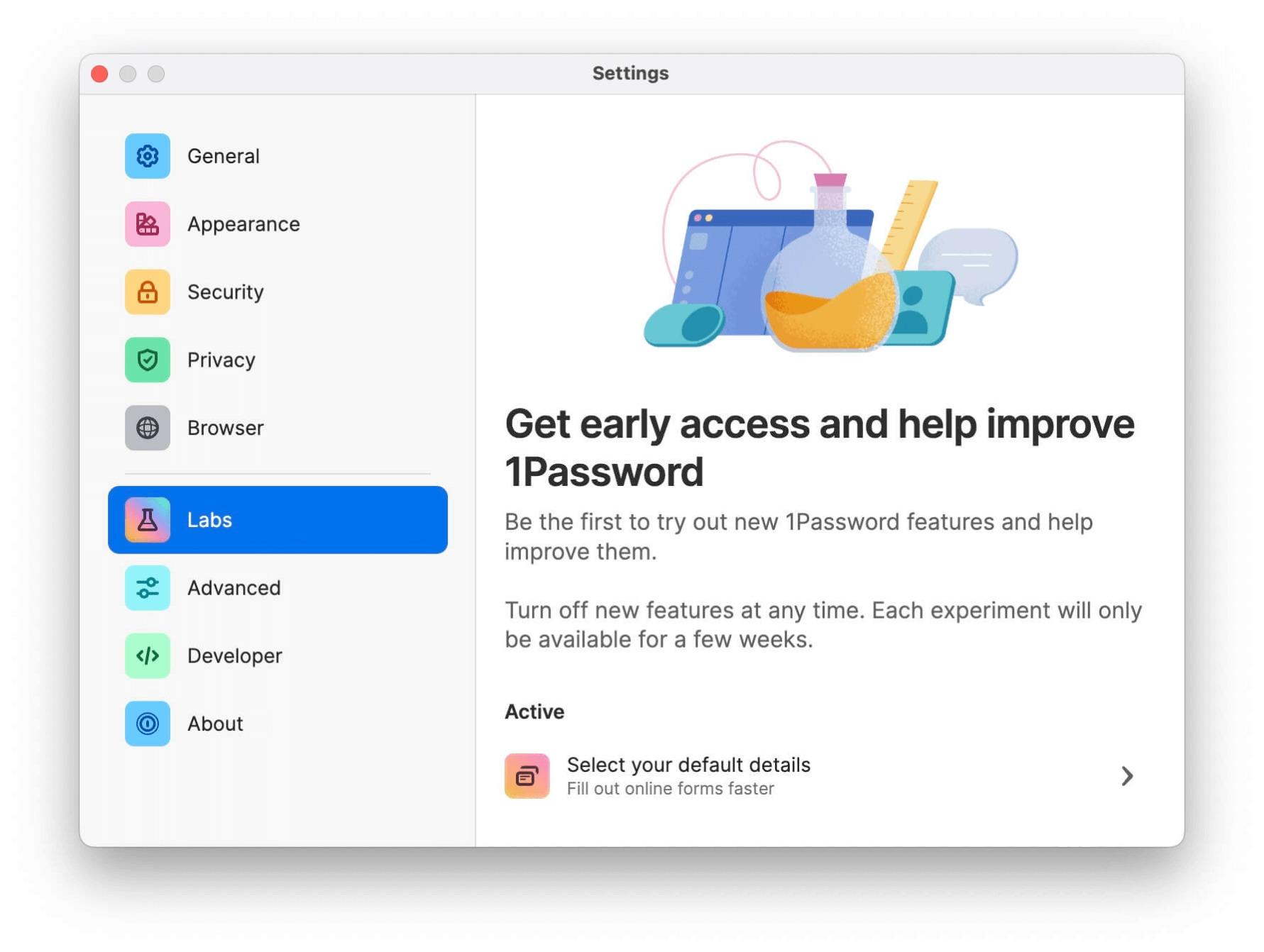

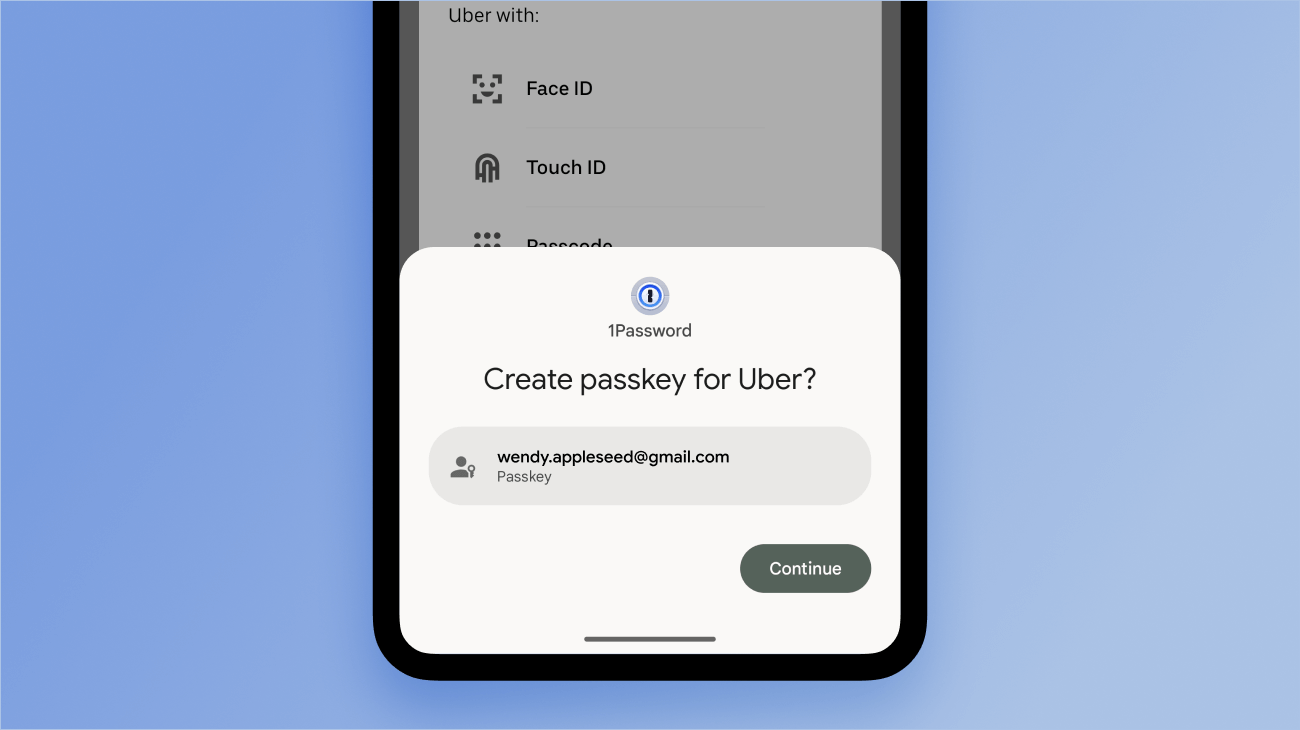

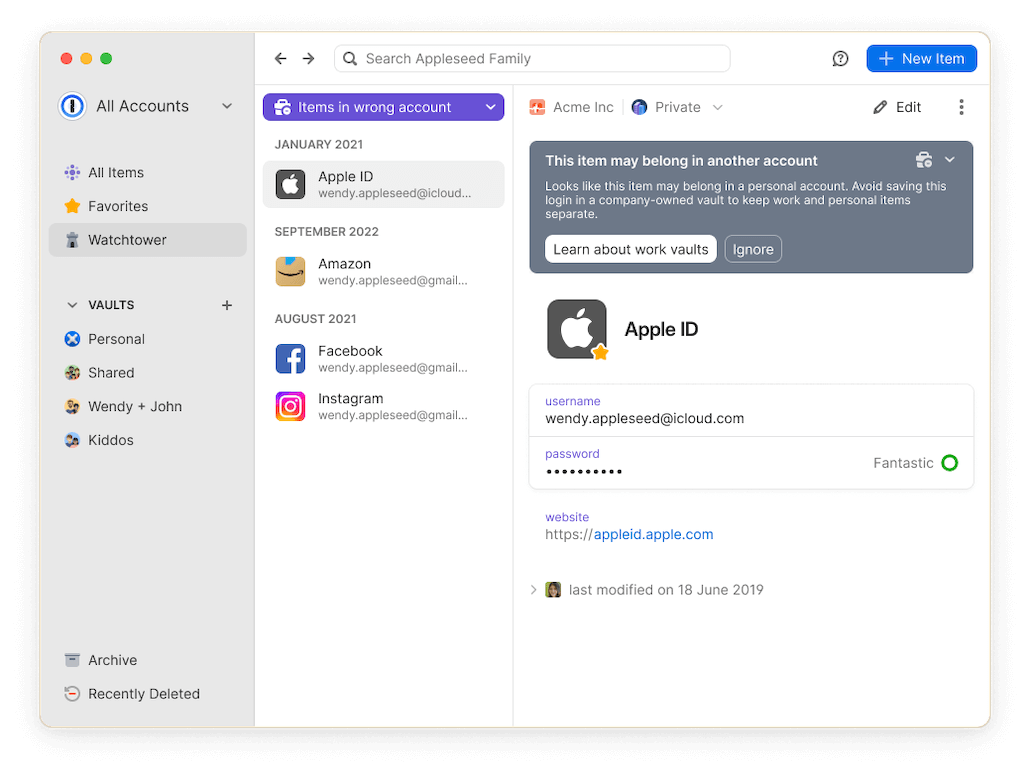

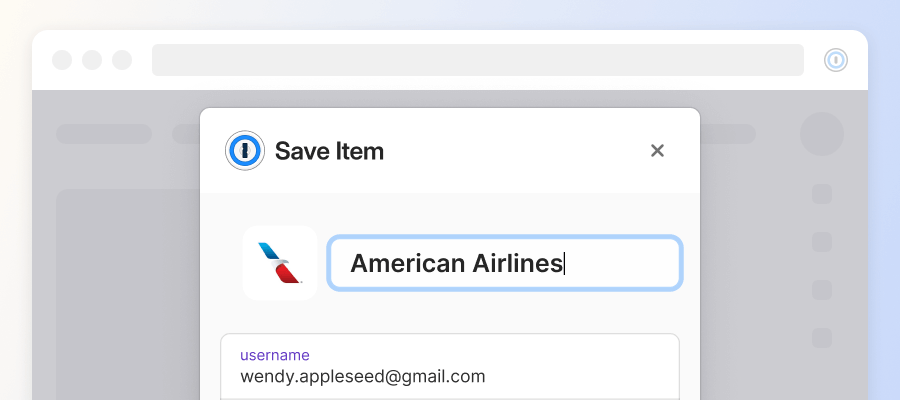

1 PASSWORD Manager, by Agilebits Software. Wide range of security and privacy topics.

2024-04-17, 00:00

It’s a concern for families everywhere: keeping kids safe online. For parents with teenagers, there’s the added complication of trying to balance a child’s safety with their right to privacy. But is online safety just families’ problem?

Policy advocate Stephen Balkam says everyone – including government, technology companies, law enforcement, and individuals – has a role to play. He thinks about these issues a lot as the founder and CEO of the Family Online Safety Institute (FOSI), a nonprofit that brings together government, industry, academia, and nonprofits to innovate around public policy, industry best practices, and digital parenting.

He chatted with 1Password’s Michael “Roo” Fey on the Random but Memorable podcast about how parents should approach online safety with their kids. Balkam also discussed the emerging threats to children’s online safety, parental rights and children’s rights, and how kids can always find a workaround to get online.

Want to learn more? Read the interview highlights below or listen to the full podcast episode.

Editor’s note: This interview has been lightly edited for clarity and brevity. The views and opinions expressed by the interviewee don’t represent the opinions of 1Password.

Michael Fey: What is the Family Online Safety Institute’s mission?

Stephen Balkam: To make the online world safer for kids and their families. We don’t say the word “safe” because there’s no such thing as 100% safe, but we can definitely make it safer. We do it through what we call the three Ps: Policy, Practices, and Parenting.

Enlightened public policy is what we try to persuade our friends on Capitol Hill of, in the state capitals, London, and Brussels. Public policy laws and regulations that are based and grounded in research and not in banner headlines from the Daily Mail or something like that. We work with policymakers on both sides of the aisle. We’re nonpartisan and do our best to encourage the emergence of good legislation.

“We’re nonpartisan and do our best to encourage the emergence of good legislation.”

We also talk to the regulators. We sit down with the FTC a great deal, Ofcom in the UK, and eSafety Commissioner Julie Inman Grant in Australia. These are the folks who actually have to enforce the laws as they are created. We work with them and provide a conduit to the technology companies, and vice versa, so there’s better understanding of the work they’re doing.

The second P refers to industry best practices. We work with our members to up their “trust and safety game”, if you will, and act under NDA as constructive critics of their products and services. To that end, we’ve worked with a number of the brand name companies to try to get them to put more resources behind the safety of their products.

The third P is an initiative we call Good Digital Parenting. We take everything we’ve learned from the laws and regulations, add that to the products and services that the tech companies are providing, including filtering tools, security devices, and so on, and translate that into easy-to-use language for parents.

“We have something called ‘The Seven Steps to Good Digital Parenting.’ You can put that on your fridge.”

We have something called The Seven Steps to Good Digital Parenting. You can put that on your fridge to remind you to keep talking with your kids, to set ground rules, and to be a good digital role model yourself.

MF: How has your work evolved over the years? And what do you see as the most pressing challenges and emerging threats to children’s online safety today?

SB: When we started we only had two Ps: the policy side and the industry best practices side. Within a few years, we could see there was a real need to help parents. We call it empowering parents to confidently navigate the web with their kids.

All of the issues that people have become familiar with – cyberbullying, sexting, overuse, oversharing, and screen time – these have been really vexing questions over the last decade or so.

I would say over the last year or two, the emergence of generative AI through ChatGPT and other products has just exploded onto the scene and caused a new wave of issues, concerns, fears, and excitement. It’s why we decided to do a year-long research project on it last year.

MF: Tell me more about that. What have the findings been? What did you set out to discover? What was the focus of the project?

SB: We looked at parents and teens in the U.S., Germany, and Japan to find out their experience of generative AI. That includes their concerns, their biggest fears, their biggest hopes, and just generally their attitudes toward it.

Surprisingly, it was the first time where the kids admitted that their parents knew more about generative AI than they did. Every time we’ve looked at anything from social media, the use of Snapchat, Instagram, and in the early days, Facebook, teens were far ahead of their parents in terms of usage knowledge.

“The kids admitted that their parents knew more about generative AI than they did.”

But with GenAI, we found something really interesting. I think it’s because a lot of parents were already using ChatGPT and similar products for their work. And not surprisingly, they were quite concerned about generative AI taking over their jobs, so they really got in deep.

In terms of what parents were concerned about for their own kids, it was that they wouldn’t develop critical thinking skills in the way that they had to, going through school and college and into the workforce. They were concerned their kids would just have their essays written for them by AI.

When we asked teens about their biggest concerns, ironically, given that they’re not in the workforce yet, their biggest concern was whether there will be jobs for them when they do get into the workforce.

“The biggest concern [for teenagers] was whether there will be jobs for them when they get into the workforce.”

Also, the use of generative AI tools to create images and videos to cyberbully – that wasn’t a concern for parents, but it was definitely one for teens. That’s a huge concern if you’re still at school.

MF: FOSI aims to create a culture of responsibility in the online world. What role do you see individuals, tech companies, and policymakers playing in fostering that safer digital environment for children?

SB: If you can envision a large circle, at the top of the circle would be government. Government definitely has a role to play in setting the rules for what is allowed and not allowed online.

It’s a complicated role, particularly in the United States, where we have the First Amendment. We have this tricky balance between rights of privacy and safety. It’s not easy legislating in this space but the government has a role to play in providing a legal framework and to urge folks to do more and better in this space.

“The government has a role to play in providing a legal framework and to urge folks to do more and better in this space.”

Law enforcement is also part of this picture and part of the circle. For the really heinous stuff, we need well-resourced law enforcement to go after the bad actors. In many cases, law enforcement does not have the resources it needs, but even so, it’s part of the picture.

It’s also not acceptable for industry just to put out tools and products and services without thinking about online safety. They definitely have a role to play. When I go and talk to VCs, I say: “It’s great you have a gifted CEO and a fabulously skilled CTO, but who’s your chief online safety officer? Let’s make sure you bake that in.” Safety by design, if you will.

Parents, teachers, even the kids themselves, have a responsibility for maintaining safety online. We encourage parents to use parental controls. When kids hit high school, the emphasis shifts to being more of a co-pilot with your teen and working with them so that they utilize the online safety tools that have been created for them – to report, block, be private, and in many ways, shape or administer their online lives.

“When kids hit high school, the emphasis shifts to being more of a co-pilot with your teenager and working with them so they utilize the online safety tools that have been created for them.”

And then teachers, of course, have a huge role to play in terms of giving online safety advice or lessons and modeling how to be not just safe, but civil online as well.

MF: There seems to be a real interplay between parental rights and children’s rights at the moment. Can you talk about that?

SB: I should have said right at the front that FOSI is an international non-profit. What I often notice in Europe is there’s a far greater emphasis on children’s rights and teens’ rights to access content, gather online, and express themselves. And also a right to be safe when they’re online. Here in the U.S., we tend to emphasize parental rights, and that often has pretty heavy connotations with it, particularly in certain states.

Parents, particularly those who have younger children, absolutely have the right and the responsibility to keep their young kids safe online and use parental controls. But things shift in the teen years. Kids, at some point or another, start to have rights themselves, including rights of privacy and a right not to be surveilled by their parents while they’re online.

“Kids, at some point or another, start to have rights themselves, including rights of privacy and a right not to be surveilled by their parents while they’re online.”

Are we saying that kids, until they’re 18, have zero rights? And then, once they hit 18, inherit 100% rights? Or is there a gradual curve upwards? Not surprisingly, our organization argues that kids have rights as they age, and it’s a gradual curve.

It’s not an easy thing. It’s not something you can point to and say: “Absolutely this is the point at which they have X, Y, and Z rights.” But it is a commonsensical thing and also a realization that 15-, 16-, 17-year-olds will have the ability to circumvent whatever you try and put in their way.

MF: How does FOSI educate parents about online safety? What are the key principles or tips you have for parents?

SB: We developed the seven steps to condense all of our various messaging and advice. It boils down to: Talk to your kids. That talk should be done early and often.

When I say early, I mean as young as kindergartners. They can understand the word “bad”, they can understand the word “danger”, they can understand concepts like: “We’re not going to let you have this whenever you want it. There will be times when you can have it and times when you can’t. We’ll also set up some rules where there will be consequences if you misbehave.”

“Talk to your kids. That talk should be done early and often.”

Laying all that out early is absolutely critical so the kid knows that when you act, you’re not doing it unfairly. It’s based on stuff you’ve already talked about. But it’s an ongoing conversation. You’re going to have to do it almost on a yearly basis.

Back to school is the time that we often suggest as a good time. “Look, you’re now going into third grade. We’re getting you this gizmo watch so that you can contact us and we can contact you, but no, you’re not getting a phone.”

Also, milestones, like: “You’re turning 13, you’re now legally able to go on to various social media sites, but maybe we’re not going to. We want to discuss each one in turn.”

And at 14 or 15, sitting down with them before they go back to school: “Now show me how you report something on Snap. Tell me how you’re remaining private on Instagram.” This co-pilot concept is about working with your kid to make sure they’re utilizing the tools that are there for them rather than you trying to lock everything down. So that’s number one. Talk with your kids.

Number three is use parental controls. We talked about that before.

Number seven, probably the most important, is to be a good digital role model yourself. The top complaint I get from kids when I work in schools is: “I can’t get my parents’ attention. My mom is always on Facebook. My dad is always checking his email.” Put your own screens down and give your kids face time.

“The top complaint I get from kids when I work in schools is: ‘I can’t get my parents’ attention. My mom is always on Facebook. My dad is always checking his email.’”

We talk about tech-free zones in the house. A tech-free zone includes the bedroom. We’re not fans of screens in kids’ bedrooms. No screens at the table if you sit at the table for a meal. Tech-free time zones, so maybe you have a 9:00 PM or 10:00 PM curfew where everyone puts their devices in a closet to charge up overnight.

We say to parents at PTA meetings: “Raise your hands if you use your phone as an alarm clock.” And almost everyone’s hands go up. The next thing I say is: “Don’t. Don’t use your phone as an alarm clock.”

“Little kids love to jump in your bed in the morning. They’ll see that blue haze on your face and they’re going to want the same thing.”

Because it’s the last thing you’re going to look at when you’re going to bed. It’s also the first thing you’re going to look at, and sometimes even before you’re brushing your teeth, you’ll be checking your email and your texts and the weather. And if you have little kids, they love to jump in your bed in the morning. They’ll see that blue haze on your face and they’re going to want the same thing. Kids will do what you do rather than what you tell them to do.

MF: Do you think that teenagers are often neglected in the conversation around online security and almost seen as something to be managed instead of someone to be included?

SB: Oh, for sure. That’s why whenever we can, we include teenagers in our surveys, in our research. It’s extremely important to hear from them because it’s their lived experience that will inform public policy, as well as the products and services that tech companies build.

MF: Where can people go to find out more about you, the Family Online Safety Institute, and the incredible work that you’re doing?

SB: Our website is fosi.org. We’re also on LinkedIn, X, Instagram, all the usual places. And we have a YouTube channel. You’ll find a number of quite amusing videos with actual parents and kids illustrating the seven steps.

2024-04-16, 00:00

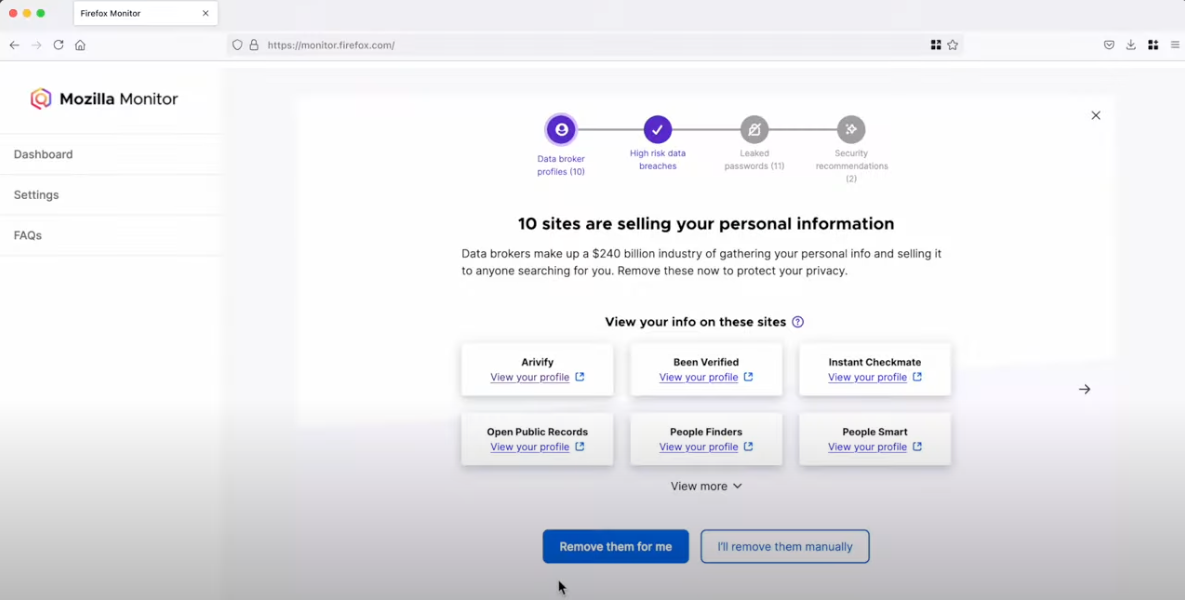

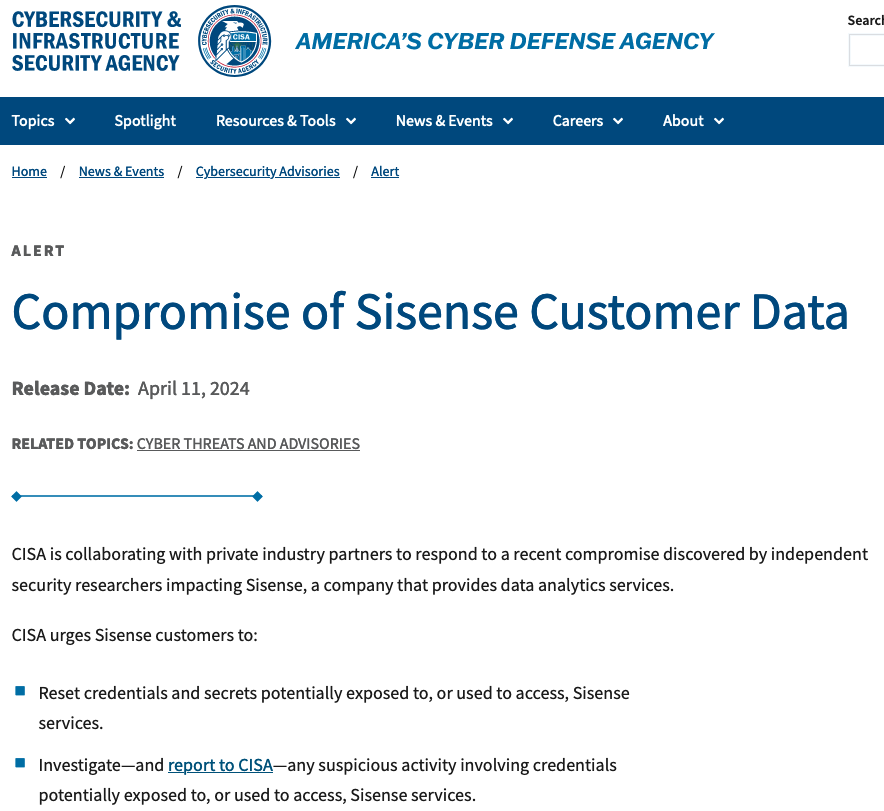

The U.S. Cybersecurity and Infrastructure Security Agency (CISA) recently announced that they are investigating a major breach at Sisense, a business intelligence company.

As a result of the breach, it is critical that Sisense customers take action immediately to minimize the impact of any breached credentials. Here is a quick overview of what happened, and a look at what needs to be done to secure your developer secrets to protect against follow-on data breaches.

What caused the Sisense breach?

According to reporting by Brian Krebs, attackers gained access to Sisense’s self-hosted GitLab environment. From there, they found an unprotected token that gave them full access to the company’s Amazon S3 Buckets. Once they had full access to the company’s cloud environment, they were able to copy and exfiltrate several terabytes of customer data, including millions of access tokens, passwords, and even SSL certificates.

Who’s impacted?

Exact details have not been published; however, it appears that over 1,000 companies (and possibly over 2,000) may have been impacted, ranging from startups to global brands. The company serves businesses in the finance, healthcare, retail, media & entertainment, software and technology, and transportation industries.

While the initial breach is severe on its own, it’s the potential for downstream attacks on companies and consumers that likely has CISA concerned. The stolen credentials could give the attackers access to additional cloud environments containing consumer information as they move downstream from their initial target to Sisense’s customers. Many of these credentials – SSL certificates, SSH keys, and API tokens – exist for an extended period of time by default. As a result, it is imperative that Sisense customers take action to secure their developer credentials.

Sisense breach: actions to take

Sisense has shared guidance with their customers about the types of credentials to rotate, including but not limited to account passwords, single sign-on (SSO) client secrets, database credentials, Git credentials, API tokens, and SSL certificates. Impacted customers should:

- Review guidance and communications from Sisense for the full list of impacted credentials.

- Audit and identify the most privileged credentials that protect customer data, especially personally identifiable information (PII) and personal health information (PHI).

- Begin rotating credentials, working backwards from the most privileged to the least privileged.
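The ordering in the last step can be sketched in a few lines. This is only an illustration: the `Credential` fields, the numeric `privilege` score, and the sample inventory are hypothetical and do not come from Sisense's guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    name: str
    privilege: int    # hypothetical score: higher means broader access
    guards_pii: bool  # True if the credential protects PII or PHI


def rotation_order(credentials):
    """Sort most-privileged first; credentials guarding PII/PHI win ties."""
    return sorted(
        credentials,
        key=lambda c: (c.privilege, c.guards_pii),
        reverse=True,
    )


# Hypothetical inventory produced by the audit step.
inventory = [
    Credential("dashboard-user-password", 1, False),
    Credential("prod-db-admin", 3, True),
    Credential("ci-api-token", 2, False),
    Credential("sso-client-secret", 3, False),
]

plan = [c.name for c in rotation_order(inventory)]
print(plan)
```

In practice the score would come from the audit itself, for example from the scope of the token or the sensitivity of the data behind it.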

Lessons learned – and what to do going forward

Even if you were not directly impacted by the Sisense breach, it’s important to review your security posture, especially when it comes to developer secrets and devops environments. As we’ve written about in the past, businesses of all sizes struggle to protect developer secrets. Even sophisticated security and engineering organizations can fall victim to secrets leaks.

Here are some steps you can take to secure developer credentials:

Secure developer credentials like API tokens and SSH keys

Despite the privileged access developer secrets provide, they often do not have the same degree of protection as passwords, especially since IT and security teams can lack visibility into the health of these credentials. These types of developer secrets should be secured with end-to-end encrypted storage, like an enterprise password manager (EPM).

Use secrets references, even in dotenv files

It’s too easy to accidentally commit a secret, even if it’s added to an environment configuration file. The best defense is to use secrets references that can be replaced programmatically at run time.
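As a rough sketch of the idea, the snippet below keeps only a reference in the dotenv text and swaps in the real value at run time. The `op://vault/item/field` shape is assumed here for illustration, and `fake_lookup` with its in-memory `FAKE_VAULT` is a hypothetical stand-in for whatever secrets manager actually resolves the reference.

```python
import re

# Assumed reference shape for this sketch: op://vault/item/field
REFERENCE = re.compile(
    r"^op://(?P<vault>[^/]+)/(?P<item>[^/]+)/(?P<field>[^/]+)$"
)


def parse_dotenv(text):
    """Parse simple KEY=VALUE lines, skipping blanks and # comments."""
    entries = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        entries[key.strip()] = value.strip().strip('"')
    return entries


def resolve(entries, lookup):
    """Replace reference values via lookup(vault, item, field); keep the rest."""
    resolved = {}
    for key, value in entries.items():
        match = REFERENCE.match(value)
        resolved[key] = lookup(**match.groupdict()) if match else value
    return resolved


# This text is safe to commit: it holds a reference, not the secret.
DOTENV = """
DB_HOST=db.internal.example
DB_PASSWORD=op://dev/database/password
"""

# Hypothetical stand-in for a real secrets manager.
FAKE_VAULT = {("dev", "database", "password"): "s3cr3t-from-vault"}


def fake_lookup(vault, item, field):
    return FAKE_VAULT[(vault, item, field)]


env = resolve(parse_dotenv(DOTENV), fake_lookup)
```

The committed file never contains the password, so an accidental push exposes only the location of the secret, not its value.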

Inspect Git commits for secrets

Although it is more effective to address the root causes of developer secrets leakage, businesses and organizations should inspect Git commits as a last safety check to make sure credentials are not accidentally committed to shared repositories. GitHub recently announced that they have turned on push protection for all public repositories, but this feature needs to be applied to all repositories, public or private, cloud or self-hosted.
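A last-line check of this kind can be approximated with a few regular expressions run over the added lines of a diff. The three patterns below are illustrative assumptions, not the rule set of GitHub push protection or any other scanner, and the sample diff uses the placeholder access key ID from AWS documentation.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)\b(?:password|secret|token|api_key)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_diff(diff_text):
    """Return (line_number, pattern_name) for each suspicious added line."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only lines added by the commit matter; skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


SAMPLE_DIFF = """\
+++ b/settings.py
+DEBUG = False
+AWS_KEY = "AKIAIOSFODNN7EXAMPLE"
-password = "old-hardcoded-value"
+password = "correct-horse-battery"
"""

print(scan_diff(SAMPLE_DIFF))
```

A check like this could run as a pre-commit or pre-receive hook, which is one way to cover private and self-hosted repositories as well as public ones.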

Strengthen cloud infrastructure by blocking public access by default

Amazon S3 Block Public Access can help you make sure that your Amazon S3 buckets don’t allow public access. As of April 2023, block public access is turned on by default for all new Amazon S3 buckets. For any created prior to April 2023, the setting should be configured for your AWS accounts or within individual Amazon S3 buckets. Another preventative security measure for Amazon S3 buckets is to use IAM Access Analyzer to regularly monitor which buckets (and other resources) are accessible outside your account or AWS environment.
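For buckets that predate the default, a small audit helper can flag configurations that do not enable all four Block Public Access settings. This sketch works on plain dictionaries so it runs without AWS access; the bucket name in the comment and the `legacy` configuration are hypothetical.

```python
# The four settings that make up an S3 Block Public Access configuration.
REQUIRED_SETTINGS = (
    "BlockPublicAcls",
    "IgnorePublicAcls",
    "BlockPublicPolicy",
    "RestrictPublicBuckets",
)


def full_block_configuration():
    """Build a configuration with every setting enabled."""
    return {name: True for name in REQUIRED_SETTINGS}


def missing_blocks(configuration):
    """Return the settings that are absent or disabled."""
    return [
        name for name in REQUIRED_SETTINGS
        if not configuration.get(name, False)
    ]


# With boto3 installed and credentials configured, the dictionary could be
# applied roughly like this (bucket name is hypothetical):
#   boto3.client("s3").put_public_access_block(
#       Bucket="example-bucket",
#       PublicAccessBlockConfiguration=full_block_configuration(),
#   )

legacy = {"BlockPublicAcls": True, "IgnorePublicAcls": True}
print(missing_blocks(legacy))
```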

How 1Password can help

While organizations must react to this breach, the most effective solution to this type of breach is to implement the practices outlined in this post to secure developer secrets. To that end, 1Password provides an enterprise password manager (EPM) that secures developer secrets while simplifying the complexity of developer workflows.

1Password’s offerings provide critical secrets management functionality to prevent breaches caused by developer credentials, and are available in all 1Password plans:

Protect and manage developer secrets

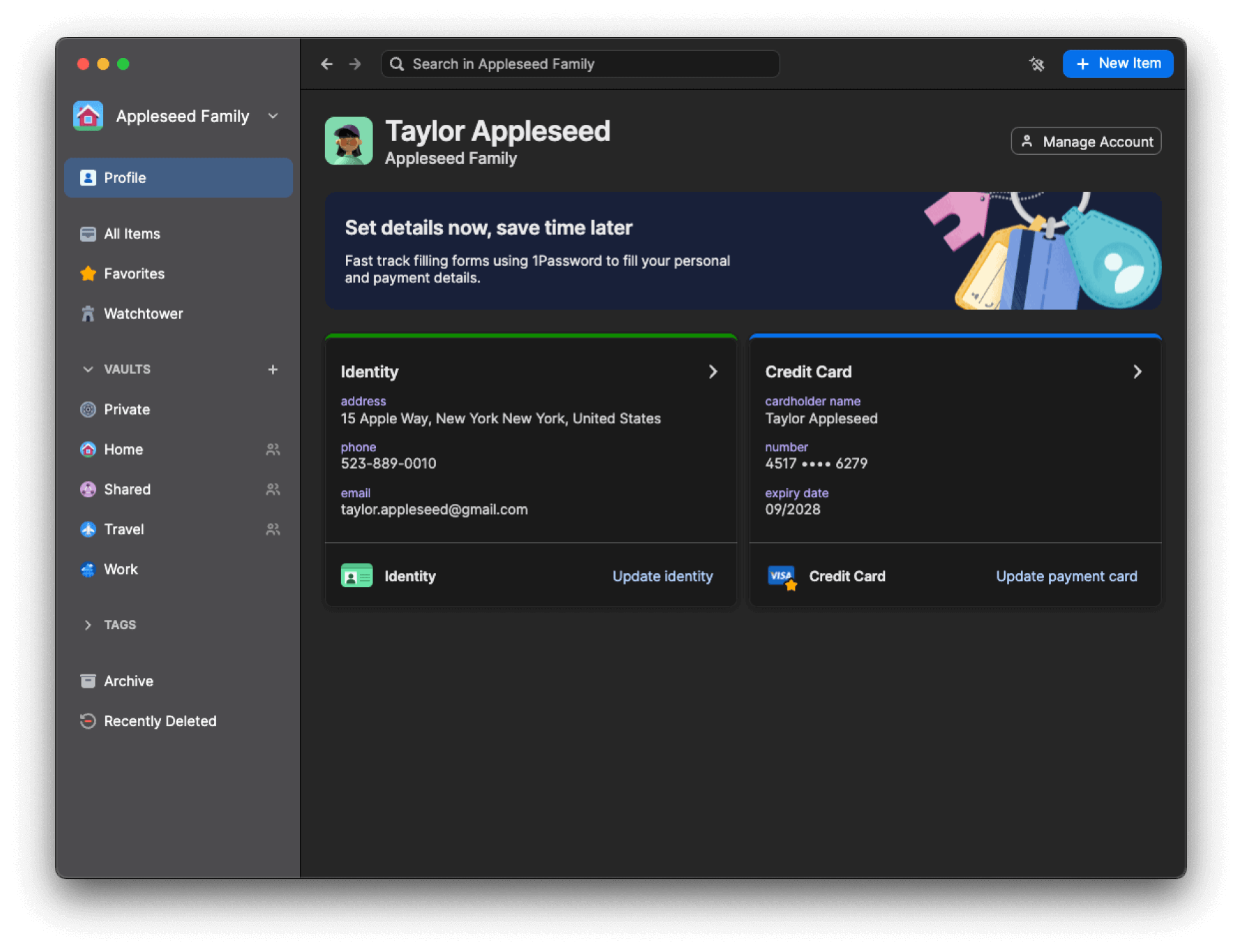

Store SSH keys, API tokens, database credentials, and more in 1Password’s end-to-end encrypted vaults. Use 1Password to generate, store, and biometrically authenticate SSH connections so SSH private keys are never saved as plaintext on your local disk.

Keep secrets out of code

Use the 1Password VSCode Extension to find secrets in your code as you work, one-click save them to 1Password, and then replace them with a secrets reference.

Securely deploy to production

Integrate 1Password with your CI/CD pipelines (GitHub Actions, CircleCI, and Jenkins) and infrastructure as code (IaC) tools (Kubernetes, Terraform, Pulumi, Ansible) to programmatically replace secrets at runtime.

While it’s not possible to prevent 100% of breaches, it is possible to empower software engineering teams and other employees with the tools they need to keep secrets safe.

You can get started with a 14-day free business trial, or by visiting our developer docs to learn more about how you can secure developer secrets.

2024-04-11, 00:00

This is the final post in a series about shadow IT. In this series, we’ve detailed how and why teams use unapproved apps and devices, and cybersecurity approaches for securely managing it. For a complete overview of the topics discussed in this series, download Managing the unmanageable: How shadow IT exists across every team – and how to wrangle it.

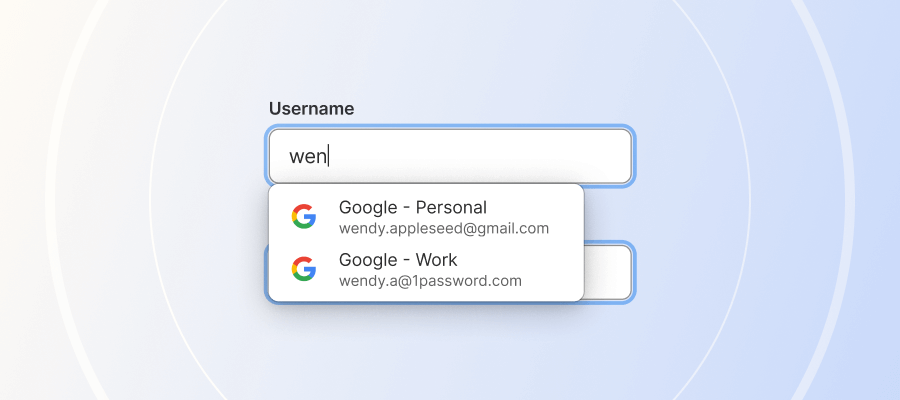

We all use passwords and other secrets to access things at work. It’s the IT team’s responsibility to secure those secrets. For most departments, secrets management needs are simple: They sign in to apps and websites with passwords, or passkeys, or sometimes with multi-factor authentication.

But developers have unique workflows and secrets management needs.

The types of secrets developers manage every day include SSH keys, database and API keys, server credentials, and other encryption keys. These keys power authentication methods developers use every day to access systems, integrate applications, securely transfer files, and more. To complicate matters, developer secrets often live outside IT’s purview.

That means developers are often left to manage secrets themselves, but that scenario can create serious risks for companies. A 2023 GitGuardian study revealed that in just one popular open-source repository used by developers, nearly 4,000 unique secrets were exposed across all projects. Of those unique secrets, they found 768 were still in active use. Separately, in the first two months of 2024, GitHub reported it found more than one million leaked secrets on public repositories, which translates to a rate of about 12 secrets leaked per minute during that time. That’s a lot of leaks!
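The per-minute figure can be checked with quick arithmetic, assuming "the first two months of 2024" means 1 January through 29 February and taking one million as the lower bound of the reported count.

```python
from datetime import date

# 2024 is a leap year, so January plus February is 60 days.
days = (date(2024, 3, 1) - date(2024, 1, 1)).days
minutes = days * 24 * 60

leaked = 1_000_000  # "more than one million", used as a lower bound
rate = leaked / minutes

print(days, minutes, round(rate, 2))  # 60 86400 11.57
```

Because the reported count is "more than" one million, rounding 11.57 up to about 12 per minute is consistent with the article's figure.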

Secrets management, in other words, is a growing problem. To make matters worse, the typical shadow IT concerns that plague non-developer teams apply to developers, too. That is, the passwords and credentials they use to sign in to apps and websites may not be secure – and IT may not even know about it.

The challenge, should IT and security teams choose to accept it: Secure encryption keys and other developer secrets no matter which apps and tools are being used – and do it without adding friction to already complex workflows.

Breaking down developers’ unique secrets management needs

Due to the nature of their roles, developers building software products have direct access to key systems and sensitive data. In addition, they need to work with secrets directly in their terminal, code editor, and deployment pipelines. Engineering teams may also need to share secrets for different applications, or when configuring their development environments.

To streamline this process, developers sometimes store secrets somewhere convenient in plaintext, or hardcode them into the source code while working. Either of these scenarios – not exactly secrets management best practices – can lead to data breaches or compromised systems.
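One common alternative to hardcoding is to read the value from the process environment and fail loudly when it is missing. The sketch below is a generic pattern, not something the article prescribes, and the `DATABASE_URL` name is a hypothetical example.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not present in the environment."""


def require_secret(name, environ=os.environ):
    """Return the named secret, refusing to fall back to a baked-in default."""
    value = environ.get(name)
    if not value:
        raise MissingSecretError(f"{name} is not set")
    return value


# Usage with an explicit mapping, so the example runs anywhere.
sample_env = {"DATABASE_URL": "postgres://app@db.internal.example/app"}
database_url = require_secret("DATABASE_URL", sample_env)
```

Raising an error instead of supplying a default keeps a placeholder credential from quietly ending up in the source tree.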

A brief introduction to secret sprawl

The growing number of different tools and cloud environments developers use to do their work has made secrets management more difficult. A 1Password report revealed that 50% of individual contributors in IT or DevOps roles admit they’re storing secrets in more locations than they can count. 25% of companies said their secrets are stored in 10 or more locations.

And while the IT team has traditionally been responsible for managing passwords, IT teams often lack visibility and control over developer secrets like SSH keys and API tokens. This seems to be the norm: approximately 80% of companies surveyed by 1Password said they didn’t manage their secrets well, and 60% have experienced secret leaks. In fact, 75% of developers admitted they had access to sensitive information like a former employer’s infrastructure secrets (!).

Lack of developer-specific toolsets compounds the problem

Why is it so hard for developers to secure secrets like SSH keys and database credentials? Security and productivity are often in tension. One survey found that 73% of developers agree that the work or tools their security team typically requires them to use interfere with their productivity and innovation.

Each cloud provider, application, server, database, or other tool a developer uses typically requires separate authentication – and might require learning specialized tooling for that environment. Authenticating for multiple tools can interrupt workflows, slowing developers down – which can be unacceptable for teams trying to deliver projects on tight deadlines.

As a workaround, sometimes developers store credentials insecurely or take shortcuts to enable faster access. Lacking a secure, productivity-friendly alternative, this is how you end up with hard-coded credentials.

In addition to taking shortcuts, lack of education around proper secrets management has allowed insecure habits to form, including:

- Reusing secrets across projects.

- Using the same secrets in both production and testing/staging.

- Storing secrets in shared or unsecured spreadsheets.

- Sending secrets over email, chat, and text.

- Former employees maintaining access to secrets.

When developers share secrets using unencrypted email or messaging apps, manually set up system configurations on their local device to run a program, or manually copy sensitive values to connect to another machine, those secrets are not secure.
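The first two habits in the list above, reuse across projects and across environments, are the kind of thing a simple audit can surface. The sketch below compares hashed fingerprints so the report never prints a secret; the location names and values are hypothetical.

```python
import hashlib
from collections import defaultdict


def fingerprint(secret):
    """Hash the secret so the audit output never contains the value itself."""
    return hashlib.sha256(secret.encode("utf-8")).hexdigest()[:12]


def find_reuse(secrets_by_location):
    """Map fingerprint -> sorted locations for secrets seen in 2+ places."""
    seen = defaultdict(set)
    for location, secret in secrets_by_location.items():
        seen[fingerprint(secret)].add(location)
    return {
        fp: sorted(locations)
        for fp, locations in seen.items()
        if len(locations) > 1
    }


# Hypothetical inventory keyed by project/environment.
inventory = {
    "billing/production": "k-1234567890",
    "billing/staging": "k-1234567890",
    "search/production": "k-abcdefghij",
}

reused = find_reuse(inventory)
print(list(reused.values()))
```

A truncated hash is enough for grouping, but the report should still be treated as sensitive, since short or guessable secrets can be recovered from their hashes.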

Securing shadow IT with secrets management solutions for developers

As we detailed in the last post in this series, a first step to wrangling shadow IT across all of your company’s departments is understanding employees’ responsibilities and workflows. This helps IT and security teams identify not only where employees may be using shadow IT to help them in their jobs, but why they’re using it.

Employees often use shadow IT to improve their productivity – to work around something that’s holding them back from doing their best work, on schedule. This is especially important to understand for the engineering team.

The question is how to secure developer workflows while simultaneously streamlining them. Secure credential management for developers can be trickier than it is among non-developers, because the workflows are more technical, so the fix requires a more bespoke solution. Implementing single sign-on (SSO) as part of an identity and access management (IAM) framework can go a long way to securing non-development workflows, but it doesn’t typically address developer needs.

The good news is there is arguably more opportunity within developer workflows to secure credentials and reduce friction than with other teams. It’s not particularly convenient for developers to generate SSH keys manually every time, or to store SSH keys on their local drive, or to store plaintext secrets in code. These (insecure) methods are just the way things have always been done – but they’re certainly not without friction.

However, it can be difficult for IT and security admins to know where to start, because they’re less familiar with developer workflows. That being the case, a good first step is to try to understand developers’ unique secrets management use cases. For example, it may be helpful to understand that each developer starts their day with a ‘git pull’, or why they have to google the ssh-keygen command every time they need it (because it’s so complicated).
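For admins who have not seen it, the command in question looks roughly like the one this helper builds. The key type, comment, and file path are illustrative choices, and the function only assembles the argument list; it does not run anything.

```python
def ssh_keygen_command(comment, key_path):
    """Build an ssh-keygen invocation for a new Ed25519 key pair."""
    # -t selects the key type, -C sets the comment, -f sets the output file.
    # No -N flag is passed, so ssh-keygen prompts for a passphrase itself.
    return ["ssh-keygen", "-t", "ed25519", "-C", comment, "-f", key_path]


command = ssh_keygen_command("dev@example.com", "/tmp/example_ed25519")
print(" ".join(command))
```

Passing the list to `subprocess.run(command, check=True)` would generate the key pair on a machine with OpenSSH installed.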

To find points of friction, pinpoint where developers may be taking shortcuts with secrets management, and where shadow IT may be lurking, it can help to ask questions like:

- How are you storing and sharing secrets?

- Are you running programs/queries on your local device (instead of a secure server)?

- Are you copying and pasting sensitive values to connect to another machine?

- Are you using additional tools or services to increase your productivity or do your job better?

- Are there security policies or processes you feel are slowing down your work?

Once you gather this information, then what? It’s not realistic to try and monitor all the ways developers may be sharing secrets or prevent employees from using shadow IT (you’ll be engaging in an unwinnable game of whac-a-mole). The only practical way forward is to put effective secrets management tools in place so developers can use the platforms they want, but in a secure way.

How do you do that? For starters, look for tools that use automation to eliminate the possibility of human error. That should make it easier to get buy-in too: Developers will never object to removing friction from their workflows, especially when you can automate tedious tasks in the software development lifecycle and lessen their workload.

For developers using SSH keys, for example, you can implement an enterprise password manager (EPM) like 1Password that supports secure secrets management for credentials like SSH keys in a way that fits seamlessly into developer workflows. In addition, an EPM with secrets management features can help developers securely work with API tokens, application keys, and other credentials where they need them – in their terminal and code editor. That means both stronger security and increased productivity.
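As a minimal sketch of what keeping plaintext secrets out of code can look like, the Python snippet below reads an API token from an environment variable at runtime instead of hard-coding it. The variable name `EXAMPLE_API_TOKEN` and the helpers `load_secret` and `mask` are hypothetical, and the sketch assumes that a secrets manager (or its command-line tool) has placed the value in the process environment before the program starts.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when an expected secret is not present in the environment."""


def load_secret(name: str) -> str:
    """Return the secret stored in environment variable `name`.

    Fails loudly rather than falling back to a hard-coded default, so a
    plaintext value never needs to live in the source tree.
    """
    value = os.environ.get(name, "")
    if not value:
        raise MissingSecretError(f"secret {name!r} is not set")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Return a log-safe form of a secret: only the last `visible` characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]


if __name__ == "__main__":
    # EXAMPLE_API_TOKEN is a hypothetical name used only for illustration.
    try:
        token = load_secret("EXAMPLE_API_TOKEN")
        print("token loaded:", mask(token))
    except MissingSecretError as err:
        print("refusing to start:", err)
```

Failing when the variable is missing, rather than supplying a default, is a deliberate choice here: it removes the temptation to commit a "temporary" value to the repository.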

Learn more about shadow IT

To learn more about shadow IT and how IT teams can adapt to evolving workplace challenges in a hybrid environment, catch up with three previous posts in this series:

- What is shadow IT and how do I manage it?

- Employee productivity and worker burnout, and how they impact shadow IT

- Understanding and securing shadow IT use for HR, finance, and marketing

For a complete overview of the topics discussed in this series, download the eBook, Managing the unmanageable: How shadow IT exists across every team – and how to wrangle it.

Managing the Unmanageable

Learn why teams like finance, marketing, HR, and engineering use shadow IT, the security vulnerabilities that can follow, and how to manage it all.

Download now

2024-04-10, 00:00

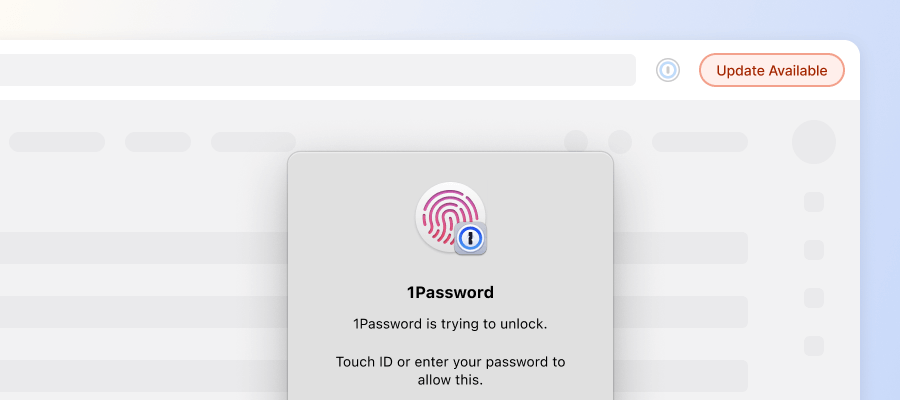

There’s one question our Security team hears more than any other: Is my 1Password data vulnerable if my device is compromised or infected with malware?

A compromised device involves full control or visibility at the system level¹, and password managers like 1Password store data that’s accessible to the system. That’s how they function. In fact, that’s how most typical apps are built.

The short answer is: Yes, your secrets are vulnerable to an attacker who’s fully compromised your device, however unlikely that situation may be. And let me be clear that if you’re an everyday internet citizen who browses securely and maintains their devices, worrying about such local threats is probably unnecessary. The longer answer is nuanced, as they so often are, and presents an interesting paradox.

So, let’s explore that paradox, then dig right into local threat protections in 1Password. After our deep dive, I’ll reveal the crucial non-security consideration involved in our threat-mitigation approach, and explain how the 1Password team strikes an incredibly delicate balance.

The challenge

Keeping information safe on your devices is essentially the reason password managers were created in the first place. Your password vault is a much more secure alternative to spreadsheets and word-processing files floating around because your data is encrypted at rest (on your device).

That means the information you store in 1Password is most secure when 1Password is locked. Attacks on your locked data, like guessing your account password or trying to find an unpatched cryptographic flaw, are passive attacks.

But keeping 1Password locked at all times flies in the face of everything else our product is known for: convenience, security on the go, ease of use, adding efficiency to your workflows, and more. It’s also not realistic because as consumers, we typically choose products we can make use of, right?

Well, using 1Password means the possibility of active attacks.

Active attacks occur when malware targets 1Password as the app is running or being unlocked. An attacker can attempt to steal your credentials as you provide them; they can also steal secrets while the app is open and unlocked. Active attacks are the larger concern for our security and development teams. They’re also the hardest to guard against.

And there’s no one-size-fits-all solution. Our approach, for example, is a bit contradictory.

We face a challenge that’s incredibly common throughout our industry: Protections are largely specific to each platform, operating system, and environment because each has its own security boundaries.

Given the varied conditions and guardrails, the protections we can build differ, and depend on the platform and type of threat we’re addressing. We have to exclude many local threats from our threat model for that reason, and often reject related bug bounty reports. We implement platform-specific protections where we can but are often limited by the operating systems themselves.

Yet we always do our best to protect your data from local attacks, and often accept reports of missing local protections we can add without negatively impacting performance, other security considerations, or the customer experience.

The protections

When 1Password is locked, we make sure your vault contents are encrypted so they’re impenetrable, even to someone with root access to the device. We accomplish this with traditional 1Password accounts by storing the secret that’s required to decrypt the vault contents, your account password, in your mind — a location presumably inaccessible to attackers. Accounts protected by SSO and passkeys rely on security features built into the device.
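To illustrate why a locked vault resists someone who only has the files on disk, here is a minimal Python sketch of deriving an encryption key from a memorized password. It is an assumption made for illustration, not a description of 1Password's actual key derivation: the function name `derive_vault_key`, the choice of PBKDF2-HMAC-SHA256, and the iteration count are all hypothetical.

```python
import hashlib
import hmac
import os

# Illustrative parameters only; these are assumptions, not 1Password's settings.
ITERATIONS = 200_000
KEY_LENGTH = 32  # bytes, enough for a 256-bit symmetric key


def derive_vault_key(password: str, salt: bytes) -> bytes:
    """Derive a symmetric key from a password with PBKDF2-HMAC-SHA256.

    Only the salt needs to be stored on the device. The password itself is
    never written to disk, so the key cannot be rebuilt from files alone.
    """
    return hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS, dklen=KEY_LENGTH
    )


if __name__ == "__main__":
    salt = os.urandom(16)  # random per-vault salt, safe to store next to the data
    key = derive_vault_key("correct horse battery staple", salt)
    guess = derive_vault_key("wrong guess", salt)
    # Constant-time comparison: a wrong password yields a different key.
    print("keys match:", hmac.compare_digest(key, guess))
```

In a sketch like this, an attacker with root access to a locked device sees the salt and the ciphertext but still has to guess the password, which is the passive attack described earlier.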

We also do everything possible to protect against same-user privileged access — a term for malware that runs on a computer with the same permissions you have, and lacks the ability to elevate its privileges.

We can prevent such malware from targeting open and unlocked versions of 1Password to steal your information on devices running specific Linux distributions (those using Wayland), macOS, Android, and iOS. There are protections in place for Windows systems as well, but Windows apps are protected in such a way that an anti-malware solution is required to protect against processes that try to debug other applications.

While we account for same-user threats, it’s important to acknowledge this kind of malware is always capable of phishing or otherwise misdirecting you to a fake version of 1Password. Let me reiterate that safe browsing and a secure device are always the first lines of defense.

There’s one other category of security threats we take into account as we fortify 1Password: forensic analysis.

Maybe the would-be attacker has physical access to your device or exploits a vulnerability. However it happens, there are plenty of tools available that allow someone to view your secrets if they get their hands on a copy of your drive (disk) or memory (RAM).

To protect your secrets, we prevent your vault contents from ever hitting the disk unencrypted. And when you unlock 1Password the traditional way, the keys to decrypt your data are unavailable via forensics alone — the account password remains with you.

When you use SSO or a passkey to unlock your 1Password account, your vault information is only accessible if the forensics can gather other data to facilitate a successful SSO or passkey authentication, and that depends entirely on your SSO configuration and local storage protections.

We minimize exposure of your secrets in memory by attempting to clear the 1Password apps of sensitive data, and minimizing the amount and types of data in memory while the apps are unlocked. It’s difficult to guarantee absolute clearance when we talk about things remaining in memory, but we aim to maintain the highest possible level of security hygiene.

While these local protections cover a number of threats at the forefront of our threat model, there’s one local threat without any viable defense.

The balance

1Password lacks the ability to protect against an attacker who’s gained full control over a device with administrative or root privileges. But there’s an important fact to acknowledge here: In this case, 1Password is far from unique.

There’s no password manager or other mainstream tool with the ability to guard your secrets on a fully compromised device.

It’s simply a limitation of the operating systems 1Password runs on: There’s no way to isolate an application to sufficiently limit the damage malware is capable of inflicting. An application can be an annoyance, but there’s no amount of annoying that will stop a determined attacker.

At the end of the day, local threats present a number of issues we’re unable to reasonably address. And that’s the very reason we’re forced to exclude them from our threat model and reject many related bug reports. While we’re unable to defend against a full compromise, we use every option available to make it difficult for local threats to access your secrets.

But there’s a critical balance we have to consider: protection and usability. Many mitigations that make the lives of local threats more difficult make your life more difficult, too.

Runtime Application Self-Protection frameworks, for example, would allow us to make even root-level attackers suffer. But these third-party products often have serious performance, reliability, and privacy considerations. The implications are serious enough that we’ve decided not to use them.

When security restrictions clash with convenience and we have to make choices, we’ll always choose to give your secrets the best fighting chance. And when that approach is layered with nearly impenetrable cryptography, same-user defenses, and the minimization of secrets in memory, we find ourselves with the deeply secure design of a thoughtfully secured password manager.

Thank you to the following contributors:

- Rick van Galen – Tech Lead, Product Security

- Adam Caudill – Security Architect

¹ To clarify, full control of the device is not limited to physical control; it includes access with administrative or root privileges.

2024-04-09, 00:00

Who’s responsible for regulating technological change in a democracy?

Verity Harding, a globally recognized expert in AI technology and public policy, and one of Time Magazine’s 100 most influential people in AI, thinks anyone – with any level of technological knowledge – can have a valid opinion about AI. After all, it may not be technological knowledge that helps us make the best decisions around how we want to use AI as a society.

Harding, who is currently the director of the AI and geopolitics project at the Bennett Institute for Public Policy and author of the book AI Needs You: How We Can Change AI’s Future and Save Our Own, talked with Michael “Roo” Fey, Head of User Lifecycle & Growth at 1Password, on the Random but Memorable podcast about technology policy and ethics.

To learn more, read the interview highlights below or listen to the full podcast episode.

Editor’s note: This interview has been lightly edited for clarity and brevity. The views and opinions expressed by the interviewee don’t represent the opinions of 1Password.

Michael Fey: Tell me about the book.

Verity Harding: I wanted to make sure I actually added something new to the AI debate, because obviously it can get a bit old and tired sometimes. People have given me lovely feedback that what I have in there is really new.

MF: Actually, before we dig too much into the book, can you give a little background on yourself and what led you to writing something like this?

VH: It’s an odd journey I had to AI. I studied history at university and the earliest part of my career was spent in politics. I was the political advisor to the then Deputy Prime Minister, Nick Clegg, who’s now president at Meta.

It was really my experiences in politics that ended up leading me to technology. I worked quite heavily on a piece of legislation in the UK that was national security related. It was about updating the powers of the security services in the UK for the digital age. Obviously, that’s an extremely controversial and difficult subject, and it was very fraught in the UK with lots of different opinions on whether it was too much overreach from the government.

What it made me realize was that there was this huge deficit in terms of knowledge about technology between the technologists and the political class who are responsible for regulating this technology for society.

“There was this huge deficit in knowledge about technology between the technologists and the political class."

I felt that this gap was not good and that there needed to be more people who could speak both languages – the political language and the technological language. Because of course, technology is extremely political. I eventually ended up joining Google and I was head of security policy in Europe, the Middle East, and Africa (EMEA) and also head of UK and Ireland policy, which was a fantastic experience.

Funnily enough, in the time between me leaving government and joining Google, the Edward Snowden revelations happened. That subject, which was already fraught, became even more fraught. We had to do a lot of work at Google, educating and explaining and helping politicians learn more about what digital privacy, security, human rights, and civil liberties on the internet really meant.

While I was at Google, the company acquired DeepMind, which is a British AI lab. I got to know the CEO and founder, Demis Hassabis, who’s a really visionary and inspirational scientist himself. I learned more from him about AI.

It was clear to me that all of the subjects that I cared most about when it came to technology policy were going to be made immeasurably better or worse by AI, depending on how we managed to navigate it. I wanted to be part of making sure that it went down the better route and not the worse route.

“It was clear to me that all of the subjects that I cared most about when it came to technology policy were going to be made immeasurably better or worse by AI."

I moved to DeepMind and was one of the really early employees there. I co-founded all of DeepMind’s policy and ethics and social science research teams, as well as things like the Partnership on AI, which is an independent, multi-stakeholder organization of tech companies and different businesses and civil society groups and academics looking at the societal impact of AI.

All of this led me eventually to writing this book because I felt that I’d had this really privileged, up-close view and perspective on AI. I wanted to be able to share that more broadly. This book is really everything I’ve learned from all of that experience.

MF: You’ve been part of the AI conversation for a long time. At what point did you start writing this book? Did the launch and popularity of ChatGPT change the trajectory of your book?

VH: It’s true, I’ve been involved in it for a really long time.

What’s so funny is that when I moved from Google to DeepMind to work on AI policy, I was thinking, well, this is going to be a much quieter life. Because at Google we were right in the thick of many news cycles – as I said, the Snowden revelations were causing a huge amount of press coverage.

I also covered other issues at Google, like online radicalization and hate speech that were also getting a huge amount of attention. Going straight from politics into dealing with media stories and being involved in the constant 24/7 news – it’s quite exhausting.

Nobody was talking about AI at all, so I thought, well, this will be a lot quieter and I’ll have time to do the deep thinking and not be firefighting every day.

Demis offered me the job when he was in the car on the way to fly to South Korea. That’s where AlphaGo happened, which created a huge amount of interest and everything really blew up straight away, so I didn’t ever get that quiet life.

When I started writing the book, I would say that the media coverage and attention around AI had started to dip a little. It was a surprise to all of us in AI that ChatGPT had the effect that it did. We all knew about these capabilities already, but something just connected and hit, and you never can quite tell when that will happen. It brought AI crashing into the limelight.

“ChatGPT brought AI crashing into the limelight."

I had either finished or was very close to finishing the book when that happened. But because I already knew about generative AI, I had written about it quite a lot in the book already. It was something that I was concerned that politicians – and society more broadly – weren’t grappling with.

Before ChatGPT we had already been warning about the possibility for deepfakes to mess with our democracy and undermine truth. We hadn’t seen much response to that, really. So, my book already covered all of those kinds of issues.

I didn’t have to change it much. I did decide to alter it a bit and include more on ChatGPT specifically, just because I think that made it easier to get my argument across. Before, I had to explain from scratch what generative AI is.

It was very helpful that ChatGPT enabled me to have this shorthand that made me pretty sure that anybody who picked up the book would know straightaway what that was.

MF: What are the most pressing concerns or misconceptions people have around AI?

VH: There’s no right and wrong answer about what people should or shouldn’t be concerned about when it comes to AI.

That’s what I say in the book: that everybody will have an opinion and everyone has a right to an opinion. Their opinion is no less or more valid based on the depth of their technological knowledge. And indeed, sometimes technological knowledge won’t help make a decision about whether we’re happy with AI being used in certain aspects of society or not.

I think one common misconception is that, if I don’t understand the deep technology and detailed technological side of AI, then I don’t have a right to have an opinion. I think there’s quite a lot of gate-keeping that happens in AI and it encourages people not to get involved.

“There’s quite a lot of gate-keeping that happens in AI and it encourages people not to get involved."

That’s partly why I wrote the book – to say, in a democracy, you do get to have a say and you can educate yourself to an extent, but you don’t need to be the world’s leading research scientist to be able to have that say.

I also personally find the conversations around AI causing human extinction very unhelpful. I don’t think that that’s an appropriate way to think about this new technology. I think that it tends to obscure some of the more pressing concerns, and it tends to obscure some of the more exciting potential, too.

We’ve ended up in quite an odd position with AI. Back when I started at DeepMind, I was very keen that we would shift the conversation from AI as Terminator, AI as Skynet and towards AI as a tool. The things to be worried about should be more realistic; things like bias and accountability and security and safety. And I think probably the latest hype cycle has not contributed to calm common sense when we’re talking about it.

MF: Is one of the driving factors around the release of this book trying to bring a more stable, measured approach to the conversation?

VH: That wasn’t the motivation. The motivation was really that I felt I had something to contribute, something new to say. The bulk of the book is these examples of transformative technologies of the past.

I think coming from both a history training and a political background, I was very conscious that the tech industry is not known for its humility and likes to think everything it’s doing is the first time anyone’s ever done anything. But while AI is new, invention is not new, and progress is not new, innovation is not new. I really had this hunch that there would be things that we could learn to help guide us with the future of AI.

I feel very strongly that it’s an extremely important and exciting technology. I don’t mean to diminish its importance by saying that I don’t think that it will cause human extinction, but that’s not to lessen the need to pay real attention to its power. I felt that we weren’t looking enough to the past and what we could learn.

I suppose the other motivation was, I really believe in democracy. It’s not necessarily always the most fashionable thing, but I think policymaking is hard graft. It’s difficult and it can be a slog and it can be boring, certainly not the sexiest thing to talk about, but it’s really important.

“We’ve managed great technological change before and I’m really confident that we can do it again."

Someone who read the book said to me just yesterday that they really got a sense from it that AI was important, but they also got a sense that humans were pretty great too. I liked that feedback because hopefully that does come across.

I feel that AI is important and it’s great, but we have done this before. We’ve managed great technological change before and I’m really confident that we can do it again.

Subscribe to Random but Memorable

Listen to the latest news, tips and advice to level up your security game, as well as guest interviews with leaders from the security community.

Subscribe to our podcast

2024-04-04, 00:00

This is the third in a series of four posts about shadow IT, including how and why teams use unapproved apps and devices, and approaches for securely managing it. For a complete overview of the topics discussed in this series, download Managing the unmanageable: How shadow IT exists across every team – and how to wrangle it.

Until recently, companies have been able to exert pretty comprehensive control over security and how people work – in an office, at a desk, with a desktop computer, and using company-provided software and servers.

But the days of protecting clearly defined perimeters from the threat of cyber attacks with strong network security and unforgiving firewalls are, for most companies, gone.

Today, thanks to hybrid work, the situation can be very different. Many companies have limited insight into where or how their employees are working. In the park? On a mobile device? Laptop? Using any number of apps and tools? Cybercriminals are taking advantage of the confusion.

This reduced control makes it imperative for information technology (IT) and IT security teams to understand where and why employees are using shadow IT, so they can find ways to protect employees from security threats no matter how or where they work.

Tracking employees’ shadow IT desire paths

Employees typically use shadow IT to be more productive. A great analogy for shadow IT is something called the “desire path” – a term landscape architects use to describe the shortcut footpaths pedestrians carve into public spaces that get them from point A to point B faster than “official” or paved walkways. (You’ve seen them. They’re the dirt paths that cut the corner on the way to the train station or shorten the walk from the parking lot to the playground, through the flower bed.)

Security solutions should secure that desire path. This means understanding departments’ responsibilities and workflows, and where employees may be using shadow IT to help them in their jobs. Don’t expect the paths to look the same, department to department. Shadow IT shows up differently across teams because it’s used to support distinct business operations, roles, and responsibilities.

IT and cybersecurity teams need to operate a bit like detectives to discover employees’ desire paths. You might be surprised to find shadow IT desire paths crisscrossing every department in your company.

Trying to stop the use of shadow IT and forcing employees to stick to the “official path” of company-approved tools isn’t a particularly effective strategy. The most realistic and effective shadow IT security strategy is to secure the desire path for each individual employee, so they can use shadow IT securely.

In other words, to protect against the risk of security breaches, embrace shadow IT – and secure it.

Shadow IT on the finance team

The finance team is typically high on the security team’s list because it literally has the keys to the bank. The finance team handles critical financial data such as the company’s banking credentials, and sensitive information like audit reports and financial reporting.

Sometimes finance employees need to share sensitive documents with external partners like investors, board members, or auditors. And if they do that through insecure channels like email or SMS, it could open the door to unauthorized access.

Typical finance team workflows and responsibilities include:

- Leading financial planning and management, forecasting, and risk management and mitigation

- Optimizing budgets

- Identifying cost-saving opportunities across the company

- Working with the audit committee

- Sharing financial reporting

- Ensuring adherence to compliance standards

With these finance team workflows in mind, where might shadow IT be lurking? Some typical information security vulnerabilities to investigate include:

- Services used often that aren’t supported by SSO, such as bank accounts

- Unencrypted emails or messaging applications used to share data with internal and external teams

Shadow IT on the HR team

The human resources (HR) team handles confidential employee information every day in its efforts to hire, develop, and retain talent for the company. HR also ensures the company is compliant with benefits administration and labor laws. In addition, they focus on creating and implementing employee management strategies, managing training and development programs, and fostering a positive workplace culture.

Typical HR team workflows and responsibilities include:

- Sharing sensitive information about employees with internal and external teams

- Managing the employee lifecycle, including a critical role in onboarding and offboarding

- Using and sharing credentials for recruiting/hiring platforms and employee background checks

Based on these workflows, here are some areas where you may find vulnerabilities due to shadow IT lurking in HR:

- Storage of sensitive employee data, including personally identifiable information (PII)

- Recruiting/hiring platforms or apps

- Employee benefit vendor platforms

- Unencrypted emails sharing confidential data with external vendors or consultants

Shadow IT on the marketing team

The marketing team handles more sensitive data and information than you might expect. This might include campaign spending and reporting data, as well as customer information.

They also are on the front lines of social media and may be using multiple platforms or apps for customer support or top-of-funnel customer acquisition. As the guardians of your company’s brand reputation, it’s critical that marketing’s accounts aren’t compromised.

Typical marketing team workflows and responsibilities include:

- Working with cross-functional teams to generate leads

- Reporting campaign details, such as budget and ROI

- Working with external agencies or freelancers, with whom they often need to share credentials

- Generating and posting marketing and thought-leadership content

Knowing marketing’s responsibilities, it can be useful to check the following for information security risks and shadow IT use:

- Services used often that aren’t supported by SSO or don’t support multiple accounts or logins, such as social media platforms

- Unencrypted emails or messaging apps to share data or credentials across internal and external teams

- Apps for customer relationship management, project management, email marketing, and website analytics, many of which may not be covered by SSO

Securing shadow IT vulnerabilities at the employee level

Once you’ve identified the shadow IT desire paths for each team, then what? In terms of security measures or security tools, it’s most important for security professionals to secure credential sharing, and to standardize and secure access to apps and tools.

You can secure authentication, password management, and credential sharing using an enterprise password manager (EPM), which provides teams with a centralized solution to use, access, and share sensitive company data. It’s important that the EPM provides role-based access controls to ensure that users adhere to your company’s cybersecurity policies to defend against data breaches, cyberattacks like ransomware, and social engineering attacks like phishing.

EPMs can help you make the easy way to work the secure way to work. For example, EPMs can autofill time-based one-time passwords (TOTP) in addition to standard passwords. That enables security teams to require multi-factor authentication for providers that offer it, while at the same time streamlining the sign-in flow, rather than adding friction to it.
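For readers curious about what a time-based one-time password actually is, the following Python sketch computes one along the lines of RFC 6238 (which builds on the counter-based scheme in RFC 4226). It is an illustration under stated assumptions, not how any particular password manager implements autofill: the function names `hotp` and `totp` are chosen here for clarity, the hash is fixed to SHA-1, and the secret is passed as raw bytes, whereas real services usually hand out a Base32-encoded secret.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


if __name__ == "__main__":
    # Published RFC test secret; never use it for a real account.
    demo_secret = b"12345678901234567890"
    print("current code:", totp(demo_secret))
```

Because the code depends only on a shared secret and the clock, a tool that already stores the secret can fill in the code automatically, which is why this second factor need not add friction to sign-in.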

To learn more about shadow IT and how to secure it to reduce risk of security incidents, stay tuned. Now that we’ve covered what to look for in teams like HR, finance, and marketing, next we’ll discuss the unique needs of developers.

2024-04-03, 00:00

What’s good for business is often bad for security. That’s the inescapable conclusion of the 1Password State of Enterprise Security Report this year.

Here’s the backdrop, and it should be familiar by now: Work has, slowly and then all of a sudden, expanded. No longer confined to the office ecosystem, work happens in coffee shops and at home and at the airport, on company-provided laptops and the shared computer in the living room, on the family iPad and the phones in our pockets.

All that work leaves a residue of (often sensitive) data as it flows through managed apps like the company productivity suite and unsanctioned apps like the file-sharing service that a handful of people use, unbeknownst to IT.

With the explosion in the number of apps used for work, it’s a good time for employee productivity, and artificial intelligence (AI) has entered the picture to boost output even further. But IT and security teams are struggling to keep up, especially when they’re constrained by limited resources.

In the 1Password report, Balancing act: Security and productivity in the age of AI, we surveyed 1,500 white-collar employees in North America, including 500 security professionals. What emerged from our findings is a tension between productivity and security that has taken on a new urgency.

Let’s start with the growing pressure on employees to be productive.

Risk management suffers in the race for peak productivity

More than a third of workers (34%) use unapproved apps or tools to get things done. This is shadow IT, and its use won’t come as a surprise to security professionals.

But the scale of the problem might. Of that 34% who use shadow IT, each employee uses an average of five unapproved apps or tools. In a company of just 300 employees, that’s more than 500 potential new threat vectors.
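The arithmetic behind that estimate can be checked with a short Python sketch. The function name `shadow_it_apps` is hypothetical, and the calculation assumes every shadow IT user runs the average number of apps and that each app counts as its own potential threat vector, so it is an upper-bound style estimate rather than a measurement.

```python
def shadow_it_apps(employees: int, adoption_rate: float, apps_per_user: float) -> int:
    """Estimate how many unapproved apps are in use across a company."""
    shadow_users = employees * adoption_rate  # staff who use any shadow IT
    return round(shadow_users * apps_per_user)


if __name__ == "__main__":
    # 34% adoption and five apps per user are the survey figures quoted above.
    print(shadow_it_apps(300, 0.34, 5))
```

With the survey figures, 300 employees give roughly 102 shadow IT users and about 510 unapproved apps, consistent with "more than 500".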

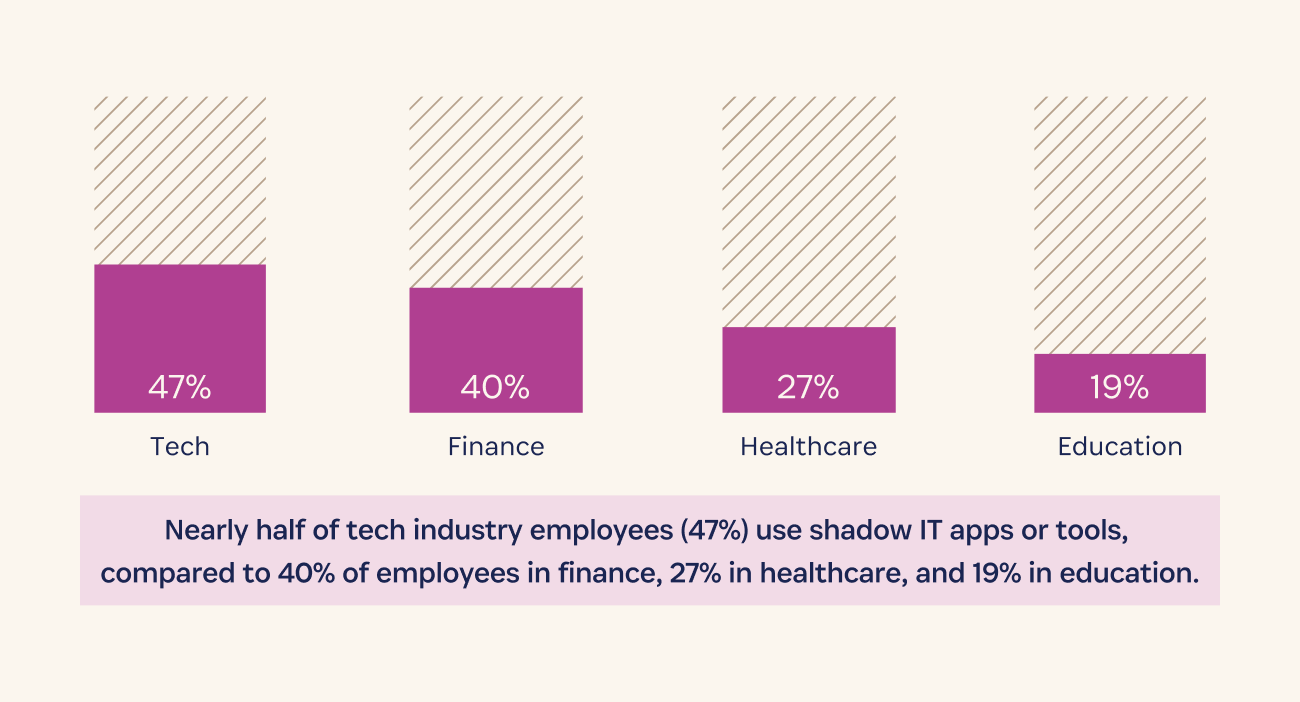

The problem is most pronounced in the tech industry, with nearly half of employees saying they use shadow IT, compared to 40% of employees in finance, 27% in healthcare, and 19% in education.

Security teams are trying to keep up. 92% of security pros say their company requires IT to approve software that’s used for work. But 59% say they have no control over whether employees follow those information security policies.

Oversight is more achievable if employees use only work-provided devices, which 84% of companies say they require.

But 17% of employees say they never work on a company-provided device, using only personal or public computers for work instead.

Security teams struggle to adapt to a new threat landscape

More than two-thirds (69%) of security pros say they’re at least partly reactive in terms of security risk mitigation. That’s because they’re either pulled in too many directions (61%), don’t have the necessary budget (24%), or are understaffed (21%), among other reasons.

As a result, security teams are worried. When asked what keeps them up at night, 79% of security pros listed inadequate security protections. Among their top concerns: external threats like phishing or ransomware (36%), internal threats like shadow IT (36%), and human error (35%).

“Phishing scams, ransomware attacks, and a patchwork system give our security team heartburn. They’re the tireless ninjas keeping the bad guys out, so next time you see them, offer a coffee (or a medal). We’re in this digital battle together.” – IT Security VP, tech hardware company

Focus on productivity opens the door to cybersecurity threats

Understandably, productivity is top of mind for employees. Unsurprisingly, in the pursuit of productivity, security suffers. 54% admit to being lax about their company’s data security policies, with 24% of those saying they’re just trying to get things done quickly.

Despite the well-known vulnerabilities associated with weak or reused passwords, 61% of employees (64% of managers and 53% of non-managers) confess to poor password habits, which increase the risk of data breaches. And half of employees say they slipped up on security in the past year, for example by clicking a link in a suspicious email or sharing credentials for work with people outside the company, making companies more vulnerable to a cyberattack.

This is a scenario seemingly tailor-made for AI to deepen the tension between security and productivity. 57% of employees say using generative AI applications makes them more productive.

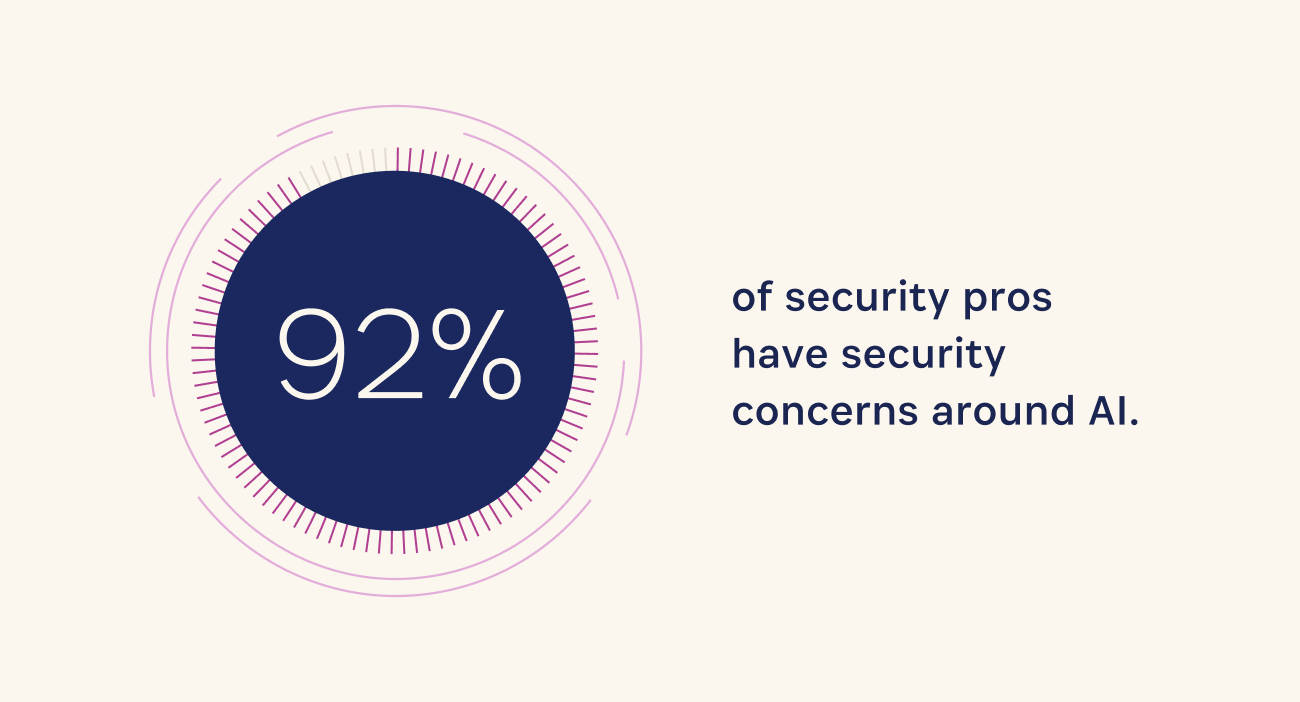

But a full 92% of security pros have security concerns about AI, citing employees entering sensitive data into the tools, using AI systems that were trained with bad data, or falling for cybercriminals’ increasingly sophisticated phishing attempts powered by AI.

Download the 1Password State of Enterprise Security Report 2024

The delicate balance between productivity and security isn’t new, but the conditions leading to a potential breaking point are. While security teams are struggling to reduce the risk of cybersecurity incidents as workplace habits shift, employees are likewise singularly focused on the pursuit of productivity. Old concerns like the security of authentication methods haven’t gone anywhere, while new concerns only complicate matters.

We’ve only scratched the surface of this year’s report. Download 1Password’s State of Enterprise Security Report for the full breakdown.

Balancing act: Security and productivity in the age of AI

Productivity and security are often in tension. Learn how today’s shifting landscape of hybrid work and AI has affected that tension, and how security professionals and workers are coping.

2024-03-28, 00:00

This is the second in a series of four posts about shadow IT, including how and why teams use unapproved apps and devices, and approaches for securely managing it. For a complete overview of the topics discussed in this series, download Managing the unmanageable: How shadow IT exists across every team – and how to wrangle it.

High productivity levels are generally a good thing. For most organizations, the answer to the question, “Is it important for your employees to be productive?” is a resounding “Yes!” However, when employees ask to use a tool or app to boost productivity, companies may want to say “yes”, but often find themselves saying “no”.

What gives? Security concerns. And they’re legit. Companies are in the midst of experiencing a brave new world called hybrid work. Gone are the days of on-premises servers, software, and devices (and employees) that were relatively straightforward to manage and secure.

Now knowledge workers can get things done in coffee shops and their own living rooms. Companies turn to cloud services to support flexible working with “access from anywhere” apps and online collaboration tools, collectively known as software-as-a-service (SaaS).

Employees have become much more likely to select these cloud services and apps (not all company-approved) to get their work done. While hybrid and remote work was slowly starting to become a thing before, the pandemic accelerated it, and here we are.

So the million-dollar question is: If employees want to use their preferred apps and tools to be more productive, how can companies leverage this employee productivity while still protecting themselves from cybersecurity risks?

And what does worker burnout (the opposite of employee productivity) have to do with the IT department’s security strategy for shadow IT?

Quick review: What is shadow IT?

The first post in this series, What is shadow IT and how do I manage it?, explains what shadow IT is and what it may look like across different company departments.

To recap, here’s a quick definition: Shadow IT refers to the apps and devices that aren’t licensed and managed by a company.

These aren’t obscure apps used for nefarious purposes. Examples of shadow IT can be anything from Google Docs to social media. The issue is that employees may enter company information or client data in them and, if they log in with a weak or reused password, it can cause vulnerabilities that may result in a data breach.

A changing work environment: Securing the new perimeter

This new hybrid, cloud-based work environment and employee experience requires a shift in companies’ security strategy. There are no walls. Instead, security and IT teams are managing a nebulous perimeter that’s constantly shifting and often spans the globe. In The new perimeter: access management in a hybrid world, we highlight four key considerations for securing the new perimeter of a hybrid workforce:

- To address shadow IT, start with identity. 70% of data breaches involved an identity element in 2023. Identity issues, which include stolen passwords, are expected to be even worse in 2024, increasing to as much as 90%, according to Forrester.

- Secure access to managed and unmanaged apps. Any number of employees are using multiple devices to access all sorts of apps and websites during their workday. An enterprise password manager (EPM) ensures that employees use strong passwords no matter what they access and on what device. Companies can set their own minimum security requirements, and the EPM will ensure that every sign-in, on every device, meets those requirements.

- Minimize security stack costs. Single sign-on tools (SSO) are great for managing access to the software and tools IT knows about, but aren’t enough to corral shadow IT. And the costs of putting more apps behind SSO can add up. It takes time for implementation and custom configuration, plus there’s typically an additional charge to place most apps behind SSO (the “SSO tax”).

- Debunk the false tradeoff of workforce productivity versus security. Employee productivity versus security doesn’t have to be an either-or choice. In fact, it can’t be, because it’s a futile exercise to try and stop shadow IT at your organization. It’s everywhere: In one study, 85% of employees said they knowingly broke cybersecurity rules to accomplish a task. Instead, the challenge is to find ways to secure each individual employee’s preferred ways of working.

Full-spectrum shadow IT challenges: Employee productivity to worker burnout

Productive employees. Burned-out employees. At the opposite ends of the spectrum, yet both contribute to the risks of shadow IT at companies everywhere.

At one end, employees are using shadow IT to help them increase productivity levels or do their jobs better. A Gartner survey shows that we’re using twice the number of apps we did in 2019, and usage continues to grow.

At the other end of the spectrum are employees who are being stretched too thin. And it’s not a few outliers. A 1Password report on burnout revealed that 80% of office workers feel burned out, and one in three workers say burnout is affecting their initiative and motivation levels.

It’s worth noting that this research was conducted during the height of the pandemic, when we’d expect burnout levels to be particularly high – but it’s also worth noting that we haven’t solved burnout since then.

In addition to the obvious physical and mental health effects, worker burnout can present a severe, pervasive, and multifaceted cybersecurity risk. This is because employees who are feeling burned out can be more lax about following security protocols. They also are more likely to use shadow IT. Here are some additional eye-opening findings from the 1Password report:

- 3 times as many burned-out employees as non-burned-out employees maintain that security policies “aren’t worth the hassle” (20% vs. 7%), regardless of incentives.

- A 21-point gap separates those who are burned out (59% of whom say they follow their companies' security rules) from those who are not (80% of whom say they follow the rules).

- 60% more burned-out employees than non-burned-out employees are creating, downloading, or using shadow IT (48% vs. 30%).

- 59% of burned-out employees have poor practices when setting up work passwords, compared to 43% of non-burned-out employees.
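The findings above mix two kinds of comparison: relative ratios ("3 times as many", "60% more") and percentage-point gaps ("a 21-point gap"). As a minimal sketch for checking how each figure relates to the quoted percentages, the hypothetical helper below computes both; only the percentages come from the report.

```python
def compare_rates(burned_out_pct: float, other_pct: float) -> dict:
    """Compare two survey percentages two ways.

    point_gap: difference in percentage points.
    ratio: how many times larger the first rate is than the second.
    """
    return {
        "point_gap": burned_out_pct - other_pct,
        "ratio": round(burned_out_pct / other_pct, 2),
    }


# Percentages quoted in the list above (burned-out vs. not burned-out).
print(compare_rates(20, 7))    # "aren't worth the hassle": roughly 3x
print(compare_rates(59, 80))   # follow security rules: 21-point gap
print(compare_rates(48, 30))   # shadow IT use: ratio 1.6, i.e. 60% more
print(compare_rates(59, 43))   # poor password practices
```

Reading the ratio and the point gap side by side makes it easier to see that "60% more" describes a relative increase (48% vs. 30%), not an 18-point difference.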

Why is this so concerning? In addition to the important concerns about human health and employee well-being, burnout and the resulting low levels of employee engagement negatively affect adherence to security protocols.

Bottom line? Nobody wins when an employee is burned out. When workers are so tuned out that they’re less likely to follow security rules, and more likely to use weak passwords or fall for phishing scams, it increases cybersecurity risks.

Cybersecurity team burnout risk

Adding complexity to the challenges of securing the new perimeter, it turns out (surprise!) that IT/security professionals aren’t superhuman. The 1Password report shows that they’re experiencing burnout in even greater numbers than the general employee population (84% vs. 80%).

While 89% of security professionals say they favor security over convenience, they also admit that they take shortcuts. For example, they use shadow IT (29%) or work around company policies to solve their own IT problems themselves (37%) or because they don’t like the company-approved software (15%).

Even more worrying, security professionals are twice as likely as other workers to say that due to burnout, they’re “completely checked out” and “doing the bare minimum at work” (10% vs. 5%).

That’s not good news, especially if a company has a reactive approach to managing shadow IT that depends on the vigilance of team members and their ability to quickly respond to problems.

Take a proactive approach to managing shadow IT

As security professionals know, prevention is often more effective than protection. Taking a proactive approach to managing shadow IT – securely enabling it – is the only viable path forward.

It starts with understanding employee productivity, workflows, and potential security vulnerabilities in every department. A next step is working to secure the “path of least resistance” for all employees at the individual level so they can use the apps and tools they need to boost productivity.

The good news is, by securing credential sharing and standardizing how access to tools happens, you also protect your organization against lax security practices and behaviors.

Next, we’ll explore how to identify shadow IT, what it may be used for (such as project management, social media, productivity tools, and file sharing), and common vulnerabilities for different departments, including Finance, HR, Engineering, and Marketing.

To learn more, follow this series on the 1Password blog exploring shadow IT over the next few weeks or download the ebook: Managing the unmanageable: How shadow IT exists across every team – and how to wrangle it.

Managing the Unmanageable

Learn why teams like Finance, Marketing, and HR use shadow IT, the security vulnerabilities that can follow, and how to manage it all.

2024-03-25, 00:00

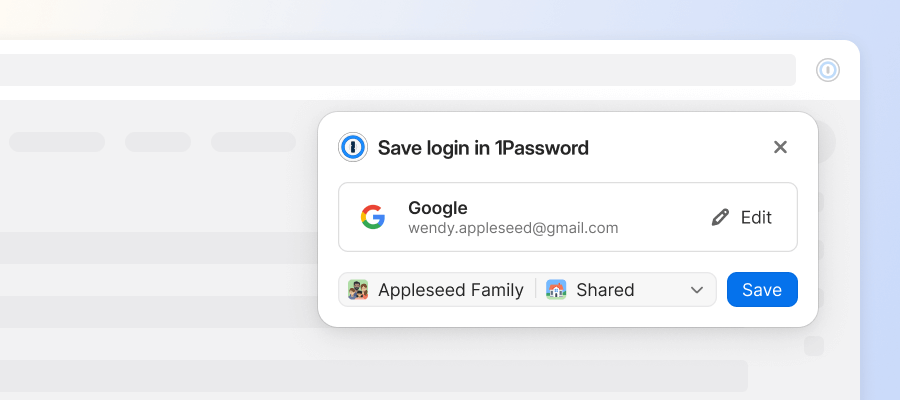

1Password’s Go-to-Market (GTM) team is critical to achieving our mission of helping businesses, families, and individuals protect their passwords and other private information.

GTM helps our company understand the real-life problems that businesses are facing and how 1Password is best equipped to solve them. It’s a fast-growing team and we’re delighted that women like Jess Plowman, Senior Sales Development Representative, and Tiphanie Futu, Sales Enablement Manager, are playing such an integral role in its success.

Curious what it’s like to work in the GTM team at 1Password? Read on to learn about Jess and Tiphanie’s professional journeys, as well as their current role and day-to-day responsibilities.

Jess Plowman, Senior Sales Development Representative

Why did you join 1Password, and how did you end up here?

Back in 2022, I was made redundant from my previous role working as a sales development representative (SDR). I shared my experience on LinkedIn, and 1Password reached out to see if I would be interested in applying.

After doing my research, learning about the company’s values, and meeting the team, I decided it would be the perfect next step to develop my career. And I’ve never looked back.

What do you enjoy most about your role?

The highlights of my role involve speaking to a diverse range of people on a daily basis, learning about their needs for a password manager and how best I can assist them.

1Password’s culture focuses on development and progression, so I love helping with the onboarding process and watching my colleagues progress in the company and grow their skills. This focus on development and progression also helps me in my personal growth!

If you were interviewing for a role on your team at 1Password, what are your best words of advice?

First of all, I would 100% recommend it! We’re a friendly and welcoming team! The SDR role is a great way to get started in the cybersecurity industry, learn about sales and develop an in-depth knowledge of the product.

Remember to be yourself, be open to learning and ask lots of questions. The role is remote but you’ll never feel alone!

How would you describe your team in three words?

Supportive, hardworking and fun!

Tiphanie Futu, Sales Enablement Manager

Why did you join 1Password, and how did you end up here?

In 2022, I was impacted by a round of layoffs like many other people who work in tech. At that time, the company I had been working for helped us and shared our profiles on LinkedIn.